Why Healthcare AI Fails and How to Prepare AI for Real Clinical Use

Healthcare AI failures often show up as small breakdowns that are easy to miss at first: an assistant pulls the wrong detail from the chart, skips a key lab, or refuses to answer, and the clinician ends up spending extra minutes reconstructing the story by hand.

This article walks you through real examples and the operational root causes behind them, then shows how to prevent failed AI output in healthcare through AI-ready data, workflow-aware design, and interoperability, giving you a practical lens for planning custom AI software development that clinicians and admins can actually trust.

In 2025, one clinician’s “quick check” turned into a patient scare: an AI-enabled ECG interpretation flagged a heart attack for a healthy 29-year-old woman, and the message was reportedly shared before anyone paused to validate it. That is exactly why AI makes mistakes in the real world: the model can be statistically confident while still being clinically wrong.

If you need immediate consulting for efficient AI solution engineering, please contact our healthcare AI development experts so we can help you avoid mistakes.

The more common failure mode is quieter. When AI-powered documentation tools are dropped into the EHR, the results are mixed: in a 2024 health system trial, about half saw a better “after-hours” EHR experience, while a large subset reported no meaningful time benefit.

Both examples reflect AI limitations in healthcare operations:

- narrow inputs (the tool only “sees” what it is fed, so it misses important context)

- unclear handoffs (people assume the next step is covered, but nobody owns verification)

- automation bias (humans start trusting the output because it looks authoritative)

These are not edge cases; they are inside today’s healthcare AI trends, including multi-agent AI workflows where one model hands work to another, and vibe coding prototypes that reach production before clinical validation catches up. The gap is structural: why healthcare AI projects fail often comes down to why AI models struggle with EHR data and persistent data quality issues in healthcare AI, which also show up downstream in postmarket reality, including early device recalls tied to validation gaps.

Why TATEEDA is qualified to speak about healthcare AI failures

The conversation around AI in medicine is usually framed as progress: The team focuses on application speed, automation workflows, and new model releases.

“In practice, the harder question is what happens when the system is wrong… The failures of AI in healthcare rarely look like a dramatic crash with the ‘Blue Screen of Death’ shutting the operation down. A single confident but incorrect output can send a team down the wrong path, waste critical minutes, and in the worst cases endanger a patient’s life, which is why expert verification and error flagging must remain part of the workflow until the model proves itself in real use.”

Slava K., CEO at TATEEDA

These AI errors show up as subtle misinformation, missing context, and confident summaries that can endanger patients if no one pauses to verify. That is the lens we bring: how to prevent AI failures and errors before they reach clinical teams, and how to design workflows that help avoid AI misdiagnoses rather than quietly amplifying risk.

TATEEDA is a custom healthcare software development company headquartered in San Diego, California. Since 2013, we have built software for U.S. providers, payers, and healthtech firms. Our perspective comes from delivery work that lives inside real EHR-driven operations: staffing, scheduling, intake, billing, and device-connected workflows. Over the years, we have worked with AYA Healthcare (travel nurse staffing) and Abbott (biotech and medical devices) as one of their software development partners.

That experience exposed the real reasons AI succeeds or fails in production, including data bias, inconsistent documentation, and the gaps between what the model “sees” and what clinicians actually need at the point of care.

Today, our teams help healthcare organizations reduce risk by building systems with practical guardrails:

- Build and integrate custom healthcare platforms for U.S. organizations, with verification steps where AI output could affect care.

- Connect EHR, staffing, and billing data to AI layers in ways that preserve context and reduce refusal loops.

- Architect and implement AI agents that support scheduling, credentialing, patient intake, and revenue cycle work without turning automation into unchecked decisions.

- Design data pipelines that reduce bias, preserve provenance, and keep protected health information within compliant boundaries, so models are trained and used on inputs that hold up under real clinical pressure.

Table of Contents

AI Failures in Healthcare: Real Examples

Google’s Med-Gemini offered a reminder that “close enough” language is not close enough in medicine. In a public example that was meant to show clinical value, the model referenced a nonexistent brain structure, “basilar ganglia,” effectively blending two real terms into one fake one. The troubling part was not just the typo-like surface. It was that the mistake made it into outward-facing material, then was quietly edited, which is exactly how small errors can slip into bigger workflows, especially when staff are rushed and the output “sounds medical.” See the details in this Verge report.

The FDA’s internal assistant, Elsa, showed the same failure mode in a different setting: citations. Staff testing the tool reported that it could hallucinate studies and misrepresent research, forcing users to verify everything line by line instead of trusting the summary. When the goal is speed, a system that invents support is not a helper, it is a risk amplifier; it can quietly contaminate a review trail with false confidence. CNN covered the episode in this transcript segment.

Then there is the adversarial angle. A Mount Sinai team found that popular chatbots can be nudged into confident misinformation by a single fabricated medical term inside a prompt, after which the model may elaborate as if the fake condition were real. Their more hopeful finding was that a simple caution line in the prompt reduced those errors, which points to a practical design lesson: safer behavior often starts with skeptical defaults, not just “better prompts.” The study summary is in this Mount Sinai release.

It is not surprising, then, that trust remains fragile. A Salesforce Pulse of the Patient Snapshot found that 69% of U.S. adults are uncomfortable with healthcare companies using AI to diagnose them, as described in Salesforce’s research note. And in a December 2025 YouGov survey, 46% of U.S. adults expressed distrust of AI in health and personal care, while only 23% expressed trust, summarized in this YouGov analysis.

When AI Breaks the Flow of Clinical Work

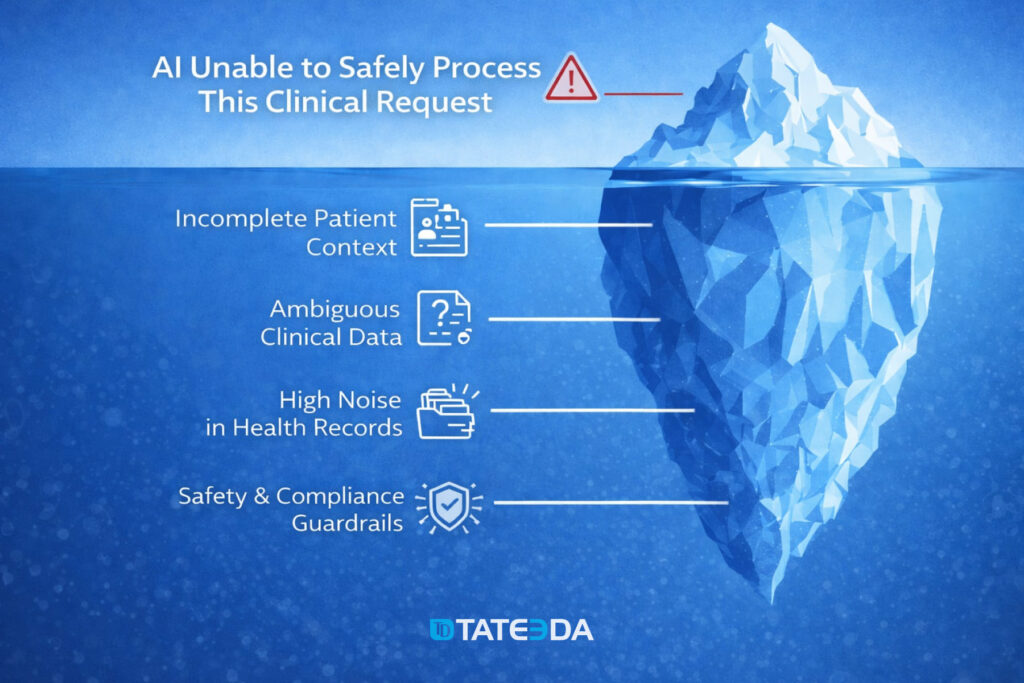

It usually happens in the middle of a routine task. A clinical clerk tries to summarize a patient’s recent lab trends before a physician rounds. A nurse asks the AI assistant to extract key medication changes from discharge notes. An operator in a call center attempts to confirm whether a referral meets internal criteria. Instead of help, the AI window returns a refusal or a vague warning, and the workflow stalls at the worst possible moment, which is why healthcare AI implementation challenges show up so quickly in day-to-day care.

What follows is familiar. The user retries the prompt, slightly rephrases it, then abandons the tool altogether. They copy data manually from the EHR, scan multiple screens, and work around the system they were told would “save time.” The issue is rarely the prompt itself. More often, the AI simply cannot act safely within the clinical context it sees, even though that context feels obvious to the human using it. This gap is a big part of why healthcare AI projects fail, and it also feeds longer-term healthcare AI adoption problems across teams that cannot afford repeated slowdowns.

What’s really happening beneath the surface

🗂️❓ Incomplete patient context

The AI does not see the full picture the clinician assumes is available. Key elements such as recent labs, active medications, prior diagnoses, or external encounter data may sit in separate systems or be excluded from the AI’s input scope. When context is fragmented, the model cannot safely interpret or summarize clinical information.

🔎🔀 Ambiguous clinical data

Much of healthcare data lives in free-text notes, dictated summaries, or copied-forward documentation. To a human reader, meaning is inferred instantly. To an AI, the same text may contain contradictions, outdated references, or unclear timelines. When the model cannot confidently resolve ambiguity, it opts to stop rather than risk an unsafe output.

🧹📁 High noise in health records

Administrative fields, billing codes, repetitive templates, and auto-generated content often outweigh clinically relevant signals. Important details are present, but buried. The AI struggles to separate what matters now from what is historical, duplicated, or irrelevant to the current task, which connects directly to data quality issues in healthcare AI.

🧷🔐 Safety and compliance guardrails

In clinical environments, AI systems operate under strict rules. When a request approaches diagnosis, treatment recommendations, or patient-specific judgment without sufficient validation, protective limits are triggered. From the user’s perspective, this looks like an unexplained refusal. In reality, it is the system that prevents potential clinical or legal risk.

Where Healthcare AI Initiatives Quietly Go Off Track

Once AI starts breaking down during everyday clinical tasks, a broader and more systemic pattern becomes visible. Many healthcare AI initiatives begin with strong engineering ambition but limited operational grounding. Teams often assume that once a system is technically capable, clinicians, nurses, and administrative staff will naturally adapt their workflows around it. In practice, many early deployments stall after pilot phases because they disrupt existing workflows rather than supporting them.

In reality, this assumption exposes the core AI limitations in healthcare operations, where technology is introduced without fully accounting for how care is actually delivered minute by minute.

Healthcare environments are shaped by fragmented systems, shifting priorities, and constant interruptions. AI models are typically trained on historical snapshots of data, yet clinical work depends on forward-looking judgment, evolving patient conditions, and context that changes throughout the day. Recent analyses of enterprise healthcare AI tools found that clinicians spend up to three hours per week correcting or bypassing AI outputs due to missing context or misinterpreted patient data.

This disconnect explains why AI models struggle with EHR data, which is often distributed across multiple systems, filled with copied-forward notes, delayed updates, and documentation created primarily for billing or compliance rather than decision support.

When these systems fail, the root cause is rarely a single technical flaw. More often, it reflects unresolved data quality issues in healthcare AI, such as missing lab values, inconsistent coding, unstructured clinical notes, and limited access to external records. At the same time, workflow realities are overlooked: clinicians need answers quickly, within the tools they already use, without switching screens or reconstructing context manually. Clinical responsibility adds another layer, as AI tools must operate within strict safety boundaries, avoiding diagnostic claims or recommendations unless the underlying data and validation are sufficient.

To understand why these breakdowns repeat across organizations, it helps to look beyond individual use cases and examine the structural conditions under which healthcare AI systems are conceived, built, and deployed.

Structural root causes behind healthcare AI failures

| Root cause | Explanation |

|---|---|

| Leadership and problem-definition gaps | Many AI initiatives begin without a clearly articulated clinical or operational problem. Leadership may express a general desire to “apply AI” but fail to define which decisions should improve, which users are involved, or how success will be measured. As priorities shift, engineering teams are forced to adjust direction midstream, producing systems that never fully align with real clinical needs. |

| Insufficient data readiness | Organizations frequently overestimate the quality and usability of their clinical data. EHR records may be incomplete, outdated, or shaped by billing workflows rather than clinical clarity. Leaders are often unprepared for the time, governance effort, and coordination required to make data reliable enough for AI use, reinforcing persistent data quality issues in healthcare AI projects. |

| Technology-first development | Data science teams may prioritize advanced models over practical problem-solving. In healthcare, this leads to tools that perform well in isolation but fail in production environments, where speed, interpretability, and accountability matter more than technical sophistication. |

| Weak operational and technical foundations | AI systems depend on stable infrastructure, including secure data pipelines, real-time integrations, monitoring, and access controls. When these foundations are underfunded or fragmented, deployments slow down, data arrives late or incomplete, and model performance degrades once exposed to live clinical workflows. |

| Mismatch between AI capabilities and clinical complexity | Some organizations attempt to apply AI to problems that exceed current technological limits, such as nuanced diagnostic reasoning or high-stakes treatment decisions without sufficient context. When models are pushed beyond validated boundaries, safety controls trigger refusals or outputs become unreliable, eroding trust among clinicians and staff. |

Seen together, these factors explain why even well-funded AI initiatives often stall after early pilots. Without aligning…

- data readiness (clean, complete, and accessible clinical information)

- workflow realities (how staff actually work under time pressure)

- clinical responsibility (clear limits on what AI can safely suggest)

…AI tools remain isolated layers on top of existing systems. They perform well in controlled demos but fail to withstand real clinical pressure, where incomplete context, compliance constraints, and human accountability cannot be ignored.

Prepared data improves model performance and clinical usefulness

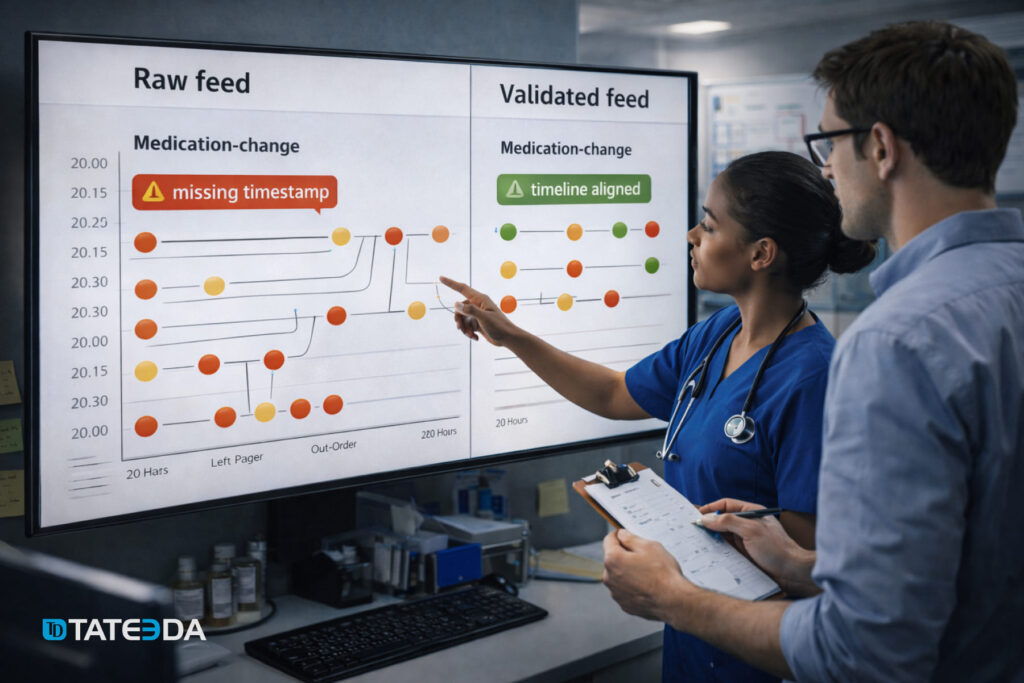

When AI is trained on datasets that do not reflect the full variability of real clinical care, it can fail in ways that feel unpredictable to the people using it. A nurse asks for a clean medication-change summary and gets a confusing timeline because updates were documented late. A clerk requests lab trends, and the output misses key results because they live in a separate feed.

These are not just model issues. These are data issues that show up as AI mistakes in the middle of real work.

That is why disciplined data preparation matters in healthcare. It is less about polishing spreadsheets and more about shaping data so it reflects actual patient journeys: multiple encounters, amended notes, revised orders, outside records, and the everyday mix of structured codes and free text. When the dataset carries that reality, the model is far less likely to guess, overreach, or refuse due to uncertainty.

Strong preparation practices usually include:

- Repeatable ingestion and transformation (So each new batch is handled consistently, and rules do not drift week to week.)

- Shared semantic definitions (So “active medication,” “encounter,” or “abnormal lab” means the same thing across teams and systems.)

- Consistent metadata and lineage (So you can trace where a value came from, when it was entered, and whether it was corrected later.)

- Controlled integration of new streams (So adding a new clinic, device feed, or partner system does not silently break assumptions.)

How things can go from worse to better when solutions are correctly arranged

This scenario is hypothetical, but it is built from patterns that come up again and again when healthcare organizations try to introduce AI into daily clinical work. Picture a regional provider network: one brand, one IT team, multiple outpatient clinics, and thousands of patient visits per week. Leadership wants an AI assistant that can draft visit summaries, pull recent lab trends, and flag obvious documentation gaps so clinicians spend less time hunting through the chart.

At first, the team deploys the assistant to a pilot group, for example a dozen clinics, and connects it to the EHR “as is.” In a demo, it performs well because the test cases are clean and the context is controlled. In live use, it struggles: key pieces of the patient story sit in different parts of the record, notes contradict each other, and timestamps reflect when something was entered, not when it actually happened. The assistant starts producing incomplete summaries or refusing requests because it cannot verify what it is seeing.

Trust drops fast. Staff revert to manual chart review, copy-pasting fragments into messages, and adding extra verification steps that were not there before. Instead of saving time, the tool becomes one more thing to manage during a busy day.

Then the organization fixes the foundation before expanding the rollout. It standardizes what “active medication” means across sites, cleans up problem list logic, aligns timelines for orders, administrations, and results, and reliably links labs, notes, and encounters. It also marks corrected entries so the assistant does not treat old mistakes as current truth.

After that, the AI assistant behaves differently. It can retrieve relevant context faster, produce summaries with fewer contradictions, and trigger fewer safety refusals because the underlying data tells a clearer story. The experience shifts from “extra work” to practical help, not because the model magically improved, but because the environment it operates in finally matches the reality of clinical care.

AI-ready Clinical Data vs Data that Derails Healthcare AI

| Gartner expects that by 2026, roughly 60% of AI initiatives will be dropped primarily because the underlying data is not AI-ready, leaving teams without the reliable, consistent inputs these systems need to work in practice. |

This table exists for a practical reason: it shows why an AI tool that looked fine in a pilot can still create AI medical mistakes once it meets real EHR workflows. It compares what “AI-ready” clinical data looks like against the kinds of gaps that repeatedly cause AI failures in healthcare.

Leaders often ask who is responsible for AI mistakes in healthcare. This side-by-side view helps answer that question without blame. It makes it easier to see whether the problem sits in the data itself, in how information moves through systems, or in the way the AI feature was introduced to staff.

Many teams run into AI implementation mistakes in healthcare, such as weak integrations, missing context at the point of use, or no clear step for verification before results reach the clinician. Other issues start earlier with AI development mistakes, when definitions are inconsistent, labels are unreliable, or training data does not match real clinical conditions.

| AI-ready data for healthcare AI | Data that causes failures in production |

|---|---|

| Shared clinical meaning across systems: the same concept is represented the same way everywhere (for example, problem lists, meds, labs, allergies use consistent code sets, units, and naming rules). | Mixed definitions: “active medication” means one thing in the inpatient EHR and another in ambulatory; lab units differ by site; diagnoses are coded inconsistently. Models learn contradictions instead of care patterns. |

| A trustworthy patient timeline: events have reliable time fields that separate “when it happened” from “when it was documented” (orders, administrations, results, notes). | Broken chronology: gaps in encounters, overwritten fields, missing result times, or backfilled documentation. The model cannot reconstruct what clinicians actually knew at decision time. |

| Clinically plausible values with unit control: vitals and labs are validated for realistic ranges, correct units, and clean reference intervals (for example, mg/dL vs mmol/L issues are caught). | Unvalidated noise: outliers, typos, wrong units, duplicated results, device glitches, or copy-forward errors get treated as real signals. This often produces false alerts, missed risks, or confusing summaries. |

| Provenance that stands up to audit: every data element can be traced to its source system, extraction time, transformation steps, and version (so errors can be investigated and corrected). | Unknown origins: data is merged through ad hoc scripts with no record of changes. When the model misfires, nobody can tell which feed or transformation introduced the problem. |

| Access rules match clinical reality: permissions reflect HIPAA minimum-necessary, consent, and role-based needs (clinician vs coder vs call center). Sensitive fields are segmented properly. | Risky exposure or unclear ownership: protected health information is mixed into places it should not be; ownership is ambiguous; teams avoid using the data, or the AI tool must refuse requests to stay safe. |

| Coverage across patient groups and care settings: training data represents different ages, genders, races, languages, payer types, and sites of care (including edge cases, not only “typical” visits). | Skewed samples: the dataset overrepresents one hospital, one demographic, or one workflow. The model works in one environment and degrades sharply when moved to a new clinic or population. |

| Outcome and label integrity: “ground truth” is defined carefully (for example, what counts as sepsis, readmission, deterioration) and is consistent across sites, time periods, and documentation styles. | Shaky ground truth: labels are based on billing codes alone, inconsistent clinician documentation, or shifting policies. The model learns documentation habits, not clinical reality. |

| Integration-ready structure for downstream use: data is shaped to support retrieval and summarization (for example, problem-med-lab links, encounter grouping, note sections, and cross-references). | Flat, disconnected data: notes, orders, results, and meds exist as separate piles with weak linking. The AI can “see” facts but cannot connect them into a coherent patient story. |

What “AI-ready” means in practice for healthcare teams

AI-ready data is not “perfect data.” It is data that is consistent enough to support safe inference, traceable enough to debug, and representative enough to generalize across sites and patient groups. When those conditions hold, you see fewer refusals, fewer contradictory summaries, and fewer “looks right in demo, breaks in clinic” moments.

Interoperability Gaps: When Connected Systems Decide whether AI Works

If you want to avoid failures of AI in healthcare operations, you cannot treat an AI assistant as a layer that sits “on top” of the EHR and magically sees the full patient story.

In real care delivery, context lives across many systems, for example:

- the EHR/EMR systems (including custom EHR systems)

- lab information systems

- imaging and radiology platforms

- pharmacy and medication feeds

- scheduling and registration tools

- payer portals and eligibility systems

- referral networks and care coordination platforms

- device and remote monitoring data.

When those systems do not speak the same language, the AI ends up reasoning over partial truth. That is a straight path to refusals, contradictions, and errors that users interpret as “the model is bad,” even when the deeper cause is disconnected data.

Interoperability is also where safety gets real. The AI can only be as reliable as the joins between sources: patient identity matching, encounter linking, timestamps, code mappings, and medication reconciliation across settings. Break any of those links, and the tool may omit a critical lab, misread a medication change, or summarize an outdated note as current.

So, if you are asking how to prevent healthcare AI failures, start by treating interoperability as a clinical safety requirement, not a back-office integration task.

What good interoperability looks like before you scale AI

- One patient, one identity across systems

Use strong patient matching rules and governance so lab results, imaging, outside encounters, and pharmacy data attach to the correct chart. Without this, even a well-trained model can produce confident summaries about the wrong person. - A coherent clinical timeline

Align event times across systems (ordered vs performed vs resulted vs documented). Many AI mistakes come from timeline confusion, especially when delayed documentation or backfilled notes look “new” to the model. - Shared definitions and code consistency

Standardize clinical concepts across tools: what counts as an active medication, an abnormal lab, a resolved condition, or an encounter type. Map local codes to common standards and keep units consistent. This is a practical way to prevent AI mistakes in healthcare without changing the model at all. - Reliable cross-links between data types

Make sure orders tie to results, results tie to the encounter, and notes reference the right episode of care. AI systems struggle when data arrives as isolated fragments rather than a connected patient narrative. - Controlled data access and auditability

Interoperability must respect role-based access and consent. The AI needs enough context to be useful, but with boundaries that prevent PHI leakage and support audit trails when something looks wrong.

How to operationalize this in an AI rollout

You can reuse the same “go-live discipline” you would apply to clinical software, but aim it at integrations:

- Validate integration outputs, not just model outputs

Test whether the AI sees the same meds, labs, and problems that clinicians see. Most production failures trace back to “missing inputs,” not “bad reasoning.” - Stage rollout by data maturity

Start in clinics where feeds are cleanest and most consistent, then expand as integrations stabilize. This reduces early frustration and improves adoption. - Monitor interoperability breakpoints continuously

Track missing fields, failed joins, sudden drops in outside records, spikes in refusals, and code mapping drift. When those signals shift, the AI will degrade quickly unless you catch it.

The short version: interoperability is not a technical detail you “finish later.” It is one of the main mechanisms behind whether you can avoid failures of AI in healthcare operations, and it is often the fastest lever for teams trying to prevent AI mistakes in healthcare without rebuilding the entire AI stack.

Final Word

Healthcare AI rarely fails in a dramatic, obvious way. It fails in small, operational moments: a missing lab result, a medication timeline that does not line up, a fabricated citation, a chatbot that confidently expands on a false premise, or a safety refusal that appears just when the team needs help most.

Across the examples and patterns in this article, the common denominator is clear: many AI breakdowns trace back to inputs that do not reflect real clinical variability, fragmented systems that hide context, and rollout practices that skip validation under real workflow pressure. When data is made AI-ready, interoperability is treated as a safety requirement, and deployment is staged with monitoring and human verification, the same models that “felt unreliable” can start behaving like dependable clinical support tools.

TATEEDA can help you build healthcare AI solutions that hold up in real operations, not just in demos. We work with U.S. healthcare organizations to design and deliver AI systems with the right foundations: clean and traceable clinical data flows, EHR and third-party integrations, workflow-aware guardrails, and verification loops that reduce risk while the system matures.

If your goal is to move from experimentation to practical value, we can help you implement AI in a way that lowers the odds of dangerous failures and keeps clinicians, admins, and patients on the safe side of automation.