Swarm AI in Healthcare: How IoT Hives Improve Clinical and Operational Decisions

In plain terms, what is swarm AI? It is a setup where many small, task-specific swarm AI agents work together instead of one big model trying to handle everything from a single server.

In swarm computing in healthcare, those agents sit close to the data coming from connected IoT devices, so they can react quickly when a bedside monitor changes, a lab feed arrives late, or a home sensor flags a trend.

This is the idea behind swarm intelligence in custom healthcare software development: lots of small, local decisions that combine into a clearer picture, rather than a single “central brain” that can stall or miss context. An IoT-swarm hive architecture in healthcare uses local “hives” (edge gateways or unit servers) to collect, clean, and time-align signals, which is why an IoT swarm architecture for hospitals supports low-latency decisions and more graceful handling of gaps. The same approach to custom AI app development powers swarm-based patient monitoring at home and hospital robot fleet coordination, where safe handoffs matter more than one perfect model.

Hospitals in the United States are turning into dense “device neighborhoods”: many units run with 10 to 15 connected devices per bed, while remote care keeps expanding in parallel. Over five years, the share of hospitals offering remote patient monitoring climbed from 33.0% to 46.3%. In Medicare, that growth translated into large spending, with payments exceeding $500 million in 2024.

Inside facilities, coordination is shifting to “many nodes, one goal.” One widely reported hospital support robot has completed 1.25 million deliveries across more than 25 U.S. hospitals, pushing teams to think in fleets and handoffs rather than one centralized control point.

This is why swarm computing in healthcare is appearing on roadmaps. An IoT swarm architecture for hospitals can keep routine decisions close to where data is produced and escalate only what requires human attention. Care teams need low-latency decisions near the bedside, and they need graceful failure when data is late, partial, or split across tools (see also: healthcare AI failure examples).

Swarm intelligence in healthcare, one of the most important AI trends in US healthcare, addresses that by distributing decisions across many small, local steps. The same pattern supports swarm-based patient monitoring at home and makes hospital robot fleet coordination less brittle than a single “central brain” that stalls when one feed goes dark.


Why TATEEDA can speak credibly about swarm AI and IoT in healthcare

Most conversations about medical AI sound like a product launch: faster automation, shiny copilots, the latest model upgrade. In real hospitals, the pressure point is different. The question isn’t “can it generate an answer?”, it’s whether the system can keep its footing when…

- signals arrive late

- devices disagree

- workflows interrupt each other…

…and a human still has to act fast.

“Swarm computing in healthcare forces you to be honest about reality: data is produced in dozens of places at once, and no single system sees the full story. If you don’t engineer the handoffs, identity matching, and safety gates from day one, a confident mistake can ripple across devices and teams, and that’s how small technical gaps turn into clinical risk.”

Slava K., CEO at TATEEDA

That is the lens we bring to IoT-swarm hive architecture in healthcare: how to build many small decision points that cooperate, verify, and escalate cleanly instead of guessing. When organizations deploy swarm AI agents without disciplined data governance and interoperability, problems don’t show up as a dramatic outage. They show up as quiet failure modes: missed context, contradictory summaries, alerts that fire too late, or automation bias where staff trust the system because it looks “consistent,” not because it is correct.

TATEEDA is a custom software development company headquartered in San Diego, California. Since 2013, we’ve built and integrated software for U.S. providers, payers, and healthtech firms in the operational core of healthcare: EHR-connected workflows, scheduling and intake, billing and revenue cycle, staffing operations, and device-connected data pipelines. Across projects, including work with AYA Healthcare and Abbott as a software development partner, we’ve seen how real deployments behave when they leave the lab.

That experience shapes how we design swarm systems. We focus on the pieces that decide whether swarm intelligence in healthcare actually works: reliable interoperability (HL7 v2, FHIR, DICOM), clean patient identity resolution, controlled permissions, auditable event trails, and human verification loops that catch edge cases before they scale across the network. In short, we help teams build swarm-style AI that remains useful under clinical pressure, not just impressive in a demo.


What Does Swarm Computing Mean in Healthcare?

People use several labels for the same family of ideas, so it helps to sort them out. When someone asks “what is swarm AI?”, they usually mean a setup where many small decision-makers work together, each with a limited view, and their combined behavior is more useful than a single monolith.

In hospitals, those decision-makers can be software, devices, or robots, and they can live on local servers, on ward workstations, or directly on edge gateways.

Swarm AI agents are the software version of this: multiple task-focused agents that watch different signals and coordinate via simple rules. One agent may watch bedside vitals for sudden change; another checks whether a lab result arrived late; a third compares a medication administration record against the latest order.

None of them “knows everything,” but together they can assemble a safer, more complete picture for the clinician who stays accountable.

You will also hear about swarm learning in healthcare, which is a different but related concept. Swarm learning focuses on how models get trained: sites learn from their own data and exchange only model updates, so knowledge spreads without shipping raw patient records. That can be attractive in the U.S. when data-sharing agreements are slow, privacy constraints are tight, and you still want learning to reflect real care patterns.

“Swarm AI is useful in healthcare only when context is structured and traceable: each agent should attach sources, timestamps, and confidence so the system can state ‘this result is delayed’ instead of filling gaps. The advantage is controlled coordination…many small checks that reduce errors, surface uncertainty early, and keep clinicians accountable for final decisions.”

Slava K., CEO, TATEEDA

An IoT-swarm hive architecture in healthcare is best pictured as a “hub of hubs.” Each unit has a small edge hive that cleans, timestamps, and tags device feeds, then hands curated events to the agents that need them. A simple example: a nurse requests a medication-change summary; the hive pulls the right time windows from the eMAR, cross-checks them against orders and discharge notes, and asks an agent to draft a short, source-linked timeline.

If the lab feed is delayed, the system can say so clearly, instead of guessing. This is a practical multi-agent systems pattern: many small decision points, with humans in control. Over time, those hives can refine handoff rules safely.

| Application area | What the “swarm” is coordinating | Typical swarm nodes | Why it matters in real operations |

|---|---|---|---|

| Emergency department flow control | Micro-decisions around queueing, rooming, handoffs, and “who needs what next” | triage station, bed board, lab queue, radiology queue, transport, staffing board | Keeps throughput stable when arrivals spike, and reduces the domino effect of one delayed lab or imaging slot. |

| OR and procedure suite orchestration | Timing, turnover, instrument readiness, transport, and schedule recovery | OR scheduler, sterile processing, anesthesia readiness, patient transport, PACU, equipment tracking | Prevents small delays from turning into a full-day schedule collapse by coordinating many tiny constraints early. |

| Hospital-wide staffing load balancing | Matching workload to staff capacity across units in near real time | nurse call system, acuity scoring inputs, admission/discharge feed, staffing roster, float pool dispatch | Helps charge nurses and ops teams redeploy support before burnout shows up as missed tasks and overtime spirals. |

| Medication and formulary resilience | Detecting stock risk, substitution options, and “last safe moment” alerts | pharmacy inventory, ADC cabinets, e-prescribe feed, wholesaler ETA, unit-level usage trends | Cuts the odds of a late surprise when a med is unavailable, and keeps substitutions consistent with policy. |

| Infection prevention and bed hygiene routing | Coordinating cleaning priorities, isolation flags, and room readiness | EVS dispatch, bed management, isolation status, lab microbiology flags, PPE inventory | Speeds safe room turnover without guessing, especially when isolation status changes mid-day. |

| Radiology throughput and protocol alignment | Routing studies, prioritizing reads, and aligning protocols with patient constraints | modality worklists, contrast screening, patient prep status, radiologist queue, transport | Reduces “start-stop” imaging days by keeping prep, transport, and modality schedules in sync. |

| Revenue cycle triage and denial prevention | Distributing small checks that prevent big billing errors later | eligibility checks, prior auth status, coding queue, documentation completeness, payer portal signals | Catches gaps early, so teams do fewer reworks and fewer claims stall due to missing fields or mismatched codes. |

The IoT-Swarm Hive Architecture

Picture a step-down unit at 2 a.m.: a vitals monitor beeps, an infusion pump logs a rate change, a nurse scans a med, and a lab result arrives late because the interface queue is backed up. If you push all of that into one distant “brain,” you get delay, missing context, and brittle decisions. The point of swarm computing in healthcare is to keep small decisions close to where signals are born, then promote only the parts that deserve wider coordination.

A practical IoT-swarm hive architecture in healthcare usually stacks four layers that behave like a relay team:

- Device nodes: bedside monitors, pumps, wearables, nurse call, mobile scanners (they produce raw signals and timestamps).

- Local edge hive: a near-bed or near-unit gateway (it cleans, time-aligns, de-duplicates, and tags events so they stop contradicting each other).

- Integration layer: connectors to EHR systems, the lab system, imaging, pharmacy, scheduling, and identity services (it resolves “who is this patient” and “which encounter is this,” then packages context).

- Cloud control plane: policy, model registry, fleet management, audit logs, and updates (it governs versions and keeps the whole network consistent across sites).
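
To make the edge hive's job more concrete, here is a minimal sketch of the "clean, time-align, de-duplicate, tag" step. The `DeviceEvent` fields, the per-device clock offsets, and the unit tag are illustrative assumptions, not part of any specific gateway product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceEvent:
    device_id: str  # hypothetical ID, e.g. "mon-1"
    kind: str       # signal type, e.g. "spo2" or "pump_rate"
    value: float
    ts: float       # device-reported time, seconds since epoch


def normalize(events, clock_offsets, unit_tag):
    """Time-align, de-duplicate, and tag raw device events."""
    seen = set()
    cleaned = []
    for ev in events:
        # Shift each device clock by its known offset (assumed to be measured elsewhere).
        aligned_ts = ev.ts + clock_offsets.get(ev.device_id, 0.0)
        # An identical reading from the same device that rounds to the same second is a re-send.
        key = (ev.device_id, ev.kind, ev.value, round(aligned_ts))
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({
            "device_id": ev.device_id,
            "kind": ev.kind,
            "value": ev.value,
            "ts": aligned_ts,
            "unit": unit_tag,
        })
    # Return one timeline ordered by aligned time.
    return sorted(cleaned, key=lambda e: e["ts"])
```

A real hive would likely need smarter de-duplication than "same value in the same second," but the shape is the point: downstream agents receive one ordered, tagged timeline instead of raw, contradictory feeds.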

This is where swarm AI agents become useful. One agent watches for device drift, another builds a short medication-change timeline, a third checks whether a lab is missing or simply delayed. They do not need full charts in one place; they need curated, traceable context bundles.

A clean message flow looks like this: device event → edge hive normalizes and tags it → integration layer attaches encounter and order context → an agent produces a draft summary with source pointers → the user sees it inside the workflow → the system writes back only permitted artifacts (for example, a draft note section, a task, or a flagged discrepancy).

That last step matters because an IoT swarm architecture for hospitals should degrade gracefully: if context is incomplete, it should say “source missing” and narrow the output, not guess.
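
One way to picture "say source missing and narrow the output" is a summary builder that only emits lines it can point back to. This is a sketch under stated assumptions: the bundle layout (`orders`, `emar`, `labs`, each a list of items with `id` and `text`) is hypothetical.

```python
def build_summary(bundle, required=("orders", "emar", "labs")):
    """Draft a summary from the sources that are present; never fill gaps."""
    lines = []
    missing = []
    for source in required:
        items = bundle.get(source)
        if not items:
            # Record the gap instead of inventing content for it.
            missing.append(source)
            continue
        for item in items:
            # Every line carries a pointer back to its source record.
            lines.append(f"{item['text']} [source: {source}/{item['id']}]")
    for source in missing:
        lines.append(f"source missing: {source}")
    status = "complete" if not missing else "partial"
    return {"status": status, "lines": lines, "missing": missing}
```

The `status` field lets the user interface show a partial draft as partial, so a missing lab feed narrows the output rather than silently changing it.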

| Layer | What it contributes | Failure modes to watch |

|---|---|---|

| Device nodes | raw signals and status | gaps, noisy readings, misleading timestamps |

| Local edge hive | cleanup, alignment, tagging | duplicated events, conflicting timelines, false alarms |

| Integration layer | identity, encounter links, system-to-system context | “wrong patient” risk, missing labs, broken handoffs |

| Cloud control plane | policy, audit, version control | inconsistent behavior across sites, untraceable outputs |

Clinical Care Use Cases: Swarm Learning and Cooperative AI

A single hospital can build a trendy healthcare agentic AI model that looks impressive in a demo, then watch it wobble the moment it meets a new unit, a new documentation style, or a different patient mix. That mismatch is one reason swarm computing in healthcare keeps popping up in serious architecture conversations: it treats learning and decision-making as a network behavior, not a one-site party trick.

What swarm learning looks like in healthcare 🧠🔁

When people ask what is swarm learning in healthcare, the clean explanation is this: models learn locally, and only learning signals travel. Patient records stay inside each organization; what moves between participants are controlled model updates, gradients, or parameters.

A realistic flow often looks like:

- Local training at each site (on-site data, on-site compute, on-site controls)

- Shared learning updates exchanged through a coordination service (no raw charts)

- Global refresh distributed back to sites (versioned, auditable, reversible)

That “local learning, shared intelligence” pattern is especially useful when data-sharing agreements are slow, or when teams want the benefits of multi-site learning without turning privacy and contracting into a year-long blocker.
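
The three-step flow above can be sketched with a toy model. This example uses federated-averaging-style aggregation through a coordinator, which is one common way to combine site updates and is our assumption here; swarm learning deployments may instead exchange updates peer to peer. The one-dimensional linear model and the function names are purely illustrative.

```python
def local_update(weights, site_data, lr=0.1):
    """One gradient step on y = w*x + b using only this site's data."""
    w, b = weights
    n = len(site_data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in site_data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in site_data) / n
    return [w - lr * grad_w, b - lr * grad_b]


def aggregate(updates):
    """Average site parameters, weighted by each site's sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(params[i] * n for params, n in updates) / total for i in range(dim)]


def training_round(global_weights, sites):
    """Local training at each site, then a shared refresh. Raw data never moves."""
    updates = [(local_update(global_weights, data), len(data)) for data in sites]
    return aggregate(updates)
```

Only the parameter lists cross site boundaries in `training_round`; the `(x, y)` pairs stay inside `local_update`. A production setup would add the versioning, audit, and rollback controls mentioned above.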

Cooperative AI in day-to-day care 📋✅

Swarm learning is about how models get trained. Cooperative AI is about how tools behave during work. Here, swarm AI agents act like task-focused teammates: each watches a narrow slice of the clinical picture, then hands off structured context instead of dumping a wall of text.

Think of it as swarm intelligence in healthcare expressed in small, practical moves:

- One agent watches for late-arriving labs and flags “delayed, not missing”

- Another assembles a medication-change timeline from orders plus administration events

- A third checks whether today’s encounter is incorrectly linked to an old problem list entry

None of these agents “knows everything.” Together, they reduce guesswork and reduce the odds of a confident-but-wrong summary.
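
As a rough illustration of "structured context instead of a wall of text," the sketch below runs two narrow agents over a shared context and collects their findings. The `Finding` shape, the context keys, and the rule that a lab acknowledgment separates "delayed" from "missing" are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    agent: str
    status: str
    detail: str
    sources: list = field(default_factory=list)


def lab_agent(ctx):
    """Tell 'delayed' (lab acknowledged the specimen) from 'missing' (no trace)."""
    findings = []
    for lab in ctx["labs"]:
        if lab.get("result_ts") is not None:
            continue  # already resulted
        if ctx["now"] - lab["ordered_ts"] <= lab["expected_s"]:
            continue  # still within the normal turnaround window
        status = "delayed" if lab.get("ack_ts") is not None else "missing"
        findings.append(Finding("lab_agent", status, lab["name"], [lab["id"]]))
    return findings


def med_timeline_agent(ctx):
    """Merge orders and administration events into one time-ordered list."""
    events = [("ordered", o) for o in ctx["orders"]]
    events += [("given", a) for a in ctx["admins"]]
    events.sort(key=lambda pair: pair[1]["ts"])
    detail = "; ".join(f"{e['ts']}: {label} {e['drug']}" for label, e in events)
    return [Finding("med_timeline_agent", "ok", detail, [e["id"] for _, e in events])]


def run_agents(ctx, agents):
    """Collect findings from every agent; each one sees only the shared context."""
    findings = []
    for agent in agents:
        findings.extend(agent(ctx))
    return findings
```

Because every `Finding` names its agent and its source IDs, a clinician (or another agent) can trace each statement back to the record it came from.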

Where this shows up first 🎯🚀

You tend to see cooperative learning and multi-agent coordination in areas where data variation is the norm:

- Imaging workflows: sites differ by scanner vendor, protocol, and labeling habits; swarm learning helps models generalize across that spread.

- Pathology: staining and slide prep vary; shared learning can reduce brittle pattern matching.

- Risk and deterioration models: one hospital’s population is not another’s; multi-site learning can reduce blind spots that look like “random” errors in production.

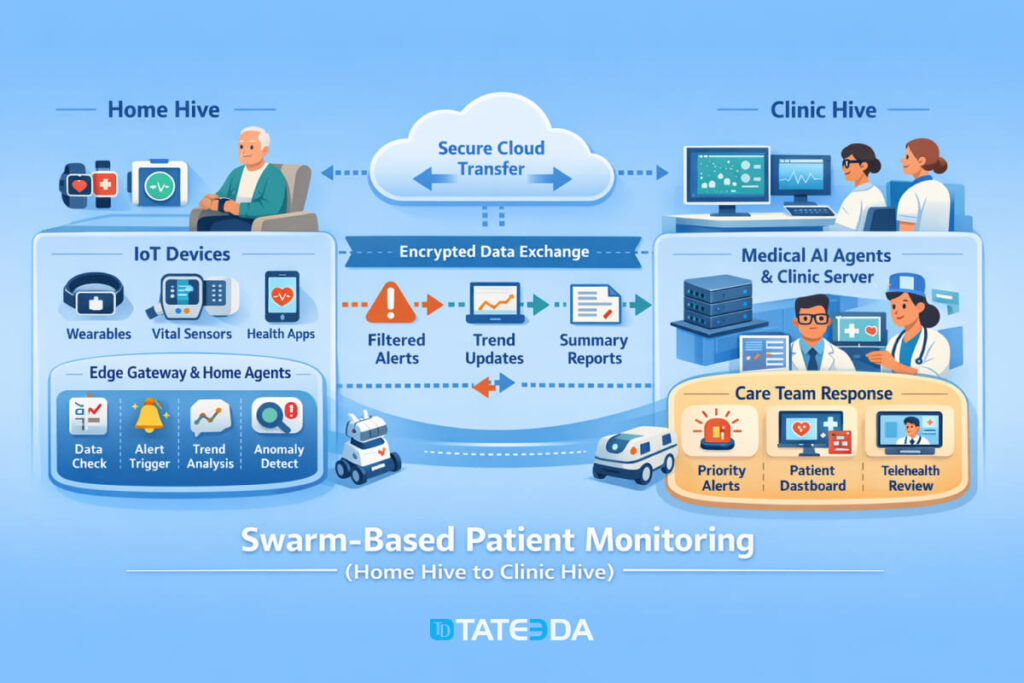

- Swarm-based patient monitoring: a home “mini hive” pre-validates signals (device on-body, timestamp sanity, missing context) before the clinic hive sees the alert.

Quick map of use cases 🗺️📌

| Use case | What stays local | What gets shared | What the clinician feels |

|---|---|---|---|

| imaging model improvement | studies, labels, protocols | model updates | fewer site-specific misses |

| pathology support | slide data, lab workflow signals | model updates | more consistent pattern detection |

| risk scoring | demographics, vitals, notes | model updates | fewer “surprise” false positives |

| remote monitoring triage | raw device streams | curated events + updates | fewer noisy alerts, clearer escalation |

Safety and responsibility, built in 🔒🩺

To keep this credible in clinical settings, cooperative learning needs clear gates:

- data boundaries (what never leaves the site)

- version control (which model produced which output)

- drift monitoring (when performance starts sliding)

- human verification paths (who reviews, when, and how errors get tagged back into improvement)

That’s the point: not “AI everywhere,” but AI that learns across reality…while still behaving like a tool clinicians can trust under pressure.

Hospital Operations Use Cases: Swarms that Move Things, Clean Spaces, and Reduce Friction

Hospitals already run like a choreography of handoffs—specimens, meds, linens, meals, equipment, waste, people. Swarm-style systems simply make that choreography less fragile by distributing “small decisions” to the edges, while keeping shared rules consistent across the building.

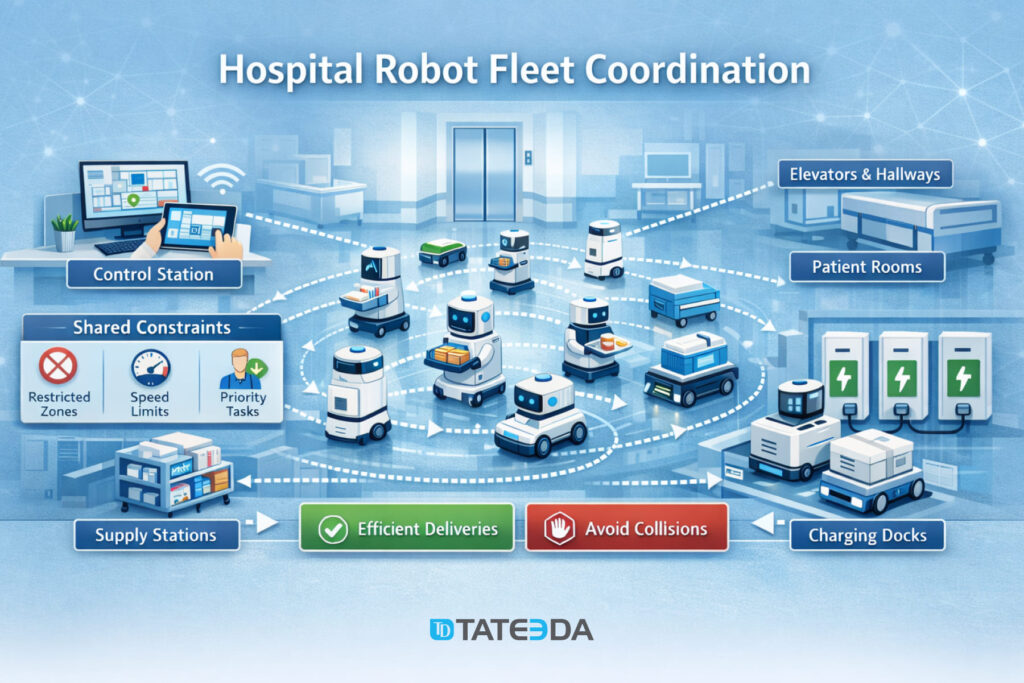

Robot fleets and coordinated logistics

A single delivery robot is a novelty. A fleet is operations engineering. In a swarm, each robot makes local choices (path, speed, pause, re-route) but obeys shared constraints (restricted zones, elevator etiquette, quiet hours, charger availability). The result is not sci-fi; it’s a calmer corridor.

Where the swarm logic actually pays off:

- Elevator and door handoffs: robots “negotiate” timing so you don’t get a traffic jam at the one elevator everyone needs right now.

- Priority routing: pharmacy-to-ward runs can preempt a low-priority pickup without stopping the whole system.

- Charger discipline: the fleet avoids the classic mistake of “everyone goes to charge at once,” which is the robot version of a coffee-break stampede.

- Exception handling: stuck wheel, blocked hallway, spills—one unit can fail without the fleet freezing.
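
Two of these behaviors, priority routing and charger discipline, are easy to sketch with a heap. The priority numbers, the 30% battery threshold, and the task names are illustrative assumptions, not settings from any real fleet manager.

```python
import heapq
import itertools


class DispatchQueue:
    """Lower number = more urgent; ties are served in arrival order."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()

    def add(self, priority, task):
        heapq.heappush(self._heap, (priority, next(self._seq), task))

    def next_task(self):
        return heapq.heappop(self._heap)[2] if self._heap else None


def assign_chargers(batteries, free_slots, threshold=30):
    """Send only the lowest-battery robots to charge, up to the free slots."""
    needy = [(level, robot) for robot, level in batteries.items() if level < threshold]
    return [robot for _, robot in heapq.nsmallest(free_slots, needy)]
```

An urgent pharmacy run added late still comes out of `next_task` first, and `assign_chargers` caps how many robots leave the floor at once, which is the "no coffee-break stampede" rule in code form.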

Environmental monitoring and safety workflows that behave like a swarm

The same swarm idea applies to sensors and micro-workflows that keep clinical spaces safe. Instead of relying on one central brain to “notice everything,” multiple nodes watch for specific signals and escalate only when thresholds, context, and timing make sense.

Examples that feel small until they prevent a big day:

- Cold-chain protection: medication fridges, blood storage, specimen transport carts—local sensors validate temperature excursions and attach timestamps and device IDs before alerts hit staff.

- Airflow and isolation integrity: differential pressure, door-open durations, filter status, and room occupancy can combine into a single “risk state” rather than five unrelated alarms.

- EVS workflow support: cleaning status becomes a trackable state machine (occupied → discharged → cleaning in progress → verified) instead of a phone-call relay.

- Safety surfaces: UV disinfection devices or monitoring tools can be scheduled around traffic patterns so you don’t “sanitize the hallway” at peak shift change.
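
The EVS example maps naturally onto a small state machine. The four states come from the list above; the `verified` → `occupied` transition that closes the loop, and the `Room` class itself, are our assumptions for the sketch.

```python
# Allowed transitions; anything else is rejected rather than silently accepted.
ALLOWED = {
    "occupied": {"discharged"},
    "discharged": {"cleaning_in_progress"},
    "cleaning_in_progress": {"verified"},
    "verified": {"occupied"},  # assumption: a verified room can be assigned again
}


class Room:
    def __init__(self, room_id, state="occupied"):
        self.room_id = room_id
        self.state = state
        self.history = [state]

    def transition(self, new_state):
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.room_id}: {self.state} -> {new_state} not allowed")
        self.state = new_state
        self.history.append(new_state)
```

Rejecting a jump such as `occupied` to `verified` is what turns room readiness into a trackable fact instead of something confirmed by phone.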

The punchline is practical: hospital operations don’t need one perfect system. They need many small systems that coordinate, degrade gracefully, and never turn a routine shift into a scavenger hunt.

Remote Monitoring Use Cases: the Home Hive for RPM and Chronic Care

Remote patient monitoring succeeds or fails on a simple truth: a home is not a clinic, and the data behaves accordingly. Cuffs are worn incorrectly, scales sit on carpet, batteries die, and Wi-Fi has the emotional stability of a toddler. A home hive solves this by adding local intelligence before anything reaches the care team.

Wearables plus home sensors plus local hub logic

Think of the home hive as a tiny “quality gate” that sits between devices and the clinic. It can run on a phone, a gateway, or both.

Typical home signals that benefit from swarm-style handling:

- Vitals: blood pressure cuffs, pulse oximeters, thermometers

- Chronic care devices: glucometers, CGMs, smart inhalers, connected weight scales

- Wearables: heart rate trends, sleep, activity, fall detection

- Context cues: medication reminders, symptom check-ins, simple questionnaires

Instead of shipping every raw reading upstream, the home hive can:

- validate ranges (“is this plausible?”),

- detect obvious artifacts (“was the cuff still inflating when the reading was captured?”),

- identify trends (“three days of upward weight drift in a heart failure pathway”),

- and package context (“reading taken after meds vs before meds”).

That last part matters. Clinicians don’t need more numbers; they need fewer surprises.
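
Here is a minimal sketch of two of those home-hive checks: range validation and a simple upward-drift rule. The plausibility ranges and the 1 kg over 3 days rule are placeholders chosen for illustration only; they are not clinical thresholds, and a real pathway would take them from clinical policy.

```python
# Placeholder plausibility ranges for illustration only, not clinical guidance.
PLAUSIBLE = {
    "systolic_mmhg": (50, 300),
    "spo2_pct": (50, 100),
    "weight_kg": (20, 400),
}


def label_reading(kind, value):
    """Label a reading instead of forwarding it with false precision."""
    low, high = PLAUSIBLE[kind]
    return "verified" if low <= value <= high else "needs repeat"


def upward_drift(daily_weights, days=3, min_gain_kg=1.0):
    """True if weight rose on each of the last `days` days by at least min_gain_kg in total."""
    recent = daily_weights[-(days + 1):]
    if len(recent) < days + 1:
        return False  # not enough history to call it a trend
    rising = all(later > earlier for earlier, later in zip(recent, recent[1:]))
    return rising and recent[-1] - recent[0] >= min_gain_kg
```

The hive would send upstream the label and the trend flag alongside the reading, so the clinic sees "needs repeat" or "three-day drift" rather than a bare number.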

What “good swarm behavior” looks like when connectivity is imperfect

A strong design assumes the network will fail—quietly, repeatedly, and at the worst time.

Good swarm behavior in the real world:

- Store-and-forward: readings queue locally with timestamps and sync when the connection returns.

- Confidence labels: the clinic sees “verified,” “suspect,” or “needs repeat,” not a false sense of precision.

- Graceful degradation: when a device feed drops, the system explains what’s missing instead of guessing.

- Patient-friendly retries: prompts are human (“try again after resting 5 minutes”) rather than technical (“payload rejected”).

- Escalation discipline: only events that cross thresholds, persist, and have supporting context create a task for staff.
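
Store-and-forward and escalation discipline can be sketched in a few lines. The `send` callable, the reading format, and the "N consecutive readings above threshold" rule are assumptions for illustration.

```python
from collections import deque


class StoreAndForward:
    """Queue readings locally and upload them in order when the link is up."""

    def __init__(self, send):
        self._send = send  # hypothetical uploader: returns True on success
        self._queue = deque()

    def record(self, reading):
        self._queue.append(reading)
        self.flush()

    def flush(self):
        while self._queue:
            if not self._send(self._queue[0]):
                break  # still offline: keep the reading for the next attempt
            self._queue.popleft()

    def pending(self):
        return len(self._queue)


def should_escalate(values, threshold, min_consecutive, has_context):
    """Escalate only if the latest readings all exceed the threshold and context exists."""
    recent = values[-min_consecutive:]
    persistent = len(recent) == min_consecutive and all(v > threshold for v in recent)
    return persistent and has_context
```

A reading is removed from the queue only after `send` confirms it, so a dropped connection delays data but does not lose or reorder it.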

Done right, the home hive reduces alert fatigue and keeps RPM from turning into an inbox punishment. It’s the difference between “remote monitoring” and “remote noise.”

Implementation Checklist: How to Keep Swarm Systems Safe and Usable

Swarm systems are powerful because they’re distributed. That also means they can fail in distributed ways: partial data, mismatched identity, silent permission gaps, and “why did it do that?” moments. The goal is to make your swarm predictable, auditable, and clinically boring—in the best way.

Practical checklist that prevents avoidable surprises

Use this as a build-and-review guide before scaling beyond pilots.

| Implementation area | What to put in place | Why it prevents failure |

|---|---|---|

| Interoperability | HL7 v2 interfaces for legacy feeds; FHIR APIs for modern workflows; DICOM for imaging and metadata | Stops “missing context” caused by stranded systems and incompatible formats |

| Identity matching | Enterprise MPI strategy, consistent identifiers, de-duplication rules | Prevents “wrong patient” joins—the most expensive bug you can ship |

| Permissions | Role-based access, least privilege, SMART on FHIR/OAuth where relevant | Avoids overexposure of PHI and reduces unsafe tool behavior |

| Audit trails | Who/what/when for every decision, transformation, and handoff | Makes investigations possible without guesswork or finger-pointing |

| Data provenance | Source tags, timestamps, correction markers, lineage | Helps the system explain uncertainty instead of hallucinating confidence |

| Monitoring | Health checks for every node, lag detection, alert volume baselines | Catches slow failures before staff discover them mid-shift |

| Change control | Versioned rules, feature flags, rollback paths | Lets you undo bad updates fast (because you will eventually need to) |
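
For the audit-trail row, one possible approach is an append-only log where each entry includes a hash of the previous one, so later edits become detectable. Hash chaining is our suggestion rather than a requirement stated above, and the field names are hypothetical; the sketch uses only Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit(log, actor, action, detail, ts):
    """Append a who/what/when entry linked to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "detail": detail, "ts": ts, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

This does not replace access controls or secure storage, but it gives investigators a cheap way to confirm that the trail they are reading is the trail that was written.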

Guardrails that keep the swarm clinically responsible

Swarm logic should ask for human judgment at the right moments, not pretend it replaced it.

Build in these safety behaviors:

- Human verification loops: clinical review for high-impact outputs; structured feedback to improve rules and prompts.

- Anomaly detection: flag outliers in data and outliers in model behavior (sudden spike in refusals, weird certainty, unexpected drift).

- Rollback modes: if a node becomes unreliable, fail closed (or fail safe) with clear messaging and minimal disruption.

- Failure containment: isolate a misbehaving agent or device feed instead of letting it contaminate downstream summaries.
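
Failure containment can be approximated with a circuit-breaker-style wrapper around each agent. This is a sketch under assumptions: the failure limit, the response shape, and the idea that isolation lasts until a human review are all illustrative.

```python
class AgentBreaker:
    """Isolate an agent after repeated failures instead of passing bad output on."""

    def __init__(self, max_failures=3):
        self.max_failures = max_failures
        self.failures = 0
        self.isolated = False

    def call(self, agent, payload):
        if self.isolated:
            # Fail closed with a clear message; the agent is not invoked at all.
            return {"status": "unavailable", "reason": "agent isolated pending review"}
        try:
            result = agent(payload)
        except Exception as exc:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.isolated = True
            return {"status": "unavailable", "reason": f"agent error: {exc}"}
        self.failures = 0  # a healthy call resets the consecutive-failure count
        return {"status": "ok", "result": result}
```

Downstream summaries receive an explicit "unavailable" marker they can display, which is safer than a stale or half-formed result from a struggling node.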

If your swarm can explain what it used, what it couldn’t access, and why it escalated, it earns trust. If it can’t, it becomes one more system that people work around—quietly, creatively, and immediately.

Final word: Build a Custom AI Swarm System for Healthcare

Swarm-style systems are showing up in healthcare for a simple reason: care delivery is already distributed. Data is produced at the bedside, in the home, in imaging suites, in the lab, and inside scheduling and billing tools—and if you try to force all of that into one central “brain,” you usually get latency, missing context, brittle integrations, and the kind of AI behavior that erodes trust fast.

The strongest pattern we’ve covered is also the least glamorous: treat data quality, interoperability, and clinical responsibility as first-class engineering work. When device feeds are validated at the edge, handoffs are traceable, and humans stay in the loop for high-impact decisions, “swarm” stops being a buzzword and becomes a safer way to scale automation.

If you’re exploring this direction, TATEEDA, as one of the top healthcare AI software companies, can help you plan, design, build, and test swarm-based healthcare AI systems end-to-end—from IoT-swarm hive architecture and integration patterns (HL7 v2, FHIR, DICOM) to multi-agent orchestration, guardrails, and verification workflows. We focus on the unglamorous parts that prevent failures: reliable identity matching, permissions, audit trails, rollback modes, and realistic validation in the messy conditions of real clinical operations—so your system behaves like a trustworthy assistant, not another tool staff learn to avoid.