Vibe Coding vs. Professional Engineering: Is AI Making Development Services Obsolete?

In this article, we examine vibe coding trends through the lens of the real question behind the hype—whether AI is actually making development services obsolete, or simply changing what professional engineering is responsible for.

It also clarifies what vibe coding means in commercial and healthcare contexts, where architecture, verification, and compliance disciplines decide whether AI speed becomes a durable advantage or an expensive rollback.

In 2025, the vibe coding trend stopped being a niche developer meme and turned into a boardroom topic. Major tech media framed it as a step-change in how software gets built, and Collins Dictionary even named “vibe coding” its 2025 Word of the Year. That kind of cultural validation matters: it signals that AI-assisted building is no longer a curiosity—it’s becoming a default expectation in product discussions, especially when speed-to-market is tied to competitive advantage.

If you’re asking what vibe coding is in software development, think of it as building by intent first: describe outcomes, let an AI draft the implementation, and iterate until it works. In software engineering terms, it is a workflow shift from “writing code” to “directing code generation, validation, and refactoring.” A modern vibe coding tool can scaffold features, wire integrations, generate tests, and propose refactors, often in minutes, while a human steers the constraints, architecture, and risk decisions that separate experiments from custom AI software development built for production.

What is vibe coding in 2026?

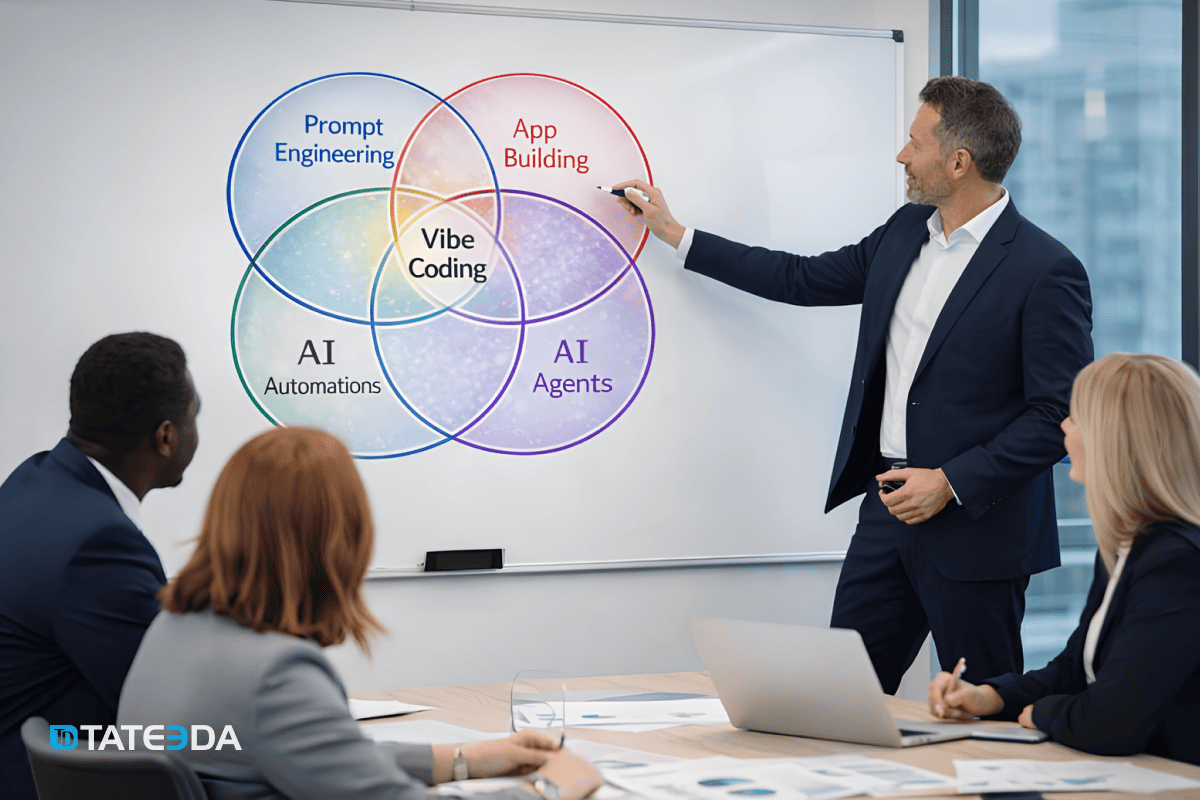

This Venn diagram offers a concise vibe coding definition: “vibe coding” sits at the intersection of…

- prompt engineering (expressing intent, constraints, and acceptance criteria in natural language)

- app building (turning outputs into real interfaces and services)

- AI automations (wiring workflows so software actually does work on a schedule or trigger)

- AI agents (delegating multi-step tasks to tool-using systems that can plan, call APIs, and iterate).

In other words, what does vibe coding mean in practice? It means software creation shifts from hand-authoring every line to orchestrating generation plus validation—where prompts become a controllable spec layer, and the developer’s job becomes direction, verification, and system shaping.
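
As an illustration of prompts acting as a spec layer, the sketch below keeps intent, constraints, and acceptance criteria in a small structure and renders the prompt text from it. It is a minimal example under stated assumptions: the `PromptSpec` class, its field names, and the rendered layout are hypothetical and do not correspond to any particular tool's format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Hypothetical structure that treats a prompt as a reviewable spec."""
    goal: str
    constraints: List[str] = field(default_factory=list)
    acceptance_criteria: List[str] = field(default_factory=list)
    out_of_scope: List[str] = field(default_factory=list)

    def render(self) -> str:
        # A spec with no acceptance criteria gives reviewers nothing to verify,
        # so this sketch refuses to render it.
        if not self.acceptance_criteria:
            raise ValueError("a spec without acceptance criteria cannot be verified")
        sections = [
            ("Goal", [self.goal]),
            ("Constraints", self.constraints),
            ("Acceptance criteria", self.acceptance_criteria),
            ("Out of scope", self.out_of_scope),
        ]
        lines: List[str] = []
        for title, items in sections:
            if not items:
                continue  # skip empty sections instead of printing bare headers
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Illustrative values only; the scenario is made up for this example.
spec = PromptSpec(
    goal="Add a patient intake form endpoint",
    constraints=["No PHI in logs", "Validate every input field"],
    acceptance_criteria=["Rejects a missing date of birth with HTTP 422"],
)
prompt_text = spec.render()
```

Because the spec is plain data, it can live in version control and be reviewed like any other change, which is the sense in which the prompt becomes controllable.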

The key insight in the overlap is that none of these circles alone equals “vibe coding.” Prompting without delivery produces demos; app building without AI becomes slower; automations without guardrails become fragile; agents without product engineering become unpredictable. The “vibe coding” center implies a workflow where a team can ideate, scaffold, integrate, and operationalize quickly—producing vibe coding apps such as internal dashboards, lightweight workflow tools, prototype patient intake flows, analytics assistants, or integration microservices—while still requiring engineering discipline to keep architecture coherent, interfaces stable, and behavior testable as complexity rises.


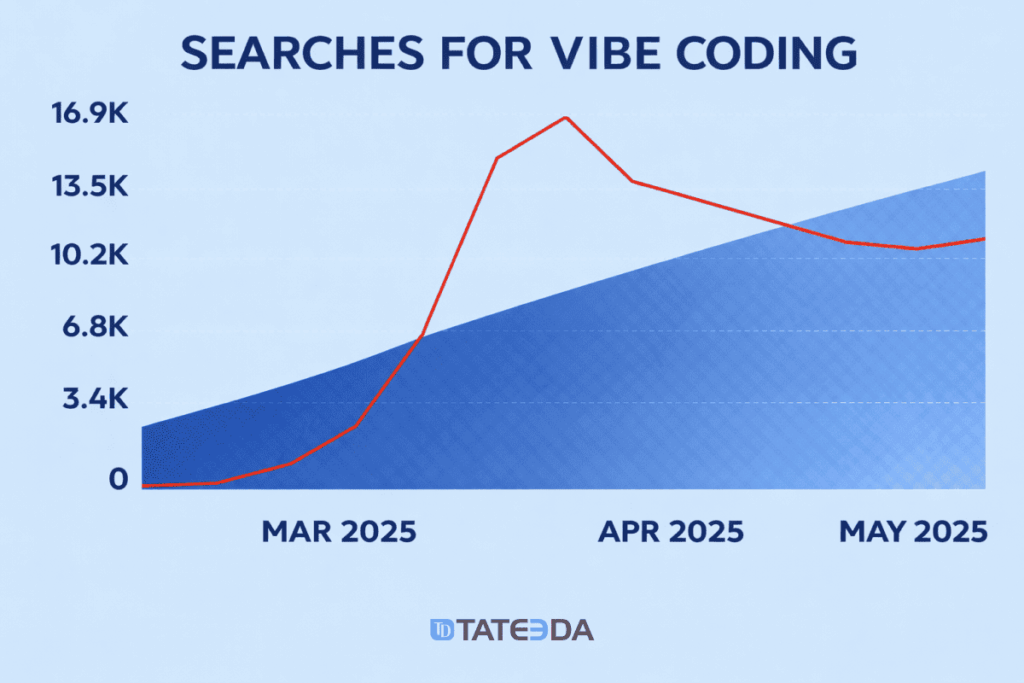

From Search Spike to Enterprise Standard: Vibe Coding Market in 2025

The adoption curve in 2025 was steep. Searches for “vibe coding” reportedly jumped 6,700% in spring 2025 (Exploding Topics), which mirrors what many CTOs saw internally: teams experimenting, then standardizing. By late 2025, surveys indicated 84% of developers were already using or planning to use AI coding tools, and 51% were using them daily. Some estimates put the share of AI-generated code at roughly 41% of all code written in 2025, which points to AI acting less as assistive autocomplete and more as a co-producer.

Enterprises moved fast, too. Reports put AI adoption at 78% of enterprises in 2025, and the motivation cited is clear: productivity gains of 25–50% on well-bounded work. Meanwhile, McKinsey’s State of AI 2025 survey found 62% of organizations experimenting with AI agents, pushing vibe coding beyond snippets into multi-step execution. By the end of 2025, top engineering teams were reporting 85–90% daily usage rates, meaning AI assistants had become part of the operating system of software delivery, not a side experiment.

For health tech and other regulated sectors, the conversation quickly turns to the limitations of vibe coding. The headline risk isn’t that AI can’t produce code; it’s that it can produce plausible code faster than teams can validate it without a disciplined engineering process. This is where the vibe coding concept becomes strategically important: the winners will treat it as an accelerator for experienced teams (architecture, testing, security review, compliance), not a shortcut around those fundamentals.

And that is the practical dividing line in 2025’s vibe coding trends: rapid creation is getting commoditized, while professional engineering judgment is becoming more valuable, not less.

This table summarizes a few key indicators of vibe coding’s industry-wide adoption in 2025:

| 2025 Adoption Metric | Statistic |
|---|---|
| Search interest growth (Q1–Q2 2025) | “Vibe coding” searches reportedly up 6,700% in 3 months. |
| Developers using AI coding tools | 84% of developers use or plan to use them; 51% use them daily. |
| Portion of code generated by AI | An estimated ~41% of all code written in 2025 was AI-generated. |
| Enterprise AI adoption (overall) | 78% of enterprises reportedly use AI. |
| Orgs experimenting with AI “agents” | 62% of organizations are experimenting with AI agents. |
| Y Combinator startups using AI code | ~25% of YC companies use AI-generated code. |

Why Vibe Coding Feels Polarizing: Skill-Dependent Outcomes and the TCO Curve

The sharp divide in opinions about vibe coding is largely explained by variance. LLM-assisted development does not deliver a uniform uplift; it produces a wide spread of outcomes that depend on baseline fluency in code, the ability to validate results, and how much of the work is prototyping versus long-lived system design. In that sense, the debate around the vibe coding method often conflates two different questions: “Can an LLM generate runnable code?” (often yes) and “Can that code be trusted, maintained, and evolved efficiently?” (it depends on who is steering and verifying it).

In practice, the pattern often looks like this:

- Near-zero coding fluency: LLM output can be hard to operationalize because there is insufficient ability to run, inspect, and troubleshoot the result; the bottleneck shifts to verification rather than generation.

- Early-stage fluency: The floor rises meaningfully—templates, examples, and scaffolds appear faster, making small utilities and prototypes more attainable.

- Mid-level experience: The workflow can degrade into “prompt-driven patching,” where debugging and integration efforts outgrow the initial speed gains; this is where teams feel the friction most acutely in vibe coding versus traditional coding.

- Senior expertise: Value increases again because experienced engineers set constraints, detect fragile logic, impose architecture, and demand tests; the model becomes a throughput accelerator rather than a decision-maker.

A useful additional lens is decision latency: vibe coding compresses implementation time but can defer key engineering decisions (interfaces, invariants, failure modes, security boundaries). Traditional workflows force many of those decisions earlier through design reviews, explicit modeling, and structured testing. The most productive teams in 2025 tended to treat LLMs as high-speed draft generators inside a disciplined pipeline—strong specs, tight feedback loops, automated checks, and human accountability—so the upside compounds without turning “more code” into “more uncertainty.”

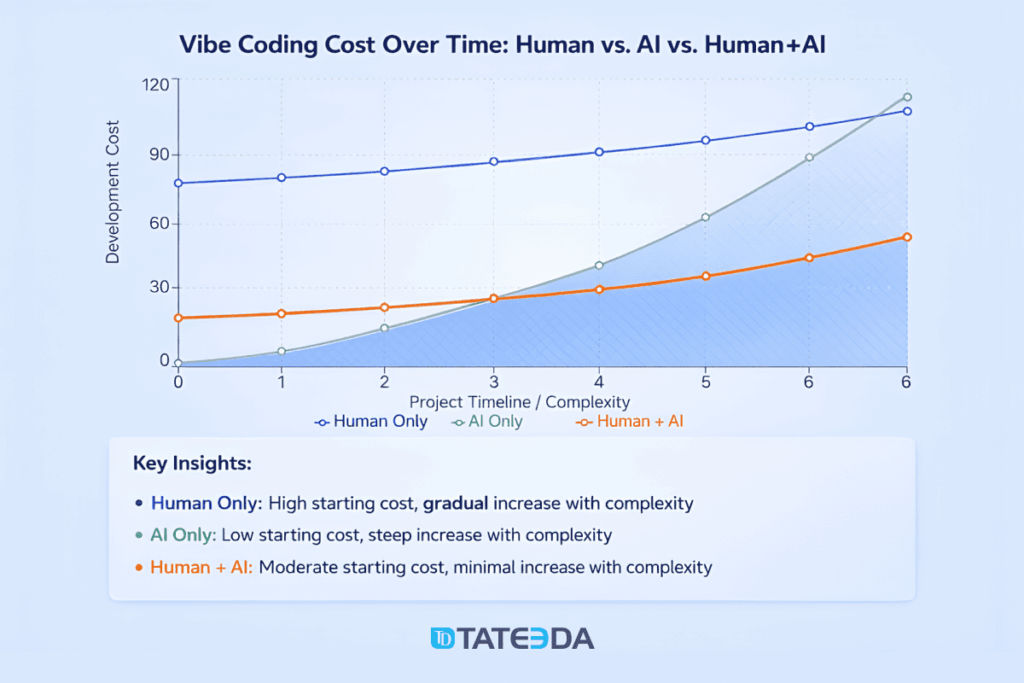

The figure behaves like a small economics lecture disguised as a line chart: cost accumulates differently depending on who (or what) is doing the generating, the checking, the stitching, the owning. “AI only” rides low at the beginning—scaffolds appear, endpoints materialize, screens light up—then the curve bends hard as the project acquires mass: integration drag, ambiguous intent baked into code paths, security patching after the fact, tests arriving late and thin, refactors turning from optional to unavoidable.

“Human only” enters with a steeper opening price because the early spend goes into architecture, review cadence, quality gates, and operational hygiene, so later growth looks steadier, almost boring in a good way. The “Human + AI” trace is the operational sweet spot the chart argues for: fast draft-generation plus human constraint—design discipline, refactor pressure, verification loops, and governance—so the marginal cost stays flatter while complexity rises.
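
The shape of those three curves can be reproduced with a toy model. The sketch below is illustrative only: the `CostModel` class and every coefficient in it are made-up assumptions chosen to mimic the chart's qualitative shape, not figures taken from the chart or from any measured project.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CostModel:
    """Toy cumulative-cost model; every coefficient is an illustrative assumption."""
    upfront: float        # spend before the first feature (architecture, quality gates)
    per_feature: float    # base cost of adding one feature
    rework_growth: float  # extra cost per feature for each feature already in the system

    def cumulative_cost(self, features: int) -> float:
        # Feature k (0-indexed) costs per_feature + rework_growth * k,
        # so the total over n features is a quadratic in n.
        n = features
        return self.upfront + self.per_feature * n + self.rework_growth * n * (n - 1) / 2


# Hypothetical coefficients, in arbitrary cost units.
AI_ONLY = CostModel(upfront=0, per_feature=1, rework_growth=0.5)
HUMAN_ONLY = CostModel(upfront=40, per_feature=4, rework_growth=0.05)
HUMAN_PLUS_AI = CostModel(upfront=20, per_feature=2, rework_growth=0.05)


def crossover(a: CostModel, b: CostModel, max_features: int = 1000) -> Optional[int]:
    """Smallest feature count at which `a` costs more than `b`, or None if it never does."""
    for n in range(1, max_features + 1):
        if a.cumulative_cost(n) > b.cumulative_cost(n):
            return n
    return None
```

With these made-up coefficients, `crossover(AI_ONLY, HUMAN_PLUS_AI)` returns 13 and `crossover(AI_ONLY, HUMAN_ONLY)` returns 23. The specific numbers mean nothing; the point is that a low entry cost combined with fast-growing rework eventually overtakes a higher entry cost with slow-growing rework.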

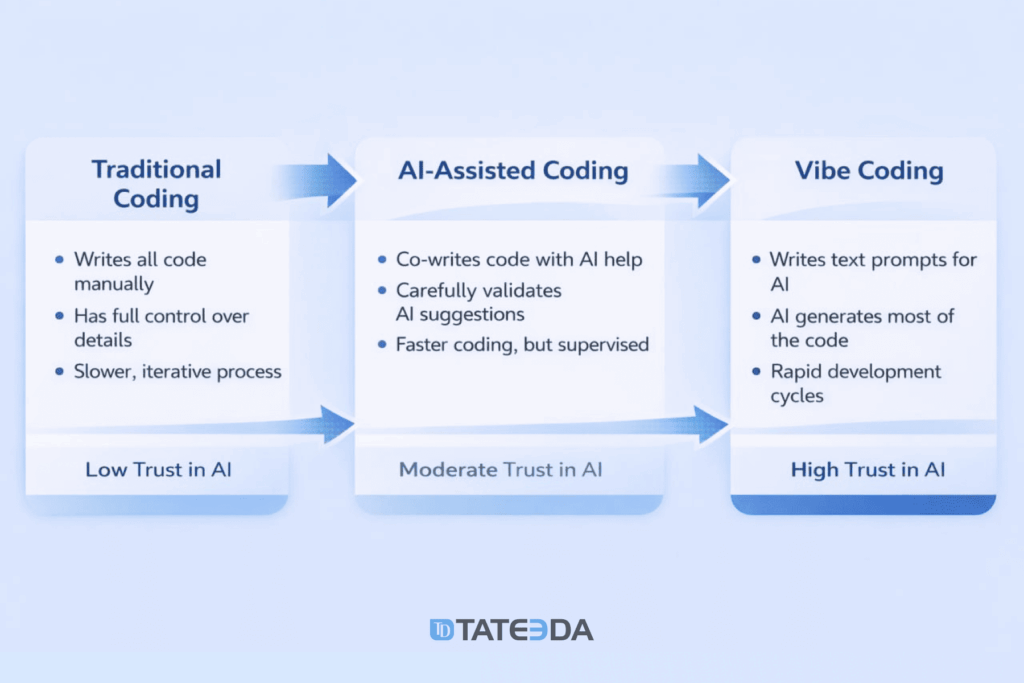

Vibe Coding vs. Traditional Coding: Where Speed Ends, and Real Engineering Begins

A practical vibe coding definition starts with intent-first building: natural-language prompts drive rapid scaffolding, the model drafts implementation, and iteration replaces a lot of hand-authored boilerplate. That vibe coding method can feel like a step-function change in throughput—especially for prototypes, internal tools, and early proofs of concept—because the “first working version” arrives faster than most teams are used to. The vibe coding pros and cons become visible almost immediately: speed and low friction upfront, followed by growing verification load, architectural drift, and rework if guardrails are absent.

However, once a project crosses into production gravity (real users, real integrations, real data, real uptime), the center of the discussion shifts to the limitations of vibe coding. The differentiator is not whether an AI can generate code; it is whether the work has been engineered into something operable: clear system boundaries, predictable failure modes, test coverage that actually catches regressions, secure data handling, and observability strong enough to diagnose issues under load.

The comparison below clarifies how “vibe coding” differs from “vibe engineering” across the dimensions that determine whether AI-assisted development becomes a durable advantage or a delayed cost spike.

| Dimension | Vibe coding (casual AI-first) | Vibe engineering (professional AI-assisted delivery) |
|---|---|---|
| Primary objective | Get something working immediately; prove the idea. | Deliver a product that holds up under real load, real users, and real risk; make it viable to operate and monetize. |
| Prompting style | Short, informal prompts (“build X”). | Structured instructions: roles, constraints, security rules, data boundaries, non-functional requirements, background jobs, and success criteria. |
| Planning artifacts | Often ad hoc; limited specs. | Lightweight but explicit specs: user flows, acceptance criteria, edge cases, and integration contracts. |
| Technical architecture skills | Optional in the short term. | Expected: system design, APIs, third-party services, deployment patterns, and integration tradeoffs. |
| Tooling awareness | Basic use of an assistant/editor is enough. | Broader toolchain literacy: CI/CD, DevTools, environments, secrets, observability, policy checks; often includes agent tooling and orchestration (e.g., MCP-style tool calling). |
| Coding literacy | Can be minimal; heavy reliance on generated output. | Sufficient to challenge output: logic, data flow, error handling, performance implications, and refactoring decisions. |
| Quality control | “It runs” becomes the acceptance bar. | Reviews, tests, static analysis, and reproducible builds; correctness is treated as an engineering property, not a lucky outcome. |
| Security posture | Risk is frequently implicit and missed (data exposure, injection issues, weak auth). | Security is part of the workflow: threat-aware design, input validation, least privilege, secure defaults, dependency hygiene. |
| Reliability & operations | Limited consideration for failure modes. | Operability built in: metrics, logs, alerts, runbooks, rate limits, retries, backoff, and graceful degradation. |
| Data discipline | Data handling is often an afterthought. | Clear data rules: classification, retention, access controls, auditability; suitable for sensitive workloads. |
| Typical risks | Hidden defects, brittle behavior, “works on the happy path,” accidental leaks, unowned code. | Higher upfront effort and coordination cost, but fewer late-stage fire drills and regressions. |
| Maintainability | Hard to extend; unclear intent; changes trigger cascades. | Designed for change: modularity, clear interfaces, documentation, and a predictable evolution path. |
| Best-fit scenarios | Demos, experiments, internal throwaways, early prototypes. | Production software, customer-facing systems, regulated domains, integrations, and long-lived codebases. |
| What it feels like | Fast iteration, novelty, lots of “paste-and-try.” | Flow design, context management, testing discipline, KPI definition, and ongoing monitoring. |
| Team collaboration | Often solo-driven; knowledge stays in prompts and local context. | Reviewable work products: PRs, ADRs, tickets, test plans; easier onboarding and handoffs. |
| Cost profile over time | Low entry cost; rework can spike sharply as complexity grows. | Moderate entry cost; flatter cost curve as scope and complexity increase. |
| Career impact | Can plateau if it never progresses beyond “prompt-to-output.” | Expands scope: building with AI while owning outcomes; increases leverage and responsibility. |
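
To make the “Reliability & operations” row concrete, the sketch below shows one common way to implement retries with exponential backoff and jitter around a flaky call. It is a minimal illustration, not a production client: `TransientError`, `call_with_retries`, and all default values are hypothetical names and numbers chosen for this example.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, temporary outages)."""


def call_with_retries(
    operation: Callable[[], T],
    *,
    max_attempts: int = 4,
    base_delay: float = 0.2,
    max_delay: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> T:
    """Run `operation`, retrying on TransientError with capped exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # retry budget exhausted: surface the failure to the caller
            # The delay ceiling doubles with each attempt up to max_delay;
            # multiplying by rng() spreads retries out (jitter) so that many
            # clients do not retry in lockstep.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(rng() * ceiling)
    raise AssertionError("unreachable")  # the loop always returns or raises
```

Only errors explicitly marked as transient are retried; anything else propagates immediately, which keeps genuine bugs from being hidden behind a retry loop. Passing `sleep` and `rng` as parameters is a design choice that lets the behavior be tested without real waiting.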

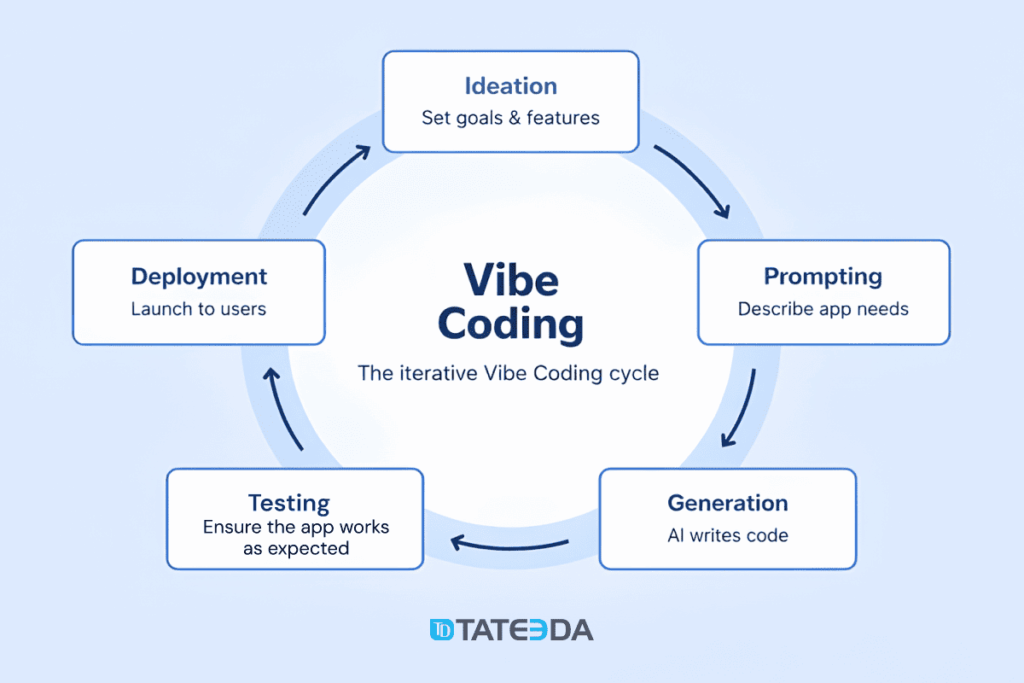

Vibe Coding as an Iterative Loop

Vibe coding rarely succeeds as a single “prompt → code” moment; it behaves more like a loop that tightens over time. An idea gets compressed into a minimal set of goals and features, prompts translate intent into constraints, generation produces a draft, testing exposes what the draft misunderstood, and deployment turns a local success into an operational artifact. That cycle is where velocity actually comes from: short feedback distances, fast correction, and repeated passes that steadily replace guesswork with verified behavior.

This is also where the vibe coding pros and cons become obvious. The upside is immediate: ideation-to-first-version time collapses, boilerplate stops consuming senior attention, and early prototypes become cheap experiments. The downside emerges when the loop skips steps: unclear prompts produce plausible-but-wrong code, generation inflates surface area faster than it can be reviewed, testing gets delayed, and deployment becomes a scramble of environment issues, secrets, and unobserved failures. The difference between a demo that “runs” and a product that survives is almost always the discipline of the loop—especially the testing and deployment edges.

In practice, the “best vibe coding tools” are the ones that reinforce the loop rather than just speeding up the generation box, and the most effective vibe coding platforms are the ones that keep context consistent across iterations (requirements → code → tests → deployment signals). A useful lens is to ask where a tool strengthens the cycle’s weakest link:

- Ideation → Prompting: helps convert fuzzy goals into constraints, acceptance criteria, and edge cases.

- Prompting → Generation: keeps codebase context, style, and architecture patterns consistent instead of producing one-off blobs.

- Generation → Testing: drafts meaningful tests, expands coverage, and reduces “happy path” bias.

- Testing → Deployment: integrates CI checks, environment configuration, and release guardrails so shipping stays repeatable.

- Deployment → Next iteration: surfaces logs/metrics and user feedback as structured inputs for the next prompt cycle.
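
The generation-to-testing part of that loop can be sketched in a few lines. The example below is an assumption-laden illustration: `stub_generator` stands in for a real model call, the acceptance criteria are invented for a tiny slug-making function, and `run_loop` is a hypothetical helper, not part of any tool.

```python
from typing import Callable, Dict, List, Optional, Tuple

Slugify = Callable[[str], str]
Check = Callable[[Slugify], bool]

# Acceptance criteria written as named, executable checks (illustrative only).
CHECKS: Dict[str, Check] = {
    "lowercases": lambda f: f("Hello") == "hello",
    "joins words with hyphens": lambda f: f("patient intake") == "patient-intake",
    "trims surrounding whitespace": lambda f: f("  intake  ") == "intake",
}


def failing_checks(candidate: Slugify, checks: Dict[str, Check]) -> List[str]:
    """Return the names of checks the candidate fails; a crash counts as a failure."""
    failures = []
    for name, check in checks.items():
        try:
            passed = check(candidate)
        except Exception:
            passed = False
        if not passed:
            failures.append(name)
    return failures


def run_loop(
    generate: Callable[[List[str]], Slugify],
    checks: Dict[str, Check],
    max_iterations: int = 3,
) -> Tuple[Optional[Slugify], int]:
    """Generate, test, and feed failures back until all checks pass or the budget runs out."""
    feedback: List[str] = []
    for iteration in range(1, max_iterations + 1):
        candidate = generate(feedback)
        feedback = failing_checks(candidate, checks)
        if not feedback:
            return candidate, iteration
    return None, max_iterations


def stub_generator(feedback: List[str]) -> Slugify:
    """Stand-in for a model call: returns a better draft once it sees the failed check."""
    if "trims surrounding whitespace" in feedback:
        return lambda s: "-".join(s.lower().split())
    return lambda s: s.lower().replace(" ", "-")
```

In this toy run the first draft fails the whitespace check, the failure name becomes the next round's input, and the second draft passes. The iteration budget matters as much as the checks: a loop that never converges should stop and hand the problem to a person rather than keep generating.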

An Expert Take: Where Vibe Coding Actually Creates Durable Gains

Vibe coding works best when treated as an assisted workflow that still requires active supervision. AI coding tools can accelerate implementation, but they rarely behave like autonomous builders; their output quality depends on tight scoping, clear intent, and continuous verification. The most reliable pattern is incrementalism: prompts that target small, well-defined slices of functionality, combined with an explicit plan for how those slices assemble into a coherent system.

“AI-assisted coding relocates effort. Typing becomes cheaper; judgment becomes the constraint. The first draft arrives quickly, but the risk surface expands just as fast—misread requirements, implicit assumptions, brittle edge-case behavior, and security gaps that look harmless until a real workflow hits them. Durable progress comes from treating prompts as executable specifications: narrow the slice of work, state constraints and acceptance criteria, generate, then immediately interrogate the output with review, tests, and operational checks. When teams run that loop consistently, AI accelerates delivery without diluting accountability; when they don’t, speed mainly produces more code whose intent is unclear, whose behavior is unproven, and whose future changes get expensive.”

— Slava K., CEO of TATEEDA GLOBAL

In practice, effective AI-assisted development looks less like “one big prompt” and more like a controlled drafting pipeline. Teams that get consistent results typically invest in prompt discipline (sometimes even asking AI to produce higher-grade prompts and checklists first), keep requirements visible, and cross-check answers across multiple models to reduce blind spots and tool-specific failure modes. Using several assistants—GitHub Copilot, ChatGPT/Codex, Gemini, Grok, and others—also helps when one model stalls, misunderstands context, or produces code that conflicts with the chosen stack.

A practical “in-a-nutshell” operating model:

- Start with a build plan: goals, constraints, key flows, and a short definition of done.

- Prompt in small units: one function, one component, one integration step—then validate before expanding scope.

- Ask for “expert prompts” first: have AI generate structured prompts, acceptance criteria, and edge cases before generating the code.

- Compare outputs across tools: use two or more models to sanity-check logic, security assumptions, and implementation approach.

- Treat AI output as a draft: review, refactor, and test immediately; avoid merging opaque code no one can explain.
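
The “compare outputs across tools” step can be partly automated by running two candidate implementations on the same probe inputs and listing where they disagree. The sketch below assumes two hypothetical drafts of an averaging function, labeled as if they came from different assistants; the function names and probe values are invented for illustration.

```python
from typing import Any, Callable, Iterable, List, Tuple

Outcome = Tuple[str, Any]


def outcome_of(func: Callable[[Any], Any], arg: Any) -> Outcome:
    """Run func(arg) and record either its result or the type of error it raised."""
    try:
        return ("ok", func(arg))
    except Exception as exc:
        return ("error", type(exc).__name__)


def disagreements(
    impl_a: Callable[[Any], Any],
    impl_b: Callable[[Any], Any],
    inputs: Iterable[Any],
) -> List[Tuple[Any, Outcome, Outcome]]:
    """List every probe input on which the two implementations behave differently."""
    diffs = []
    for arg in inputs:
        a, b = outcome_of(impl_a, arg), outcome_of(impl_b, arg)
        if a != b:
            diffs.append((arg, a, b))
    return diffs


# Two hypothetical drafts of the same function, as if from different assistants.
def mean_from_model_a(values: List[float]) -> float:
    return sum(values) / len(values)


def mean_from_model_b(values: List[float]) -> float:
    return sum(values) / len(values) if values else 0.0


PROBES = [[1, 2, 3], [5], []]  # includes the empty-list edge case on purpose
found = disagreements(mean_from_model_a, mean_from_model_b, PROBES)
```

Here the two drafts agree on ordinary inputs and diverge only on the empty list, where one raises and the other returns 0.0. The comparison does not say which behavior is correct; it surfaces a requirement nobody wrote down, which a reviewer then has to decide.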

Are Developers Obsolete Because of Vibe Coding?

Short answer: no—commercial software still demands engineering, not just code generation. Vibe coding makes code production cheaper and faster, but commercial projects remain constrained by requirements ambiguity, integration edge cases, security and privacy obligations, performance under load, incident response, and long-term maintainability. Organizations still pay for outcomes—reliable workflows, predictable releases, controlled risk—not for keystrokes. That keeps professional developers and engineers in demand because they translate intent into systems that survive real users, real data, and real operational pressure.

The role of developers is shifting upward, not disappearing. Engineers increasingly function as system designers, reviewers, and operators of AI-assisted pipelines: defining constraints and acceptance criteria, selecting architectures, enforcing standards, proving correctness via tests, and building telemetry plus guardrails that keep production sane. Vibe coding can ship a demo; engineering ships a product that can be maintained, audited, secured, and extended by a team—especially in regulated environments (health tech, fintech) where reliability, privacy, and traceability are conditions for doing business.

What “AI-enhanced developers” deliver that pure vibe coding usually cannot (at scale):

| Benefit of AI-enhanced developers (Human + AI) | What it enables in practice | Why it beats pure vibe coding |
|---|---|---|
| 1) Higher throughput per engineer | Fewer people can deliver more features per sprint | Pure vibe coding generates volume, but often shifts effort into rework and debugging |
| 2) Faster time to production-ready | Scaffolding + immediate refactor + tests | Pure vibe coding optimizes for first run, not release quality |
| 3) Better requirements translation | Intent → constraints → acceptance criteria | Pure vibe coding often overfits prompts and misses hidden requirements |
| 4) Architectural coherence | Consistent module boundaries, interfaces, patterns | Pure vibe coding tends to accumulate mismatched styles and ad hoc decisions |
| 5) Lower defect density over time | Reviews + test automation + static analysis | Pure vibe coding commonly delivers only happy-path correctness and leaves brittle edges |
| 6) Stronger security posture | Threat-aware design, secure defaults, least privilege | Pure vibe coding can introduce silent vulnerabilities and weak data handling |
| 7) More reliable integrations | API contracts, retries, idempotency, versioning | Pure vibe coding frequently breaks at integration seams |
| 8) Operability by design | Logs, metrics, alerts, tracing, runbooks | Pure vibe coding often ships without visibility |
| 9) Lower maintenance cost | Readable code, documentation, predictable refactors | Pure vibe coding can leave opaque code no one changes confidently |
| 10) Compliance readiness | Audit trails, access controls, change management | Pure vibe coding rarely produces the artifacts auditors expect |
| 11) More predictable delivery | Smaller change sets, clearer risk boundaries | Pure vibe coding makes scope slippery and progress hard to measure |
| 12) Better cross-model validation | Comparing outputs for logic and security assumptions | Pure vibe coding often trusts one model without triangulation |


Conclusion: Vibe Coding Changes the Workflow, not the Need for Engineers

Vibe coding has clearly moved from a niche practice to a mainstream way of drafting software: prompts become a first-class input, AI accelerates scaffolding and iteration, and teams can reach a working prototype faster than in traditional workflows. However, the article’s core point is that speed alone does not equal delivery. As systems grow, the hard problems reassert themselves—architecture consistency, integration reliability, security posture, testability, operational visibility, and the day-two realities of maintenance and change.

That is why vibe coding is rarely the best fit as a primary approach for commercial products—especially in custom healthcare software development—where software architecture decisions and compliance requirements are non-negotiable. Regulated environments demand traceable changes, controlled access, predictable failure modes, and defensible quality processes; AI can accelerate implementation, but it does not replace accountability for design, verification, and governance. The winning pattern is disciplined AI-assisted engineering: generation speed wrapped in review, testing, security checks, and production readiness.

At TATEEDA, the operating model is built around that balance. Teams combine experienced engineers who can design and validate complex systems with AI-assisted code generation and debugging to maintain a productive delivery rhythm—without turning output volume into unowned risk. The result is practical: faster iteration where it is safe to move quickly, tighter controls where it is necessary to be careful, and cost structures that stay predictable as projects move from prototypes to production-grade software.