AI Myths in Healthcare [2026]: Separate Facts from Fiction

If you run teams or make decisions in care delivery, this guide helps you cut through noisy claims and anchor plans to evidence. CTOs, healthcare decision-makers, and software architects will find clear examples that turn AI myths in healthcare into practical next steps, along with artificial intelligence in healthcare statistics that inform 2025–2026 budgeting, staffing, and governance, so you can move faster without risking patients or compliance.

AI is extensively used in analytical and operational systems across clinics, laboratories, and back offices, yet myths about AI in healthcare persist alongside the headlines. The signal is strong: 66% of U.S. physicians used AI in 2024, and hospital adoption of predictive tools reached about 71% in the same year; the FDA now cites more than 1,000 authorized AI-enabled devices. Public caution persists, with 60% of Americans uncomfortable when their own care might rely on AI, and clinical trials show nuance, with ambient AI scribes cutting burnout from 51.9% to 38.8% in 30 days.

Expect these adoption curves and concerns to evolve through 2025 and 2026 as custom agentic AI software development scales, governance matures, and buyer expectations for safety and explainability harden into procurement checklists that keep healthcare AI myths debunked in practice.

Below is a plain-spoken tour of medical AI myths where perception and reality collide in real workflows. Use it to start debunking AI myths for healthcare in your organization during 2025 to 2026 planning cycles, shape roadmaps with measurable outcomes, track healthcare agentic AI trends that signal where to invest, and pick first steps that actually deliver.

| Myth | Healthcare AI myth explained | Why did this myth appear | What is affected and how |
|---|---|---|---|
| 1. AI will replace clinicians | Algorithms seem faster at pattern detection, so people assume doctors and nurses become redundant. | Media demos beating benchmarks, sensational headlines, and confusion between assistance and autonomy. | Staffing plans and clinician trust; leads to resistance, over-automation, and unsafe delegation of decisions. |
| 2. More data always means better care | Volume is equated with quality, as if bigger datasets automatically improve outcomes. | Big data narratives and leaderboard culture that reward size over relevance and curation. | Data sprawl, higher costs, privacy exposure, and noisier inputs that degrade model performance. |
| 3. FDA clearance means plug-and-play | A cleared device is treated as a drop-in solution for any setting. | Marketing shorthand that conflates safety and effectiveness with workflow fit. | Budgets and timelines; underestimates integration, training, and post-market monitoring needs. |
| 4. HIPAA compliance equals security | A checkbox is taken as proof that systems are fully secure. | Compliance language is mistaken for operational security practice. | PHI exposure and incident risk; gaps in access control, logging, and response readiness. |
| 5. AI decisions are objective and bias-free | Machines are assumed neutral because they lack emotions. | Misunderstanding of training data and historical inequities embedded in records. | Equity and outcomes; hidden disparities, legal exposure, and loss of community trust. |
| 6. Interoperability is solved, so AI can read everything | APIs are seen as semantic agreement across hospitals. | FHIR success stories oversold as universal standardization. | Data mismapping, model errors, and patient safety events from subtle meaning differences. |
| 7. Explainability is optional if accuracy looks good | High accuracy is thought to be enough to drive adoption. | Benchmark thinking and focus on aggregate metrics over bedside trust. | Clinician acceptance and oversight; slower uptake and inappropriate reliance in edge cases. |
| 8. Chatbots can safely handle all patient questions | A tuned assistant is expected to triage and educate without supervision. | Extrapolation from retail support bots to clinical risk domains. | Patient safety and liability; escalation delays and harmful advice if intents are too broad. |
| 9. Cloud is inherently insecure for PHI | On-premises is assumed safer by default. | Memory of early breaches and a desire for physical control. | Cost and agility; missed security primitives, slower delivery, and fragmented tooling. |
| 10. AI agents can run clinical workflows on their own | Autonomous agents are presumed able to order, message, and close loops. | Hype from general AI narratives and slick demos. | Safety, ethics, and legal accountability; requires human approval gates that are often skipped. |
| 11. Ambient documentation ends note burden overnight | Scribes are expected to eliminate charting immediately. | Vendor promises and underestimation of behavior change. | Change fatigue and unmet expectations; quality issues without review and consent routines. |
| 12. One model can serve every specialty | A universal model is expected to work equally well across domains. | Desire for consolidation and simplified operations. | Specialty performance and safety; misses context-specific signals and error tolerances. |
| 13. Once deployed, models stay accurate | Post go-live performance is treated as stable. | Software maintenance habits projected onto data-driven systems. | Silent drift and calibration errors; gradual loss of accuracy and clinician confidence. |
| 14. De-identified data is risk-free | Removing direct identifiers is seen as complete protection. | Oversimplified views of re-identification risk and linkage attacks. | Privacy and reputation; unexpected re-identification and contract violations. |
| 15. AI will automatically worsen inequities | Bias is assumed to be inevitable and unfixable. | Backlash to highly publicized harms and mistrust of data. | Adoption and innovation; paralysis that prevents targeted improvements and outreach. |
| 16. A great AUC guarantees quick ROI | A single metric is treated as a proxy for financial outcomes. | KPI simplification and pressure for fast wins. | Budget and ops design; misallocated spend and stalled projects without workflow changes. |
| 17. Pick a platform first, then the use cases | Buying a platform is expected to create value on its own. | Procurement comfort and fear of missing out on standards. | Time to value and shelfware; diffuse scope and weak alignment to clinical pain points. |
| 18. Fine-tune a large model and specialty needs vanish | Tuning is seen as a shortcut to domain expertise. | Blog-driven optimism and underestimation of retrieval and evaluation needs. | Safety and factual grounding; hallucinations and brittle behavior in complex cases. |
| 19. Synthetic data eliminates the need for real clinical data | Generated records are assumed to cover all scenarios. | Privacy pressure and desire to avoid governance overhead. | Generalization and edge cases; blind spots in rare conditions and device quirks. |
| 20. Governance slows innovation | Reviews and checklists are viewed as blockers, not safeguards. | Past experiences with heavy processes and unclear criteria. | Rework and compliance findings; unpredictable approvals and hidden risks that surface late. |

Why we’re qualified to debunk healthcare AI myths

Since 2013, TATEEDA has built and operated HIPAA-grade software for U.S. providers. As a San Diego custom software development company, we work within EHRs, revenue-cycle tools, and clinical operations, which enables us to separate AI myths in healthcare from measurable results and turn medical AI myths into implementation details that leaders can trust.

Working with AYA Healthcare, we put our teams inside credentialing, scheduling, timekeeping, compliance reviews, and audit trails at a national scale. Those firsthand lessons let us test myths about AI solutions in healthcare against real workflows and publish this “healthcare AI myths debunked” review.

Our approach to debunking AI myths for healthcare is simple and transparent: break complex tasks into auditable steps, route each step to the right service or agent, and attach provenance with guardrails and safe-to-fail checks. Integrations cover FHIR R4 reads and writes, HL7 v2 feeds, SMART on FHIR launches, payer and clearinghouse APIs, device clouds, scheduling, secure messaging, and analytics; security practice includes least-privilege access, role-based controls, PHI minimization, consent capture, immutable logs, and human approval gates; releases ship with acceptance tests, drift and quality monitors, canary phases, rollbacks, dashboards, and runbooks.

What this looks like in real work:

- Clinical documentation workflow copilots, triage and intake flows, prior-authorization packet builders, remote-monitoring signal filters, and revenue-cycle helpers that fit existing routines.

- Evaluation harnesses that verify citations, data types, thresholds, payer edits, NCCI rules, and policy constraints before promotion; failures trigger targeted replans that reduce rework and improve auditability.

- A delivery model that pairs U.S. discovery and architecture with nearshore engineering for speed, overlap, and value, keeping pilots moving while preserving control and visibility.

Result: a practitioner team that knows where AI myths in healthcare originate, how to test them in production, and how to convert them into safe, observable systems that clinicians accept and executives can measure.

20 AI Myths vs Realities in Healthcare: What Teams Should Know

The list below frames each misconception as it is usually stated in meetings or vendor decks, then contrasts it with what actually happens in clinical environments. Use it to set expectations, scope first projects, and decide where policy, process, or engineering changes are required before software ships.

Myth 1: AI Will Replace Clinicians

Myth: Algorithms read journals in seconds and outperform humans at pattern detection, so doctors and nurses will become redundant, one of the oldest AI myths in healthcare.

Reality: Clinical care mixes pattern recognition with empathy, consent, context, and accountability; tools can draft, summarize, or rank, while licensed professionals explain options, document reasoning, and take responsibility. Clear task boundaries, including steps that require a human signature, reduce ambiguity and risk, and periodic sampling of decisions confirms the line still holds.

Interfaces that embed supervision, offer easy escalation, and capture clinician rationale support audits and ongoing learning, and when paired with AI-driven integration of wearable device data into EHRs, they also surface timely, context-aware insights that clinicians can trust and act on.

Myth 2: More Data Always Means Better Care

Myth: If you pour in enough data, performance will rise without limits, a familiar theme in myths about AI healthcare.

Reality: Healthcare data is messy and uneven across sites; better results come from curated cohorts, documented provenance, and governance that tracks lineage and corrections. A minimal feature set often outperforms sprawling inputs during validation because marginal gains from each added field can be measured clearly. Priority typically goes to elements that reduce error for underrepresented groups rather than inflate headline metrics.

Myth 3: FDA Clearance Means Plug-and-Play

Myth: Once a product is cleared or approved, you can drop it into the EHR and expect instant value, a persistent medical AI myth around regulation.

Reality: Clearance validates safety and effectiveness for a defined use; real gains require workflow fit, integration, guardrails, change management, and post-market monitoring. Limited-scope pilots with explicit go/no-go criteria and documented rollback plans tend to surface integration gaps early. Adoption rates and false-alert rates, broken out by role, provide fast feedback for training and configuration.

Myth 4: HIPAA Compliance Equals Security

Myth: A HIPAA logo means the system is secure, which often appears in AI myths in healthcare lists.

Reality: Compliance is the floor; strong security uses least-privilege access, segmentation, encryption, audited keys, phishing-resistant authentication, and tested incident response. Regular credential rotation, tabletop breach exercises, and release-time configuration scans lower residual risk. Secrets and identities function as living assets with owners and expiry dates, not static checkboxes.

Myth 5: AI Decisions Are Objective and Free of Bias

Myth: Machines lack feelings, and therefore, outputs are neutral, a core claim in myths about AI healthcare.

Reality: Models learn from historical data that reflects inequities; fairness reviews, subgroup reporting, representative training, and recourse mechanisms protect patients and keep medical AI myths in check. Subgroup dashboards published with each model update make performance differences visible, while a simple "flag harm" control for clinicians keeps feedback flowing. Multidisciplinary review of those flags, with authority to adjust thresholds, turns concerns into changes.

Myth 6: Interoperability is Solved, so AI Can Read Everything

Myth: With modern APIs in place, systems can absorb the entire chart across organizations, another common AI myth in healthcare.

Reality: Interfaces exist, yet semantics differ across hospitals; winners define the minimal clinical context per use case, validate on real records, and improve iteratively. A living mapping glossary shared across teams prevents semantic drift as systems evolve, and reconciliation time during go-lives avoids last-minute surprises. Explicit input quality metrics catch degraded feeds before they distort outputs. As healthcare mobile app trends in 2026 tilt toward mobile-first intake, wearable streams, and edge inference, consistent semantics across APIs and devices becomes a prerequisite for safe AI, with input validation and provenance tracking moving from nice-to-have to mandatory.

Myth 7: Explainability Is Optional if Accuracy Looks Good

Myth: High accuracy is enough to drive adoption, a repeat theme in myths about AI healthcare.

Reality: Trust grows when systems show their work; saliency on images, key chart features, confidence intervals, and comparable cases help clinicians decide when to accept a suggestion or escalate. One-click access to supporting evidence and note templates that capture why advice was accepted or declined improves trust without slowing care. Explanations that mirror the model’s actual reasoning, rather than decorative overlays, foster appropriate reliance.

Myth 8: Chatbots Can Safely Handle All Patient Questions

Myth: A prompt-tuned assistant can schedule, triage, educate, and follow up without supervision, fueling medical AI myths about autonomy.

Reality: Safe deployments keep narrow intents, clear escalation rules, logging, and no free-form clinical advice; anything uncertain routes to humans quickly. Handoff rates, near misses, and patient sentiment form a safer success scorecard than completion counts alone. Periodic transcript reviews with clinicians refine intents and scripts as real questions evolve.

Myth 9: Cloud Is Inherently Insecure for PHI

Myth: Protected health information belongs on premises, or it is exposed, a durable myth about AI healthcare.

Reality: Major providers offer encryption, logging, private networking, and boundary control; the weak points are configuration and process. A documented shared-responsibility matrix, backed by policy-as-code checks in CI, clarifies who guards which controls. Independent third-party reviews validate that the live environment still matches the design.

Myth 10: AI Agents Can Run Clinical Workflows On Their Own

Myth: Capable multi-agent healthcare AI systems can read notes, place orders, and message patients without oversight, reinforcing risky AI myths in healthcare.

Reality: Autonomy raises safety, legal, and ethical issues; safer use has agents draft, gather, and summarize, while humans approve actions, and audit trails capture who did what and why. Write permissions that default to draft states keep irreversible actions out of automated hands. Full provenance on each action makes investigations straightforward and supports patient safety committees.

Myth 11: Ambient Documentation Ends Note Burden Overnight

Myth: Install a scribe and the chart writes itself, one of the resilient medical AI myths.

Reality: Gains arrive with consent language, microphone discipline, and clinician review; early trials show benefit when workflows are tuned. Clear criteria for a good note help clinicians calibrate expectations as habits shift. Edit rates and turnaround times reveal where additional tuning or education is still needed.

Myth 12: One Model Can Serve Every Specialty

Myth: A single generalized model can power radiology, dermatology, cardiology, and psychiatry at the same level, a sweeping AI myth in healthcare.

Reality: Modalities and error costs differ; many teams pair a general model for retrieval or summarization with specialty models tuned to decisions that matter in each domain. Separate evaluation suites with specialty-specific metrics protect domain safety requirements. Independent thresholds for each service prevent one specialty’s risk tolerance from leaking into another.

Myth 13: Once Deployed, Models Stay Accurate

Myth: After go-live, results hold steady, a quiet medical AI myth.

Reality: Devices change, codes drift, and populations shift; monitoring performance, calibration, and equity with scheduled re-validation prevents silent degradation. Dataset versions labeled by vintage make time-based shifts visible during analysis. Drift tracked as an operational metric, with named owners and remediation playbooks, keeps accuracy from eroding silently.

Myth 14: De-identified Data Is Risk Free

Myth: Strip identifiers and data is safe to share widely, a subtle AI myth in healthcare.

Reality: Re-identification depends on context and auxiliary data; strong policy, contracts, review boards, and anomaly detection protect patients. Limited datasets and differential privacy, where appropriate, reduce re-identification risk without halting progress. Access logs tagged with the purpose of use and periodic audits place accountability on record.

Myth 15: AI Will Automatically Worsen Inequities

Myth: Since data is biased, systems must make outcomes worse, another broad myth about AI healthcare.

Reality: With intent, systems can detect gaps, standardize follow-up, and surface overlooked signals; explicit subgroup reporting and tailored thresholds convert equity goals into operations. Dedicated funding for remediation work signals that equity fixes are part of the roadmap, not side projects. Community input during success criteria design anchors outcomes to the people being served.

Myth 16: A Great AUC Guarantees Quick ROI

Myth: Accuracy alone delivers financial results, a boardroom-flavored medical AI myth.

Reality: ROI depends on adoption, workflow redesign, contracts, and downstream capacity; a readmission model without a staffed program is the classic miss. Operational ownership for each use case, paired with a measurable target and plan, aligns technical metrics with service goals. Training, workflow redesign, and staffing appear as necessary line items on the same budget as the model.

Myth 17: Pick a Platform First, Then the Use Cases

Myth: Choose one vendor and the rest will follow, a procurement-shaped myth about AI healthcare.

Reality: Value arrives through specific pains clinicians feel; start narrow, define success, then build shared identity, data access, and logging. Common plumbing tends to emerge naturally after a few wins reveal repeatable patterns. Components that remain unused after several cycles become candidates for retirement to limit complexity.

Myth 18: Fine-Tune a Large Model and Specialty Needs Vanish

Myth: Add your data to a base model, and it behaves like a seasoned specialist, a hopeful medical AI myth.

Reality: Fine-tuning helps with tone and templates, yet safety and factual grounding rely on careful retrieval, curated sources, and evaluation harnesses. A standing red team routine focused on edge cases exposes brittle spots before patients are affected. Scenario-specific test sets catch regressions early and keep release notes honest about known limitations.

Myth 19: Synthetic Data Eliminates the Need for Real Clinical Data

Myth: Generate enough synthetic records, and you can train anything, another persistent AI myth in healthcare.

Reality: Synthetic data helps with testing and education, but cannot capture rare edge cases and documentation quirks; treat it as a supplement, not a stand-in. Generated records marked clearly as synthetic prevent accidental contamination of production analytics. Validation against real-world distributions remains the last gate before promotion.

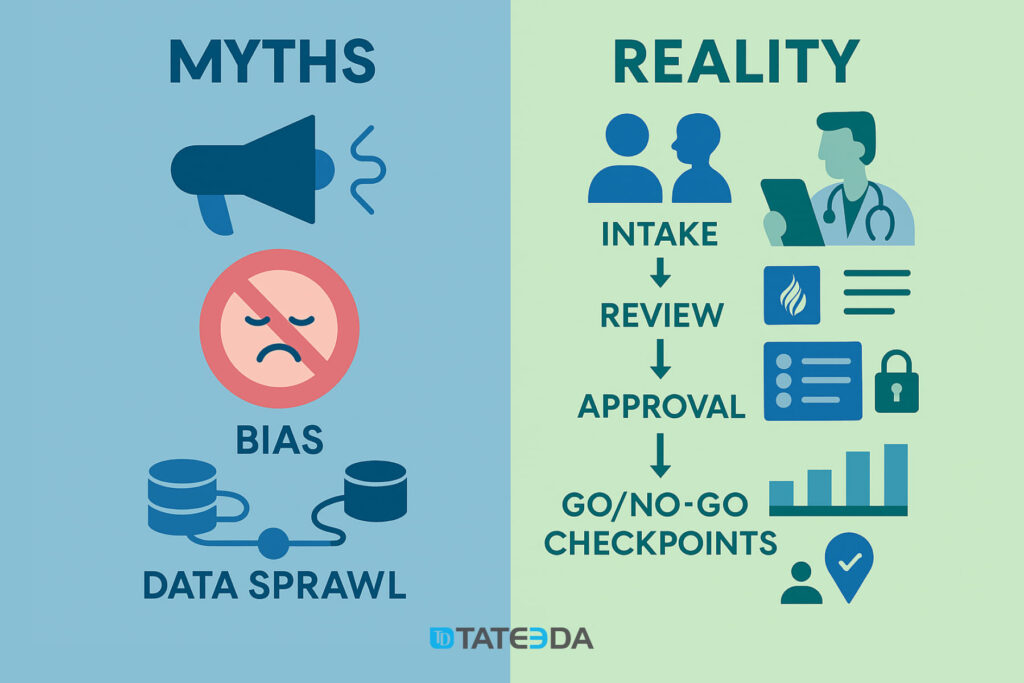

Myth 20: Governance Slows Innovation

Myth: Committees and checklists strangle progress, a quiet myth about AI healthcare.

Reality: Lightweight intake, clinical champions, focused security reviews, and predictable go/no-go cadences cut rework and speed approvals. A concise policy that lists gates, owners, and required evidence gives teams a predictable path from idea to deployment. Cycle time tracking through each gate keeps the process transparent and improvable.

How to Approach AI in Healthcare Without the Myths

Start with a single, narrow problem, not a platform. This simple move is the antidote to many AI myths in healthcare. Choose a use case clinicians actually feel, for example, automating prior authorization packets, flagging missed preventive care, or drafting notes for specific visit types, then define one or two outcome metrics such as turnaround time and rework rate. Pilot in one clinic, run in read-only or suggest-only mode first, and document results so your healthcare AI myths debunked story is backed by before-and-after numbers, not slogans. When possible, compare to a small control group so artificial intelligence in healthcare statistics reflects causation rather than coincidence.

- Pick one clinic or service line and write a one-page success spec.

- Pre-agree on 2 metrics and a baseline window.

- Run suggest-only first, then graduate to approvals.
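The comparison against a small control group can be sketched as a simple difference-in-differences calculation. This is a minimal illustration, not a full statistical analysis: the function name `diff_in_diff` and the turnaround times are hypothetical, and a real pilot would also need enough cases and a significance check.

```python
from statistics import mean


def diff_in_diff(pilot_before, pilot_after, control_before, control_after):
    """Change in the pilot group minus the change in the control group.

    Subtracting the control group's change removes improvement that
    would have happened anyway (seasonality, staffing changes).
    """
    pilot_change = mean(pilot_after) - mean(pilot_before)
    control_change = mean(control_after) - mean(control_before)
    return pilot_change - control_change


# Hypothetical prior-authorization turnaround times, in hours.
pilot_before = [48, 52, 50, 46]    # pilot clinic, baseline window
pilot_after = [30, 34, 32, 28]     # pilot clinic, suggest-only mode live
control_before = [47, 51, 49, 53]  # comparison clinic, same baseline window
control_after = [45, 49, 47, 51]   # comparison clinic, no AI assist

effect = diff_in_diff(pilot_before, pilot_after, control_before, control_after)
print(effect)  # -16: about 16 hours faster than the control trend explains
```

A raw before-and-after comparison on the pilot clinic alone would report an 18-hour gain; the control group shows that 2 of those hours arrived without the tool.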

Design the workflow first, then the model. If nurses triage calls, walk through their screens and scripts before writing code. Map who clicks what, where data is missing, and where delays appear. That habit counters myths about AI healthcare by aligning tools to rhythms that drive adoption. Wire the solution into touchpoints such as SMART on FHIR buttons, CDS Hooks cards, and EHR inbox tasks, and add short in-product tips so new steps do not require classroom training. The payoff shows up in artificial intelligence in healthcare statistics like reduced handoffs and fewer abandoned tasks.

- Create a click-path diagram and pain-point list.

- Place the assist at the existing decision moment.

- Add micro-tips and record completion time deltas.

Treat data as a product. Decide which tables, scans, and documents are in scope and assign clear owners for each. Write down lineage, refresh cadence, and quality checks, which is a practical way of debunking AI myths for healthcare when teams assume more data alone will help. Include wearables and home devices where they matter. AI-driven integration of wearable device data into EHRs can supply timely vitals and adherence signals, but only after calibration, consent language, and device metadata are captured consistently. This is how medical AI myths about easy data wins become durable data practice.

- Publish a mini data contract per source with the owner and SLA.

- Track freshness, completeness, and drift as visible metrics.

- Gate new inputs behind a validation checklist.
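A mini data contract can be small enough to live next to the pipeline code. The sketch below assumes a hypothetical `DataContract` shape and a hypothetical wearable-vitals feed; field names, owners, and SLA values are placeholders to adapt, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DataContract:
    source: str
    owner: str
    max_age: timedelta         # freshness SLA
    required_fields: tuple     # fields every record must carry
    min_completeness: float    # minimum share of fully populated records


def check_contract(contract, records, last_refresh, now):
    """Return a list of violation labels; an empty list means the feed passes."""
    violations = []
    if now - last_refresh > contract.max_age:
        violations.append("stale")
    if records:
        complete = sum(
            all(r.get(f) is not None for f in contract.required_fields)
            for r in records
        )
        completeness = complete / len(records)
    else:
        completeness = 0.0  # an empty feed counts as incomplete
    if completeness < contract.min_completeness:
        violations.append("incomplete")
    return violations


# Hypothetical contract for a wearable vitals feed.
contract = DataContract(
    source="wearable_vitals",
    owner="remote-monitoring-team",
    max_age=timedelta(hours=1),
    required_fields=("patient_id", "heart_rate", "device_model"),
    min_completeness=0.9,
)
now = datetime(2026, 1, 15, 12, 0)
records = [
    {"patient_id": "p1", "heart_rate": 72, "device_model": "band-a"},
    {"patient_id": "p2", "heart_rate": 80, "device_model": "band-a"},
    {"patient_id": "p3", "heart_rate": 65, "device_model": None},  # metadata missing
    {"patient_id": "p4", "heart_rate": 90, "device_model": "band-b"},
]
result = check_contract(contract, records, now - timedelta(minutes=30), now)
print(result)  # ['incomplete']
```

Running a check like this before each model refresh is one way to make freshness and completeness visible metrics rather than assumptions.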

Build for explainability and recourse. Provide signals that show why the tool suggested something, for example, key chart features, saliency on an image, or a citation snippet from a validated library. Give clinicians a one-click way to correct the output and mark the reason. That loop converts AI myths in healthcare into trust and creates a record that audits can draw on. Avoid decorative explanations that do not match real model behavior; clarity beats cleverness.

- Show the top 3 contributing factors with confidence.

- Log accept, modify, or reject with reason codes.

- Review explanations in monthly safety rounds.
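The accept, modify, or reject log can start as a very small structure. The following sketch uses hypothetical names (`Feedback`, `record`, `override_reasons`) and made-up reason codes; the one design choice it illustrates is that a modify or reject entry is refused unless it carries a reason.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ACCEPT = "accept"
    MODIFY = "modify"
    REJECT = "reject"


@dataclass(frozen=True)
class Feedback:
    suggestion_id: str
    clinician_role: str
    decision: Decision
    reason_code: Optional[str] = None


def record(log, entry):
    """Append feedback; modify and reject entries must carry a reason code."""
    if entry.decision is not Decision.ACCEPT and not entry.reason_code:
        raise ValueError("modify/reject feedback requires a reason code")
    log.append(entry)


def override_reasons(log):
    """Count reason codes across all non-accepted suggestions."""
    return Counter(
        e.reason_code for e in log if e.decision is not Decision.ACCEPT
    )


# Hypothetical entries for a monthly safety round.
log = []
record(log, Feedback("s1", "physician", Decision.ACCEPT))
record(log, Feedback("s2", "nurse", Decision.REJECT, "OUTDATED_LAB"))
record(log, Feedback("s3", "physician", Decision.MODIFY, "WRONG_DOSE"))
record(log, Feedback("s4", "physician", Decision.REJECT, "OUTDATED_LAB"))

print(override_reasons(log).most_common(1))  # [('OUTDATED_LAB', 2)]
```

The most frequent reason codes give the safety round a concrete agenda instead of anecdotes.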

Measure what matters and watch it over time. Beyond model accuracy, track adoption, alert fatigue, manual overrides, turnaround time, and subgroup equity. These make your artificial intelligence in healthcare statistics reflect lived reality, not just sandbox performance. Keep a model registry with version, dataset vintage, known failure modes, and owner. Monthly reviews that include a clinical lead and a data lead keep myths about AI healthcare from creeping back into planning.

- Stand up a model registry and an outcomes dashboard.

- Break results out by site, role, and subgroup.

- Set thresholds that trigger rollback or retraining.
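One possible shape for a registry entry with rollback thresholds is sketched below. The `RegistryEntry` fields mirror the items named above (version, dataset vintage, known failure modes, owner); the threshold values, model name, and site labels are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass, field


@dataclass
class RegistryEntry:
    name: str
    version: str
    dataset_vintage: str
    owner: str
    known_failure_modes: list = field(default_factory=list)
    min_subgroup_sensitivity: float = 0.80  # assumed floor for any subgroup
    max_override_rate: float = 0.30         # assumed ceiling on manual overrides


def sensitivity_by_subgroup(rows):
    """rows: iterable of (subgroup, y_true, y_pred) with 0/1 labels."""
    true_pos, positives = {}, {}
    for group, y_true, y_pred in rows:
        if y_true == 1:
            positives[group] = positives.get(group, 0) + 1
            true_pos[group] = true_pos.get(group, 0) + (1 if y_pred == 1 else 0)
    return {g: true_pos[g] / positives[g] for g in positives}


def needs_rollback(entry, subgroup_sensitivity, override_rate):
    """True when the worst subgroup or the override rate breaches a threshold."""
    # With no subgroup evidence at all, fail safe and flag the model.
    worst = min(subgroup_sensitivity.values(), default=0.0)
    return (
        worst < entry.min_subgroup_sensitivity
        or override_rate > entry.max_override_rate
    )


entry = RegistryEntry(
    name="readmission-risk",
    version="1.4.0",
    dataset_vintage="2025-Q3",
    owner="clinical-analytics",
    known_failure_modes=["sparse history for new patients"],
)
rows = (
    [("site_a", 1, 1)] * 4      # site A: 4 of 4 true cases caught
    + [("site_b", 1, 1)] * 2    # site B: 2 of 4 true cases caught
    + [("site_b", 1, 0)] * 2
    + [("site_a", 0, 0)]        # negatives do not affect sensitivity
)
sens = sensitivity_by_subgroup(rows)
print(sens)                                             # {'site_a': 1.0, 'site_b': 0.5}
print(needs_rollback(entry, sens, override_rate=0.10))  # True
```

Pooled across both sites, sensitivity is 0.75 and might pass a casual review; the per-site breakdown is what exposes the weaker site.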

Keep humans in the loop. Decide where the human confirms, where the system only suggests, and where the system simply informs. Encode these choices in policy and UI so they persist through staff changes and software updates. This is the everyday work of debunking the healthcare AI myth that autonomy alone is safe. Track how often staff override suggestions and why, then fold those reasons into training data or simple rules. Patient-facing flows need the same clarity, including escalation paths and consent text that people can understand, which guards against medical AI myths about fully hands-off care.

- Write a short RACI for human vs. system actions.

- Instrument override reasons and review them quarterly.

- Add visible escalation paths for staff and patients.
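Encoding the human-confirms rule in software can look like the sketch below, where every action starts as a draft and certain kinds cannot execute without a recorded approval. The names (`DraftAction`, `REQUIRES_HUMAN`, `approve`, `execute`) and the policy set are hypothetical; a real system would enforce this in the EHR or integration layer as well.

```python
from dataclasses import dataclass, field

# Hypothetical policy: action kinds that always need a human sign-off.
REQUIRES_HUMAN = {"medication_order", "patient_message"}


@dataclass
class DraftAction:
    action_id: str
    kind: str
    payload: dict
    status: str = "draft"                      # every action starts as a draft
    audit: list = field(default_factory=list)  # (event, actor, note) tuples


def approve(action, clinician_id, reason):
    """Record who approved the draft and why."""
    action.status = "approved"
    action.audit.append(("approved", clinician_id, reason))


def execute(action, send):
    """Send the payload only if policy allows; log every attempt."""
    if action.kind in REQUIRES_HUMAN and action.status != "approved":
        action.audit.append(("blocked", "system", "missing human approval"))
        raise PermissionError(f"{action.kind} requires human approval")
    send(action.payload)
    action.status = "executed"
    action.audit.append(("executed", "system", ""))


outbox = []
order = DraftAction("a1", "medication_order", {"drug": "example", "dose": "5 mg"})

try:
    execute(order, outbox.append)  # the agent tries to act on its own
except PermissionError as err:
    print(err)                     # medication_order requires human approval

approve(order, "dr_lee", "dose verified against chart")
execute(order, outbox.append)
print(order.status, len(outbox))   # executed 1
```

The audit list keeps the blocked attempt, the approval, and the execution in order, so a later review can see who did what and why.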

Plan for drift and change. Create re-validation schedules, rollback paths, and a habit of small releases. Devices change, coding practices drift, and populations shift, so AI myths in healthcare about permanence give way to a lifecycle mindset. Shadow tests before promotion, canary releases with tight monitoring, and automatic alerts on calibration or equity drift keep healthcare AI myths debunked as an operating norm rather than a one-time clean-up.

- Calendar re-validation by model and by site.

- Use canary cohorts with automated rollback criteria.

- Monitor calibration and equity drift as first-class signals.
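Calibration drift can be watched with a simple expected calibration error (ECE) comparison between a baseline window and the current canary cohort. This is a minimal sketch with equal-width bins; the function names, the five-bin default, and the 0.05 tolerance are assumptions to tune, and production monitoring would need far more than four predictions per window.

```python
def expected_calibration_error(probs, labels, n_bins=5):
    """Weighted average gap between predicted probability and observed rate."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, y))
    total = len(probs)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(p for p, _ in bucket) / len(bucket)
        event_rate = sum(y for _, y in bucket) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - event_rate)
    return ece


def calibration_drifted(baseline_ece, current_ece, tolerance=0.05):
    """True when calibration has worsened by more than the tolerance."""
    return current_ece - baseline_ece > tolerance


# Hypothetical risk scores; same predictions, different observed outcomes.
probs = [0.1, 0.1, 0.9, 0.9]
baseline_labels = [0, 0, 1, 1]  # outcomes matched the scores at validation
current_labels = [0, 1, 0, 1]   # outcomes no longer track the scores

baseline_ece = expected_calibration_error(probs, baseline_labels)
current_ece = expected_calibration_error(probs, current_labels)

print(round(baseline_ece, 3), round(current_ece, 3))   # 0.1 0.4
print(calibration_drifted(baseline_ece, current_ece))  # True
```

The same comparison can be run per site or per subgroup so that equity drift triggers the same alert path as overall calibration drift.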

Invest in governance that accelerates rather than blocks. Lightweight intake forms, clear ownership, and go/no-go checkpoints reduce rework. Security reviews should test real risks such as prompt injection, over-broad API scopes, and PHI handling, not just paperwork. Publishing the minimal evidence needed for approval turns governance from a rumor into a path, which helps retire myths about AI healthcare that compliance and speed cannot coexist.

- One-page intake with owner, data, risks, and metrics.

- Time-boxed security and privacy reviews with checklists.

- Track cycle time through each gate and publish trends.

Choose the simplest technical pattern that works. Retrieval-augmented generation with a curated corpus often beats indiscriminate fine-tuning for clinical text. Event-driven microservices or serverless jobs may be enough for data prep, instead of heavy platforms. These choices limit moving parts and make medical AI myths about one-size-fits-all platforms easier to resist.

- Start with RAG plus a documented corpus and guardrails.

- Keep data prep in small jobs with observable queues.

- Add complexity only when metrics stall.

Prove value where money actually moves. Tie each use case to a service line owner and one operational metric, such as reduced denials, shorter discharge cycles, or quicker message turnaround. This keeps artificial intelligence in healthcare statistics connected to outcomes that finance teams recognize. When the metric improves, expand carefully; when it stalls, fix the workflow before blaming the model.

- Publish before-and-after deltas and note the workflow changes that drove them.

- Assign an operational owner with budget authority.

- Pick a single money-linked metric and a target window.

Final Word: Get AI Integrated into Your Healthcare System

This article separated hype from reality across 20 common AI myths in healthcare, showed why workflow-first design beats platform-first bets, explained how data-as-a-product, explainability, and human-in-the-loop habits build trust, and outlined what to measure over time so models stay safe, accurate, and useful.

As a nearshore AI-integrated software development provider in the U.S., TATEEDA designs and ships agentic AI for healthcare that fits clinical work, passes security reviews, integrates with EHR and revenue-cycle systems, and runs with audit trails, drift monitoring, and clear approval gates. In short, we build agentic AI solutions for healthcare without the myths and the problems those myths create. Also, we can help you integrate modern AI platforms with

If you have an AI solution in mind, contact us today and tell us what you want to achieve.

Frequently asked questions

Does AI make clinical decisions today?

AI contributes by summarizing, ranking, or highlighting signals, but licensed clinicians remain responsible for orders and diagnoses; that balance keeps AI myths in healthcare from turning into risky shortcuts and keeps the human decision visible in the record.

How do we keep patient privacy intact with modern AI?

Use strong identity and access controls, encrypt data in transit and at rest, prefer retrieval that leaves PHI in your store, and restrict tuning to de-identified sets under contract. These steps turn myths about AI healthcare into clear policy and yield artificial intelligence in healthcare statistics you can defend.

Where is the best place to start?

Pick a task that consumes time yet carries low risk, such as ambient notes in a well-defined clinic or outreach on missed preventive care, then share results to keep medical AI myths at bay and continue debunking AI myths for healthcare with practical wins.

What skills do we need on the team?

Blend clinical champions with data engineers, security, and product thinkers; add analysts to build evaluation harnesses and ops for monitoring, which directly addresses AI myths in healthcare about magic tools and keeps healthcare AI myths debunked in delivery.

How do we guard against bias?

Report metrics by subgroup from the first experiment, invite patient advocates, document gaps, and test how small configuration changes alter outputs so myths about medical AI do not drift back, and so your artificial intelligence in healthcare statistics include equity, not just accuracy.