How to Integrate Wearable Device Data into EHRs with AI Intelligence

This article is a practical playbook on how to integrate wearable device data into EHRs with AI intelligence. You’ll see the patterns that work for FHIR wearable integration for healthcare providers and how to embed a SMART on FHIR wearables app into the clinician workflow.

We’ll also outline a 30–60–90-day pilot plan, KPIs that prove value, and how to stand up a HIPAA-compliant wearable data pipeline for hospitals. If you’re a healthcare IT leader, clinician, or device maker, you’ll finish with a plan you can act on today.

| ⚠️ If you need immediate help with integrating wearable device data with EHR systems and/or integrating wearable device data into AI solutions, please contact our team. |

Wearables are everywhere in U.S. healthcare—from consumer smartwatches to medical-grade patches—and they’re producing nonstop vitals, sleep, rhythm, and activity data. Roughly one in three U.S. adults uses a health wearable, and more than 80% of those users are willing to share readings with a clinician. Reimbursement is pulling in the same direction: remote patient monitoring claim volumes grew ~1,294% from 2019 to 2022. The opportunity is clear: connect these streams to the chart with patient-generated health data (PGHD) EHR integration, add AI to separate signal from noise, and you get earlier detection, faster follow-up, and clearer conversations with patients and payers. Here’s what the EHR + wearables + AI stack unlocks:

- Continuous monitoring that surfaces meaningful trends for patients and care teams.

- Real-time alerts for arrhythmias, hypoglycemia, oxygen dips, or falls, backed by FDA-cleared wrist ECG for AFib detection.

- AI summaries of weekly vitals and activity inside the EHR for quicker visits and better handoffs.

- Predictive risk scores that flag likely deterioration so teams can intervene earlier.

- Medication and follow-up reminders tied to recent wearable signals in the portal and mobile apps.

- Secure sharing and consent management that satisfy privacy and audit requirements.

Why this matters now: staffing is tight, documentation time is high, and value-based programs reward earlier action and fewer readmissions. Raw device feeds alone can overwhelm teams. With HL7 v2 device data interfaces for events, FHIR write-backs for Observations, and clear billing pathways via RPM CPT codes such as 99454/99457, you convert raw readings into concise trendlines and risk indicators while advancing custom medical AI assistant development anchored in HIPAA-safe workflows.

Health systems are already pairing wearables with predictive analytics to catch deterioration earlier and route alerts safely; the Cleveland Clinic’s collaboration with Masimo on surveillance and AI for continuous monitoring is one example. The outlook is strong. Kaiser Permanente’s virtual cardiac rehab reported an 87% completion rate and <2% readmissions, demonstrating how integrated wearables can outperform traditional models.

On the diagnostics side, the Mayo Clinic demonstrated that AI can analyze Apple Watch ECGs to flag asymptomatic left-ventricular dysfunction, and patients can transmit their Apple Watch ECGs directly into the EHR via a secure Mayo app. Add a focused pilot that proves value in 60–90 days, then scale by specialty. The result is calmer clinician workflows, more responsive patient engagement, and a data foundation ready for the next wave of AI-enabled virtual care.

Why TATEEDA is Qualified to Integrate Wearables, EHRs, and AI

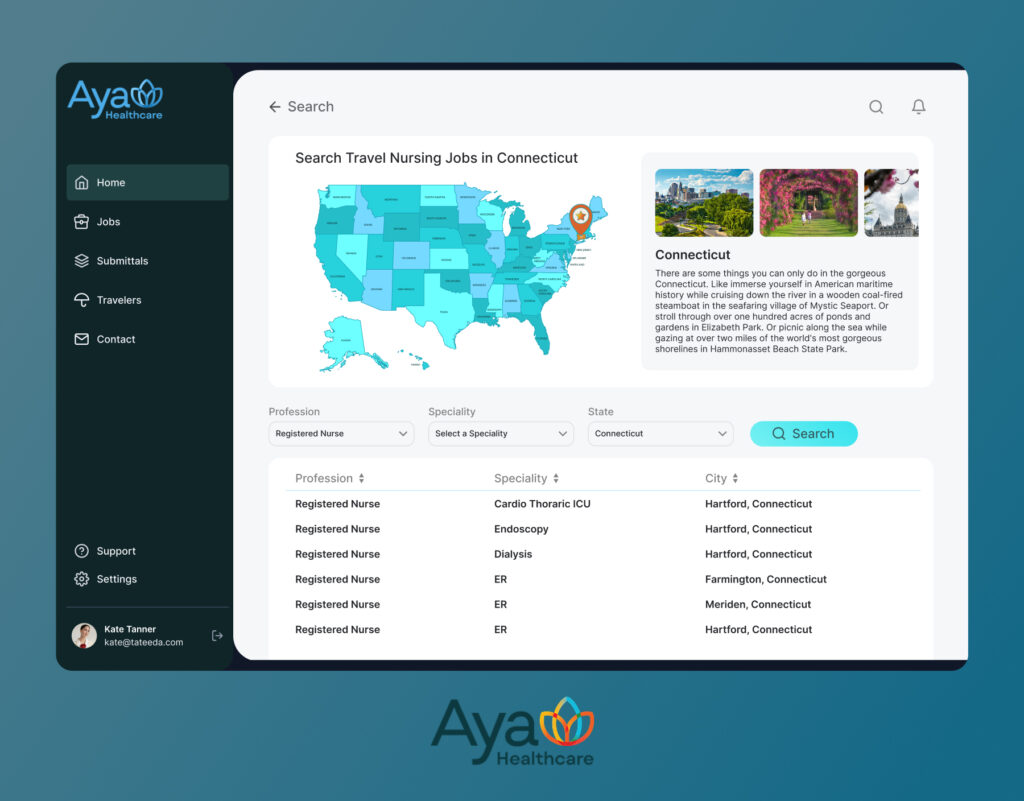

Since 2013, TATEEDA has built healthcare platforms as a custom software development company in San Diego, backed by senior nearshore teams in LATAM and Europe. A flagship example is our work with AYA Healthcare, one of the largest nurse staffing agencies in the United States. We helped power a high-volume staffing platform that processes thousands of users daily and runs safely under HIPAA. That experience translates directly to custom healthcare software development services for wearable, EHR, and AI programs.

What we delivered for AYA—and why it matters here:

- AI components for workforce ops: NLP resume intake, ranking and matching, anomaly checks in timekeeping, and assistant features for recruiters and staff. This mirrors custom medical AI assistant development patterns used to summarize signals, flag risk, and route tasks in clinical workflows.

- Credentialing and compliance at scale: License capture, renewal alerts, audit trails, and role-based access. These controls map to PHI safeguards, consent flows, and tamper-evident logging required when wearable data lands in the EHR.

- Scheduling and mobile experience: Self-service onboarding, shift bids, and notifications. The same mobile patterns support patient reminders, symptom check-ins, and secure messaging when you connect wearable streams to portals and EHR apps.

- Integration depth: Interfaces to third-party systems and payer workflows. The same approach applies to FHIR R4 read/write, HL7 v2 feeds, SMART on FHIR launches, and identity via OAuth 2.0 or OIDC when connecting devices, device clouds, and developing custom EHRs or EMRs.

Result: a team that ships secure, high-throughput healthcare software, then hardens it with identity, audit, and change control. If your next step is integrating wearable device data with EHRs and AI, we bring the execution habits from AYA’s at-scale platform—clear interfaces, measurable KPIs, and safe automation—to your clinical use cases.


Executive Overview: Why Integrate Wearable Devices with EHR and AI Now

This overview explains why U.S. providers should integrate wearable devices with EHRs and AI now. You will learn how to integrate wearable device data into EHRs with AI intelligence using patterns that already work in hospitals: FHIR reads and writes, SMART on FHIR (Substitutable Medical Applications and Reusable Technologies on FHIR) launch, and HL7 v2 (Health Level Seven version 2) event feeds.

You will also see how to stand up a HIPAA-ready data pipeline, and how to run a 30-60-90 day pilot that proves value with clean KPIs before you scale.

Contemporary wearable devices to plan for in 2025–2026:

- Apple Watch (latest series) and Samsung Galaxy Watch

- Google Pixel Watch and Garmin Venu or Forerunner lines

- Oura Ring and Galaxy Ring; WHOOP strap

- Withings ScanWatch; Omron HeartGuide (wrist blood pressure)

- Dexcom G7 continuous glucose monitor; Abbott FreeStyle Libre 3

- iRhythm Zio patch; VitalConnect VitalPatch; BioIntelliSense BioButton

- Masimo Radius PPG and other clinical-grade wireless sensors

What flows from these devices into the record are time-stamped Observations such as heart rate and rhythm strips, heart rate variability (HRV), SpO₂ (peripheral capillary oxygen saturation) and respiration rate, skin-temperature trends, sleep stages, daily activity and VO₂ max (maximal oxygen uptake) estimates, blood-glucose curves, intermittent readings from a blood pressure (BP) cuff, fall events, and short ECG snapshots.

Medical diagnostics AI then filters noise, normalizes against personal baselines, detects anomalies, and generates concise summaries or risk scores that a clinician can act on inside the visit note or in-basket.

What you will take away:

- Technical patterns: FHIR wearable integration for healthcare providers, SMART on FHIR apps, HL7 v2 device feeds, and safe write-backs.

- Compliance spine: HIPAA eligibility, BAAs (Business Associate Agreements), encryption, consent, redaction, and tamper-evident logs.

- Pilot playbook: a 30-60-90 program with gates for accuracy, safety, and throughput.

- KPIs that matter: review time, intervention lead time, alert quality, readmission-risk capture, and patient activation.

- Examples and templates you can adapt to your stack.

A quick scenario: a 68-year-old heart-failure patient wears a smartwatch, a connected BP cuff, and a wireless weight scale. Nightly HRV drops while resting heart rate rises, step count falls, and weight increases by 2 kg in 48 hours. The AI layer compares these signals to the patient’s baseline, validates data quality, and posts a concise “worsening status” summary to the EHR with a confidence score. The clinician receives a safe-action suggestion (televisit today, lab-draw order, medication-titration checklist), and the patient’s mobile app shows plain-language guidance plus a one-tap scheduling link. The result is earlier intervention, fewer false alarms, and a visit that starts with context rather than a blank screen.

U.S. Market Signals for AI Wearable Data Integration into EHRs

The timing is favorable for AI wearable data integration for EHR systems in the US because consumer adoption, reimbursement, and clinical workload all point in the same direction. Millions of Americans already wear trackers that produce continuous physiological streams. Medicare’s remote-patient-monitoring codes fund device-supported care and monthly data review. Clinicians need automation that translates raw signals into concise, defensible insights they can trust during a short follow-up visit.

Wearables shaping clinical programs in 2025–2026:

- Smartwatches that record ECGs and passively detect rhythm irregularities

- Smart rings tracking heart rate, HRV, temperature, sleep, and recovery

- Chest and adhesive patches streaming multi-lead rhythm, respiration, and mobility

- Continuous glucose monitors with minute-level curves and event markers

- Connected BP cuffs, scales, and pulse oximeters for at-home vitals

- Clinical sensor platforms for inpatient telemetry and step-down monitoring

There are three reasons why now is the moment to integrate wearable devices with EHR and AI. First, the data already exists at scale, so even a narrow use case like post-discharge surveillance can ride existing devices instead of waiting for new hardware.

Second, the EHR connectivity story is mature enough to start. SMART on FHIR embeds decision support in the chart. FHIR Observations carry normalized vitals. HL7 v2 feeds push admissions and results that keep the AI layer in sync. On the back end, these same interfaces can tie into custom medical billing software solutions so RPM artifacts—device-days, review minutes, and clinical actions—are captured automatically for compliant claims and cleaner audit trails.

Third, the AI role is additive. Models clean and align data across devices, tune to individual baselines, detect anomalies or trends that precede deterioration, and draft human-reviewable summaries so the signal reaches the right clinician without adding inbox noise.

Consider a health system piloting activity-based cardiac rehab at home. The smartwatch provides heart rate, exertion, and session compliance. A ring contributes sleep and recovery data. A patch adds rhythm precision during the first two weeks. AI fuses these signals, flags over-exertion or poor-recovery days, and posts a weekly snapshot to the EHR rehab flowsheet with a traffic-light status and brief rationale.

Patients see tailored coaching in the mobile experience and patient portal, delivered via custom patient portal software development that surfaces trends, reminders, and secure messages in plain language. Care managers see who needs a call, and cardiologists open the chart to a one-screen summary instead of scrolling through thousands of points. This is the practical edge of AI wearable data integration for EHR systems in the US: safer automation for clinicians, clearer guidance for patients, and measurable improvements that support value-based goals and downstream billing accuracy through integrated custom health insurance software.

Wearables to EHR and AI — Compact Reference

| Device type (examples) | Signals to EHR (typical FHIR) | How AI adds value |
|---|---|---|
| Smartwatch (Apple Watch, Samsung, Pixel, Garmin) | HR, HRV, single-lead ECG, SpO₂, respiration, steps, sleep → Observation; ECG summary → DiagnosticReport; device → Device | Rhythm irregularity flags, fatigue trends, early CHF decompensation risk, visit prep summaries |
| Smart ring (Oura, Galaxy Ring) | HR, HRV, temp deviation, sleep stages, recovery → Observation | Early illness detection from HRV/temp shift, recovery coaching, sleep-quality impact on BP/glucose |
| Adhesive patch (iRhythm Zio, VitalPatch, BioButton) | Multi-lead rhythm, RR, posture, activity, temp → Observation; analysis → DiagnosticReport | High-fidelity arrhythmia detection, safer post-discharge surveillance, fewer false positives vs wrist |
| CGM (Dexcom G7, Libre 3) | Minute-level glucose, hypo events, time-in-range → Observation | Predict excursions, titration check prompts, sensor failure detection, personalized diet nudges |
| Home vitals (BP cuff, scale, pulse oximeter) | Systolic/diastolic BP, weight, resting SpO₂ → Observation | Fluid-retention flags from weight + BP + HRV, prioritized outreach lists, measurement-quality checks |
| Clinical sensor platform (Masimo Radius PPG, etc.) | Continuous SpO₂, RR, pulse, motion → Observation | Deterioration prediction on the ward, step-down surveillance dashboards, safer escalation paths |

Data Plumbing 101: Standards and Connection Options

Think of your data plumbing like a subway map. Riders are signals from wearables, stations are EHR endpoints, and the tracks are open standards. If you want trains to arrive on time, you need clear routes for patient-generated health data (PGHD) EHR integration and predictable handoffs between systems.

Start with FHIR wearable integration for healthcare providers. The backbone resources are Patient, Device, Observation, and Encounter. Observations carry the time-stamped vitals and trends. Device records identify the watch, ring, patch, or sensor. Encounter adds context for where and when the data matters. Typical reads use filters like _lastUpdated and category=vital-signs. Typical writes create or update Observation with LOINC codes, link subject to the Patient, and reference the Device that produced the measure. Patient matching often combines MRN, date of birth, and internal enterprise identifiers. Provenance can record who or what created the data, which keeps audit reviewers happy.
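To make the write path concrete, here is a minimal sketch of a FHIR R4 Observation for one resting heart rate reading. The helper name `build_heart_rate_observation`, the patient and device IDs, and the timestamp are hypothetical placeholders; LOINC 8867-4 is the standard heart rate code, and the `vital-signs` category matches the read filter mentioned above. Your EHR vendor may require extra fields or profiles, so treat this as an illustration, not a conformance-tested payload.

```python
import json
from datetime import datetime, timezone


def build_heart_rate_observation(patient_id: str, device_id: str,
                                 bpm: float, taken_at: datetime) -> dict:
    """Return a FHIR R4 Observation dict for one heart-rate reading."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/observation-category",
                "code": "vital-signs",
            }]
        }],
        "code": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "8867-4",  # LOINC: heart rate
                "display": "Heart rate",
            }]
        },
        # Link the reading to the matched patient and the producing device.
        "subject": {"reference": f"Patient/{patient_id}"},
        "device": {"reference": f"Device/{device_id}"},
        "effectiveDateTime": taken_at.astimezone(timezone.utc).isoformat(),
        "valueQuantity": {
            "value": bpm,
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }


# Hypothetical IDs for illustration only.
obs = build_heart_rate_observation(
    "example-patient", "example-watch", 72,
    datetime(2025, 1, 15, 8, 30, tzinfo=timezone.utc),
)
print(json.dumps(obs, indent=2))
```

In a real pipeline this dict would be POSTed to the EHR's FHIR endpoint after patient matching, ideally alongside a Provenance resource that records the device cloud and transformation step.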

You still need classic pipes. An HL7 v2 device data interface for EHR integration uses ADT messages to tell the AI layer who is admitted, discharged, or transferred. ORU^R01 messages deliver unsolicited results like pulse oximetry events or home BP readings. Order–result loops appear when a patch or monitor is placed under an order. You might see ORM for the order and ORU for the resulting streams. An interface engine can translate HL7 v2 into FHIR so downstream services speak the same language.
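The HL7 v2 to FHIR translation an interface engine performs can be sketched in a few lines. The example below parses numeric OBX segments from a hand-written ORU^R01 message and emits Observation-shaped dicts. The function name `oru_to_observations`, the sample message, and the MRN are made up for illustration; the status mapping and the timezone-free timestamp are deliberate simplifications, and a production engine handles escaping, repeats, and many more segment types.

```python
from datetime import datetime


def oru_to_observations(message: str) -> list[dict]:
    """Convert numeric OBX segments of an ORU^R01 message into FHIR-style dicts."""
    # HL7 v2 separates segments with carriage returns and fields with pipes.
    segments = [line.split("|") for line in message.strip().split("\r") if line]
    mrn = None
    observations = []
    for fields in segments:
        if fields[0] == "PID":
            # PID-3 is the patient identifier list; the first component is the ID.
            mrn = fields[3].split("^")[0]
        elif fields[0] == "OBX" and fields[2] == "NM":
            # OBX-3 is code^display^coding-system.
            code, display, system = (fields[3].split("^") + ["", ""])[:3]
            taken_at = datetime.strptime(fields[14], "%Y%m%d%H%M%S")  # OBX-14
            observations.append({
                "resourceType": "Observation",
                # Simplified: only "F" (final) is mapped explicitly.
                "status": "final" if fields[11] == "F" else "preliminary",
                "code": {"coding": [{
                    "system": "http://loinc.org" if system == "LN" else system,
                    "code": code,
                    "display": display,
                }]},
                "subject": {"identifier": {"value": mrn}},
                "effectiveDateTime": taken_at.isoformat(),
                "valueQuantity": {"value": float(fields[5]), "unit": fields[6]},
            })
    return observations


# Hand-written sample message; all identifiers are fictional.
sample = "\r".join([
    "MSH|^~\\&|DEVICEHUB|HOME|EHR|HOSP|20250115083005||ORU^R01|MSG0001|P|2.5.1",
    "PID|1||MRN12345^^^HOSP^MR||DOE^JANE",
    "OBR|1|||59408-5^Oxygen saturation^LN",
    "OBX|1|NM|59408-5^Oxygen saturation in Arterial blood by Pulse oximetry^LN||96|%|||||F|||20250115083000",
])
result = oru_to_observations(sample)
print(result[0]["code"]["coding"][0]["code"], result[0]["valueQuantity"])
```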

To put insights in front of clinicians, launch a SMART on FHIR wearables app for clinicians from within the chart. SMART provides the patient and encounter context, OAuth scopes, such as patient/Observation.read and patient/Observation.write, and secure tokens with short lifetimes. The app can render trendlines, show a confidence score, and post a concise summary back into the note. That is how you integrate wearable device data into EHRs with AI intelligence without forcing clinicians to switch screens.
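For orientation, the first step of an EHR-initiated SMART launch is redirecting the browser to the authorization endpoint with the launch token and requested scopes. The sketch below only builds that URL. The endpoint, client ID, redirect URI, and FHIR base URL are hypothetical; a real app discovers the authorize endpoint from the server's `.well-known/smart-configuration` document and then exchanges the returned code for a short-lived token.

```python
import secrets
from urllib.parse import parse_qs, urlencode, urlparse


def build_authorize_url(authorize_endpoint: str, client_id: str,
                        redirect_uri: str, launch_token: str,
                        fhir_base_url: str) -> str:
    """Build the authorization redirect for an EHR-launched SMART app."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "launch": launch_token,  # opaque value passed by the EHR at launch
        "scope": "launch openid fhirUser "
                 "patient/Observation.read patient/Observation.write",
        "state": secrets.token_urlsafe(16),  # CSRF protection, verified on return
        "aud": fhir_base_url,  # the FHIR server the token will be used against
    }
    return f"{authorize_endpoint}?{urlencode(params)}"


# All values below are placeholders for illustration.
url = build_authorize_url(
    "https://ehr.example.org/oauth2/authorize",
    "wearables-summary-app",
    "https://app.example.org/callback",
    "launch-token-from-ehr",
    "https://ehr.example.org/fhir",
)
print(url)
```

Requesting only the Observation scopes the app needs keeps the token aligned with least-privilege access.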

Common Datapoints, FHIR Mapping, and Clinical Use

| Wearable datapoint | FHIR resource mapping | Example clinical use |
|---|---|---|
| Single-lead ECG snippet | Observation plus DiagnosticReport summary; Device linked | Rhythm review during tele-visit and AFib flag confirmation |
| SpO₂ and respiration rate | Observation with LOINC codes | Nocturnal desaturation tracking for COPD or sleep disorder follow-up |
| HRV and resting heart rate | Observation with trend profile | Recovery readiness after cardiac rehab or post-viral fatigue checks |
| Weight and home BP | Observation with Device reference | Fluid retention alerting in CHF and medication titration review |
| Glucose curve from CGM | Observation events and time-in-range | Hypoglycemia alerts and therapy adjustment planning |

From Sensors to the Chart: AI that Elevates Wearable Data in EHRs

Raw wearable streams are busy and imperfect. Motion artifacts, missed samples, outliers, and device quirks arrive right alongside valid measurements. The first job of the AI layer is to clean and align. A single-lead ECG is denoised and segmented before rhythm analysis. Minute-level glucose values are smoothed and tied to meals or activity. SpO₂ and respiration are checked against motion so desaturation alerts carry useful context instead of noise. This preprocessing makes AI anomaly detection for wearable vitals in EHRs far more reliable for arrhythmia flags, hypoglycemia alerts, and oxygen-desaturation warnings.
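A simplified version of the clean-and-align step for SpO₂ might look like the sketch below: reject implausible values, drop samples recorded during heavy motion, and smooth what remains with a small median window. The function name `clean_spo2`, the 0-to-1 motion score, and every threshold are illustrative assumptions, not clinically validated settings.

```python
from statistics import median


def clean_spo2(samples, motion_limit=0.5, radius=1, plausible=(70, 100)):
    """Gate SpO2 samples on plausibility and motion, then median-smooth.

    samples: list of (spo2_percent, motion_score) tuples, oldest first.
    Returns a list of the same length; rejected samples become None.
    """
    low, high = plausible
    kept = [
        value if low <= value <= high and motion <= motion_limit else None
        for value, motion in samples
    ]
    smoothed = []
    for i, value in enumerate(kept):
        if value is None:
            smoothed.append(None)
            continue
        # Median over the surviving neighbours within +/- radius samples.
        window = [v for v in kept[max(0, i - radius): i + radius + 1]
                  if v is not None]
        smoothed.append(median(window))
    return smoothed


readings = [(97, 0.1), (96, 0.1), (82, 0.9), (97, 0.2), (0, 0.1), (95, 0.1)]
cleaned = clean_spo2(readings)
print(cleaned)
```

Here the 82% reading is dropped because it coincides with high motion, and the 0% reading is dropped as a sensor dropout, so neither would trigger a desaturation alert on its own.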

Once the feed is trustworthy, forecasting and summarization turn data into decisions. AI predictive analytics from wearables in clinical workflows estimate short-horizon deterioration risk, readmission risk, and recovery progress from multi-signal trends tuned to each patient’s baseline rather than a one-size threshold. Clinicians then see a concise note in the EHR with a confidence score, a brief rationale, and a safe next action. Patients get clear guidance in the portal or mobile app.

To keep this practical, here is how the AI layer typically earns trust:

- Normalize, then detect. Align signals by time, remove outliers, and run validated detectors so alerts are rare and meaningful.

- Score risk on a short horizon. Give a 48–72 hour outlook with a confidence band so teams can act without guessing.

- Summarize for humans. Compress thousands of points into a few sentences, link back to the chart, and show trend deltas week over week.

- Coach the patient. Convert signals into simple actions in plain language inside your custom AI-integrated mobile patient app.

- Close the loop. Record outcomes, learn from clinician feedback, and tune thresholds over time.
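The "normalize, then detect" idea can be illustrated with a per-patient baseline check. The sketch below scores the latest resting heart rate against that patient's own recent history using a z-score. The function names and the 2.5 threshold are assumptions for illustration; validated detectors would use richer models and clinically tuned cutoffs.

```python
from statistics import mean, stdev


def baseline_zscore(history, latest):
    """How many standard deviations the latest value sits from the baseline."""
    sigma = stdev(history)
    if sigma == 0:
        return 0.0
    return (latest - mean(history)) / sigma


def flag_anomaly(history, latest, z_threshold=2.5):
    """Return the z-score and whether it crosses the (hypothetical) threshold."""
    z = baseline_zscore(history, latest)
    return {"z": round(z, 2), "flag": abs(z) >= z_threshold}


# One week of resting heart rate for a single patient (beats per minute).
resting_hr = [60, 62, 61, 59, 63, 60, 61]
print(flag_anomaly(resting_hr, 72))  # well above this patient's baseline
print(flag_anomaly(resting_hr, 61))  # within normal variation
```

The same 72 bpm reading would be unremarkable for a patient whose baseline sits near 70, which is the point of tuning to the individual instead of a global cutoff.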

These patterns apply across programs, from home monitoring to telemedicine software development services where visit prep is driven by a one-screen summary, and even into pharmaceutical software development services where adherence insights and physiology trends inform therapy adjustments without burying clinicians in graphs.

Building the Pipe: API-First, Aggregators, or In-House HIPAA Pipelines

Different teams take different roads to production. Pick the one that proves value fastest while protecting the core EHR.

Path A: Direct device cloud → EHR (API-first)

- What it is: Pull authorized data from device clouds, transform, then write FHIR Observation and DiagnosticReport records to the EHR. Common starters include Apple HealthKit and Fitbit connectors with an AI sidecar for cleaning and analysis.

- Why it works: Quick to launch, ideal for a narrow cohort or single specialty, easy to measure in a 30–60–90 day pilot.

- Use when: You need proof this quarter and can scope to a focused signal set. Excellent for post-discharge surveillance or activity-based rehab.

- Notes: Keep consent flows simple, enforce idempotent writes, and log every action with correlation IDs.

Path B: Aggregators + interface engine

- What it is: Use a data aggregator to unify OAuth consent, device APIs, and models, then route through an interface engine that converts HL7 v2 ORU messages to FHIR and throttles bursts.

- Why it works: One place to govern many devices at scale, a strong fit for multi-site programs and mixed hardware.

- Use when: You support diverse devices, need a centralized policy, and want fewer custom connectors to maintain.

- Notes: Define data quality rules in the engine, map LOINC consistently, and monitor latency end to end.

Path C: Your own HIPAA-compliant wearable data pipeline for hospitals

- What it is: You host ingestion, token vaults, transformations, AI services, and observability. You manage BAAs, encryption, PHI segregation, audit, and retention on your infrastructure.

- Why it works: Maximum control over consent, identity, and deep write-backs into flowsheets, notes, and orders.

- Use when: Governance is strict, integrations are deep, or you must align with existing security and data platforms.

- Notes: Stand up sandboxes, synthetic datasets, and rollback playbooks early. A SMART on FHIR wearables app for clinicians keeps in-workflow review consistent across all three paths.

How to choose quickly

- Need a fast win? Start with Path A for a tightly scoped pilot, then graduate to Path B as coverage grows.

- Running a multi-device, multi-site program from day one? Path B keeps connectors and policy manageable.

- Heavy compliance and deep EHR writes with custom rules? Commit to Path C and build once for long-term reuse.

All three approaches can coexist in a hybrid rollout. You might pilot with Path A in cardiology, adopt Path B to expand across service lines, then stand up Path C components where policy or scale demands full control. This sequencing lets you prove value early while laying the foundation for durable, enterprise-grade integrations.


Workflow Design: Getting Signals to the Right Place

Wearable streams help only when they show up where teams actually work. Map each signal to a destination, an owner, and a response rule, then test the flow with real clinicians before you scale.

Primary delivery channels:

- EHR inbox. Send a compact summary, not raw graphs. Include a risk score, the top three contributing signals, a two-sentence clinical rationale, and links to the underlying Observations. For example, “Rising resting HR, falling HRV, and a 2 kg weight increase in 48 hours suggest fluid retention. Recommend tele-visit today and labs within 24 hours.”

- Clinician mobile. Provide a one-screen alert card for on-call decisions. Show the same score and rationale, plus safe actions like “call patient,” “place lab order set,” or “schedule video visit.” Keep taps to a minimum.

- Population dashboards. Rank cohorts by risk and list why each patient is on the board. Add filters for specialty, device type, and data freshness so managers can plan staffing and outreach.

- Care-manager queues. Present prioritized call lists with scripts that reflect the alert type. If the signal suggests post-surgical over-exertion, the script asks about pain, swelling, and medication adherence, then offers a same-day visit slot.

- Patient portal or mobile app. Translate analytics into plain language and a small set of actions. “Your overnight oxygen dipped several times. Please use the oximeter again tonight and answer two questions before 10 a.m.”

Alert hygiene that avoids fatigue:

- Tune thresholds to the individual baseline rather than applying one global cutoff.

- Add cooldown windows so a single event does not trigger duplicates.

- Define an escalation ladder: gentle nudge, care-manager review, clinician review, urgent contact.

- Gate any high-risk action behind human confirmation and record who approved it.

- Stamp every decision with a correlation ID so audit and quality teams can replay the sequence.
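Cooldown windows and an escalation ladder can be combined in a small gate, sketched below. The class name `AlertGate`, the rung names, and the rule that each repeat after the cooldown climbs one rung are assumptions made for this example; your own policy may escalate on severity instead of repetition.

```python
from datetime import datetime, timedelta

# Rungs mirror the ladder above: nudge, care manager, clinician, urgent contact.
ESCALATION_LADDER = [
    "patient_nudge",
    "care_manager_review",
    "clinician_review",
    "urgent_contact",
]


class AlertGate:
    """Suppress duplicates inside a cooldown window and escalate repeats."""

    def __init__(self, cooldown: timedelta):
        self.cooldown = cooldown
        self._last_sent = {}  # (patient_id, alert_type) -> datetime
        self._level = {}      # (patient_id, alert_type) -> ladder index

    def submit(self, patient_id: str, alert_type: str, at: datetime):
        """Return the ladder rung to notify, or None if suppressed."""
        key = (patient_id, alert_type)
        last = self._last_sent.get(key)
        if last is not None and at - last < self.cooldown:
            return None  # still cooling down: treat as a duplicate
        level = min(self._level.get(key, -1) + 1, len(ESCALATION_LADDER) - 1)
        self._last_sent[key] = at
        self._level[key] = level
        return ESCALATION_LADDER[level]


gate = AlertGate(cooldown=timedelta(hours=6))
t0 = datetime(2025, 3, 1, 8, 0)
print(gate.submit("patient-1", "spo2_dip", t0))                       # first alert
print(gate.submit("patient-1", "spo2_dip", t0 + timedelta(hours=1)))  # suppressed
print(gate.submit("patient-1", "spo2_dip", t0 + timedelta(hours=7)))  # escalated
```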

“Good alerting should feel quiet most of the time. The point is one clear next step with a short explanation that a human can verify in seconds.”

Slava K., CEO, TATEEDA

Routing cheat sheet:

| Destination | What to send | Who acts | Cadence |
|---|---|---|---|
| EHR inbox | Weekly summary plus new high-risk flags with rationale | Assigned clinician | Weekly and event-driven |
| Clinician mobile | Urgent alert cards with safe actions | On-call provider | Event-driven |
| Care-manager queue | Ranked outreach list with call scripts | RN care team | Daily |
| Population dashboard | Cohort trends and program KPIs | Service-line leaders | Weekly |
| Patient app/portal | Plain-language tips, reminders, secure messages | Patient or caregiver | Daily or as needed |

Pilot First: 30–60–90 Days to Integrate Wearable Device Data into EHRs with AI Intelligence

Small, real, and measured beats grand and vague. Pick one use case, wire the data correctly, and let the numbers decide whether to expand.

Step-by-Step Action Plan for AI Wearable Data Integration in U.S. EHR Systems

- Day 1–30: sandbox and safety

  - Load a synthetic dataset that mirrors your target cohort, including common edge cases.

  - Stand up device connections and FHIR writes in a test tenant and verify that Observations, Device, and Provenance are complete.

  - Document HIPAA controls, patient consent, encryption, and audit flows.

  - Dry-run alerts with clinicians and capture what they would want to see on screen.

- Day 31–60: limited live cohort

  - Enroll 50–150 patients in one specialty.

  - Turn on weekly summaries, targeted alerts, care-manager queues, and patient messages.

  - Hold short huddles each week to adjust thresholds, wording, and escalation rules based on actual experience.

- Day 61–90: decide and scale

  - Compare a clean KPI snapshot to baseline.

  - Choose to expand, refine, or pause.

  - Write runbooks, change control steps, and rollback procedures for production.

KPI Menu for Wearable-to-EHR AI Programs: Practical Targets that Prove Value

- Time to review per alert (aim to move from 8 minutes to 3 minutes).

- Intervention lead time (hours between alert and action).

- Documentation time saved in follow-up visits.

- Readmission or ED utilization deltas for the test cohort.

- Alert quality, broken out by type, with a target true-positive rate.

- Patient activation, measured by task completion in the app or portal.
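Several of these KPIs fall out of a simple alert log. The sketch below computes median review time, median intervention lead time, and the share of alerts a clinician confirmed. The record fields (`alert_at`, `action_at`, `review_seconds`, `confirmed`) and the sample data are hypothetical; adapt them to whatever your audit trail actually stores.

```python
from datetime import datetime
from statistics import median


def kpi_snapshot(alerts):
    """Summarize review time, lead time, and alert quality for a cohort."""
    review_minutes = [a["review_seconds"] / 60 for a in alerts]
    # Lead time only counts alerts that resulted in a recorded action.
    lead_hours = [
        (a["action_at"] - a["alert_at"]).total_seconds() / 3600
        for a in alerts if a["action_at"] is not None
    ]
    confirmed = sum(1 for a in alerts if a["confirmed"])
    return {
        "median_review_minutes": median(review_minutes),
        "median_lead_hours": median(lead_hours) if lead_hours else None,
        "confirmed_fraction": round(confirmed / len(alerts), 2),
    }


# Fabricated sample records for illustration only.
alert_log = [
    {"alert_at": datetime(2025, 3, 1, 8), "action_at": datetime(2025, 3, 1, 10),
     "review_seconds": 180, "confirmed": True},
    {"alert_at": datetime(2025, 3, 2, 9), "action_at": datetime(2025, 3, 2, 15),
     "review_seconds": 240, "confirmed": True},
    {"alert_at": datetime(2025, 3, 3, 7), "action_at": None,
     "review_seconds": 300, "confirmed": False},
]
snapshot = kpi_snapshot(alert_log)
print(snapshot)
```

Running the same function on the pre-pilot baseline and on the day-90 cohort gives the side-by-side comparison the decision gate needs.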

Funding the Work: RPM CPT Codes for AI Wearable Data Integration in the U.S.

The core RPM codes are 99453 for device setup and patient education, 99454 for device supply and data transmission per 30 days, 99457 and 99458 for monthly clinician review and management time, and 99091 for physician data interpretation time. Always confirm payer policy and local rules before launch.

“Prove impact in weeks, not quarters. Put a narrow use case into the world, measure it, then widen the path once the story writes itself.”

Slava K., CEO, TATEEDA

Architecture Blueprint for AI Wearable-to-EHR Integration: Build Around the Legacy Core

Do not carve into the EHR core. Attach intelligence at the edges so the chart stays stable while your assistant gets smarter.

Sidecar pattern, in practice:

- Ingest device data through secure APIs.

- Normalize units and timestamps, then align signals by time.

- Run detection and short-horizon risk models that are tuned to each patient.

- Write back via FHIR Observations and compact DiagnosticReports.

- Use HL7 v2 for admissions, discharges, and results so the AI layer stays in clinical context.

- Offer a SMART on FHIR app for in-workflow review and note insertion.

Reliability and safety controls:

- Idempotent writes. Use a request key so repeats never duplicate a record.

- Retries with backoff. Short spikes and brief outages do not drop data.

- Dead-letter queues. Any failed event goes to a monitored lane for human review.

- PHI separation. Analytics stores never keep identifiers.

- Tamper-evident audit. Export logs to write-once storage.

- Least-privilege identities. OAuth 2.0 or OIDC scopes are as small as possible.
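The first three controls fit together naturally, as the sketch below shows: a content-derived request key makes writes idempotent, retries use exponential backoff, and anything that still fails lands in a dead-letter list. The names `write_with_retry`, `TransientError`, and the injected `send` callable are hypothetical stand-ins for your FHIR client; on the server side, FHIR's conditional create (the `If-None-Exist` header) is one standard way to enforce the same guarantee.

```python
import hashlib
import json
import time


class TransientError(Exception):
    """Raised by the sender for retryable failures such as timeouts."""


def idempotency_key(observation: dict) -> str:
    """Derive a stable key from the payload so repeats map to one record."""
    canonical = json.dumps(observation, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def write_with_retry(observation, send, seen_keys, dead_letters,
                     max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Write once, retry transient failures with backoff, dead-letter the rest."""
    key = idempotency_key(observation)
    if key in seen_keys:
        return "duplicate"
    for attempt in range(max_attempts):
        try:
            send(observation, key)
            seen_keys.add(key)
            return "written"
        except TransientError:
            if attempt < max_attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    dead_letters.append({"key": key, "payload": observation})
    return "dead_lettered"


# Simulated sender that fails twice, then succeeds.
calls = {"n": 0}


def flaky_send(observation, key):
    calls["n"] += 1
    if calls["n"] <= 2:
        raise TransientError("simulated timeout")


delays, seen, dlq = [], set(), []
reading = {"resourceType": "Observation", "id": "demo-1"}
first = write_with_retry(reading, flaky_send, seen, dlq, sleep=delays.append)
second = write_with_retry(reading, flaky_send, seen, dlq, sleep=delays.append)
print(first, second, delays)
```

The in-memory `seen_keys` set is only for demonstration; a shared store is needed once more than one worker writes to the EHR.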

Observability that people use:

- A correlation ID travels from ingest to EHR write to human action.

- Dashboards show latency, error rate, and backlog per connector.

- Traces for every alert include who reviewed it, what action was taken, and when.

Compact architecture map:

| Component | Purpose | Notes |
|---|---|---|
| Ingest API | Pull authorized device data | Token vault, consent checks |
| Transformer | Normalize and validate | LOINC mapping, unit conversion, clock drift correction |
| AI services | Detect and predict | Baseline per patient, short-horizon risk |
| Writer | FHIR and HL7 output | Idempotency, retries, rate limits |
| SMART app | Clinician UI | Launch context, short-lived tokens, note insertion |
| Monitor | Logs and metrics | Dashboards, alerts, audits, traces |

Clinical and Operational Use Cases: From Wearable Signals to EHR Decisions with AI

Pick scenarios that change a decision at the point of care or reduce time on routine work. Then layer in complexity once teams trust the results.

Clinical:

- Ambient notes that include wearable vitals. The progress note opens with two sentences that summarize the last seven days and the three strongest contributors. Example: “Resting HR increased 12 percent, HRV fell 18 percent, and weight rose 2 kg over 48 hours. These changes started after the diuretic dose reduction.” The note links to each Observation and provides a small sparkline so the physician can confirm the pattern without hunting.

- Arrhythmia triage that prioritizes scarce attention. Wrist ECG snippets are denoised, checked for irregular rhythm, and placed into a cardiology review queue with a confidence score and a list of features that drove the decision. The cardiologist sees ten patients sorted by clinical risk instead of hundreds of raw strips.

- Imaging follow-up assist. Recovery signals that lag expectations trigger a nudge to review prior imaging or schedule a follow-up. The assistant proposes an order set and documents why it was suggested.

Operations:

- Medication prompts tied to physiology. If wearable patterns indicate poor recovery or nighttime dips in oxygen, the system schedules a coaching touch and reminds the patient to confirm dosage timing.

- Activity-based discharge readiness. When real-world mobility improves over several days, the pain score trend stabilizes, and no desaturation events are recorded, the dashboard moves the patient to “review for discharge,” which can safely shorten the length of stay.

- Supply and service planning. Device usage patterns indicate battery or sensor replacement is due next week, which avoids gaps in monitoring.

Access and engagement:

- EHR mobile app integration for wearables and AI. Patients see simple cards that explain what changed, why it matters, and what to do next.

- Telemedicine intake with pre-loaded trends. The video visit opens with a one-screen summary so both sides start on the same page.

“Start where one new signal can change what a clinician does today. After that, add depth and guardrails as trust grows.”

Slava K., CEO, TATEEDA

VentriLink: Where We Started, and How AI Would Lift It Further

VentriLink provides a wireless heart-monitoring sensor that streams ECG to a server. TATEEDA built a cross-platform tablet app so clinicians can view cardiograms with professional tools. The app synchronizes with the server, visualizes strips, identifies events such as arrhythmia and tachycardia, supports notifications and history, and runs well across major tablets. The system has been used in hospitals, and the client returned for additional work based on early success.

How to extend VentriLink with AI and open standards:

- Standards mapping. Represent ECG segments as FHIR Observations and publish concise DiagnosticReports that summarize findings. Use HL7 v2 ORU events so inpatient teams see relevant results without leaving their routine.

- AI detection with explainability. Clean and segment the ECG, detect irregular rhythms, and present a confidence score alongside a short explanation. For example, “Irregular RR intervals with absent P waves in 43 percent of beats across a two-minute window.”

- In-workflow viewing. Launch a SMART on FHIR app inside the EHR so cardiologists see summaries and can click through to the full strip without switching systems.

- Patient experience. Provide plain-language insight in the mobile app, along with clear next steps and simple self-checks.

- Evidence for billing and quality. Log device days, review time, and human actions so the program can demonstrate value and support standard reporting.
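To show what "detection with explainability" can mean at its simplest, the sketch below scores rhythm irregularity from R-peak timestamps and returns a plain-language rationale next to the flag. It is an assumption-laden toy: the function name, the coefficient-of-variation feature, and the 10 percent threshold are illustrative, it does not look at P waves, and it is not a validated arrhythmia detector.

```python
from statistics import mean, stdev


def explain_rr_irregularity(r_peak_times_s, cv_threshold=0.10):
    """Flag irregular rhythm from R-peak times (seconds) and explain why."""
    # RR intervals are the gaps between consecutive R peaks.
    rr = [b - a for a, b in zip(r_peak_times_s, r_peak_times_s[1:])]
    if len(rr) < 2:
        raise ValueError("need at least three R peaks")
    cv = stdev(rr) / mean(rr)  # coefficient of variation of RR intervals
    irregular = cv > cv_threshold
    return {
        "irregular": irregular,
        "rr_cv": round(cv, 3),
        "mean_hr_bpm": round(60 / mean(rr)),
        "explanation": (
            f"RR interval variation is {cv:.0%} of the mean across "
            f"{len(rr)} beats "
            f"({'above' if irregular else 'within'} the "
            f"{cv_threshold:.0%} threshold)."
        ),
    }


steady = explain_rr_irregularity([0.0, 0.8, 1.6, 2.4, 3.2, 4.0])
erratic = explain_rr_irregularity([0.0, 0.6, 1.5, 1.9, 3.0, 3.5])
print(steady["explanation"])
print(erratic["explanation"])
```

Returning the feature values with the flag is what lets a cardiologist verify the reasoning in seconds instead of trusting an opaque score.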

Illustrative flow:

| Step | What happens | Who benefits |
|---|---|---|
| 1 | ECG segments are mapped to FHIR Observations and HL7 v2 ORU events | IT, compliance |
| 2 | AI flags irregular rhythm with an explanation of the features used | Cardiologist |
| 3 | Summary appears in the EHR inbox and SMART viewer, with safe actions | Clinician |
| 4 | Patient receives guidance and a one-tap link to schedule follow-up | Patient |
| 5 | Program metrics record review time and outcomes for leadership | Clinical ops and finance |

Six steps to upgrade VentriLink in a controlled way:

- Wire FHIR and HL7 events in a sandbox and validate with synthetic ECGs.

- Add AI detection with denoising and beat segmentation, then test on historical data.

- Launch a SMART app in the EHR test tenant and collect feedback from cardiology.

- Run a 90-day live pilot with a limited cohort, and track alert quality and intervention timing.

- Turn on program metrics and monthly dashboards for leaders.

- Expand by site or specialty once KPIs hold and support processes are in place.
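The first two steps (validate with synthetic ECGs, then add detection) can be rehearsed with very little code. The sketch below generates synthetic R-peak timestamps and applies a toy RR-interval irregularity check that returns a plain-language explanation. It is a simple heuristic for sandbox testing under assumed thresholds (15% change, 30% of beats), not a clinical AFib detector, and it does not look at P waves or do any denoising.

```python
import random
import statistics


def synthetic_r_peaks(n_beats, mean_rr_s, jitter_s, seed=0):
    """Return R-peak timestamps (seconds) with uniform jitter around mean_rr_s."""
    rng = random.Random(seed)
    t, peaks = 0.0, []
    for _ in range(n_beats):
        peaks.append(t)
        t += mean_rr_s + rng.uniform(-jitter_s, jitter_s)
    return peaks


def rr_irregularity(peaks, rel_threshold=0.15, min_fraction=0.3):
    """Flag a rhythm as irregular when many successive RR intervals differ strongly.

    rel_threshold and min_fraction are illustrative defaults, not validated cutoffs.
    """
    rr = [b - a for a, b in zip(peaks, peaks[1:])]
    if len(rr) < 2:
        raise ValueError("need at least three R peaks")
    median_rr = statistics.median(rr)
    # Beat-to-beat change in RR interval, compared against a share of the median RR
    diffs = [abs(b - a) for a, b in zip(rr, rr[1:])]
    fraction = sum(d > rel_threshold * median_rr for d in diffs) / len(diffs)
    return {
        "irregular": fraction >= min_fraction,
        "fraction": fraction,
        "explanation": (
            f"{fraction:.0%} of successive RR intervals changed by more than "
            f"{rel_threshold:.0%} of the median RR ({median_rr * 1000:.0f} ms)"
        ),
    }


if __name__ == "__main__":
    steady = synthetic_r_peaks(120, mean_rr_s=0.8, jitter_s=0.01)
    erratic = synthetic_r_peaks(120, mean_rr_s=0.8, jitter_s=0.35)
    for label, peaks in (("steady", steady), ("erratic", erratic)):
        result = rr_irregularity(peaks)
        print(label, result["irregular"], "-", result["explanation"])
```

Running the same check against historical strips with known labels gives you a first read on alert quality before any model reaches the EHR test tenant.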

If you want help turning this into a working program, we can map destinations, wire standards, and set up a pilot that proves value without disturbing the core systems you already rely on.

Cloud AI Platforms That Plug Into EHRs and Device Streams

Below are widely used cloud options that support HIPAA-eligible deployments, FHIR or HL7 integration, and AI workflows. You will still need a signed BAA and private networking to keep PHI controlled.

- AWS:

- Amazon HealthLake (managed FHIR store), HealthLake Imaging (DICOM at scale), SageMaker (ML training and inference), Bedrock (generative AI), IoT Core for device ingestion, and Kinesis for streaming.

- Typical wiring: devices → IoT Core or HTTPS → Kinesis → transform to FHIR → HealthLake → results back to the EHR via FHIR Observation or HL7 v2 through an interface engine.

- Microsoft Azure:

- Azure Health Data Services (FHIR, DICOM, and MedTech service for device signals), Azure AI Foundry and Azure OpenAI Service for LLMs, Azure Machine Learning for custom models, Event Hubs for streaming, and IoT Hub for devices.

- Typical wiring: wearables → IoT Hub → MedTech service → FHIR service → SMART on FHIR app inside the EHR; AI scoring runs in Azure ML or Azure OpenAI and writes summarized results as DiagnosticReport.

- Google Cloud:

- Cloud Healthcare API (FHIR, HL7 v2, DICOM), Vertex AI (ML and generative AI), Pub/Sub, and Dataflow for streaming and transforms.

- Typical wiring: device data → Pub/Sub → Dataflow normalization → Cloud Healthcare API (FHIR store) → EHR via FHIR REST; AI runs in Vertex AI and posts risk scores or summaries to Observation or DiagnosticReport.

- Oracle Cloud + Oracle Health:

- OCI Data Science for model training and deployment, Oracle Health FHIR APIs for EHR connectivity, Streaming for event pipelines, API Gateway for secure ingress.

- Typical wiring: device streams → OCI Streaming → FHIR mapping service → Oracle Health FHIR endpoints; AI inference in OCI posts back to the chart and to clinician inbox workflows.

- IBM:

- watsonx.ai and watsonx.data for model lifecycle and governed storage, IBM FHIR Server (open-source, cloud-deployable), and Event Streams (Kafka) for ingestion.

- Typical wiring: Kafka ingest → transform → IBM FHIR Server → SMART-on-FHIR UI; AI summaries generated in watsonx.ai with provenance recorded for audit.

- Salesforce:

- Health Cloud with Data Cloud for Healthcare and Einstein for AI; MuleSoft provides FHIR or HL7 bridges to primary EHRs.

- Typical wiring: device vendor APIs → MuleSoft → FHIR objects in Health Cloud → clinician-facing records and care plans; EHR remains the system of record, with bidirectional sync where allowed.
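Every wiring above has a "transform to FHIR" hop, and streams get replayed, so that hop should be safe to run twice. Here is a cloud-neutral sketch that turns heart-rate readings into a FHIR transaction Bundle using conditional create (`ifNoneExist`), so a reading that already exists in the store is not duplicated. The input field names (`reading_id`, `patient_id`, `epoch_s`, `bpm`) and the identifier system URL are assumptions for illustration; your device vendor's payload will differ.

```python
from datetime import datetime, timezone

# Hypothetical identifier namespace for device-issued reading IDs.
IDENTIFIER_SYSTEM = "https://example.org/wearable-reading-id"


def reading_to_entry(reading):
    """Map one normalized heart-rate reading to a transaction Bundle entry."""
    observation = {
        "resourceType": "Observation",
        "status": "final",
        "identifier": [{"system": IDENTIFIER_SYSTEM, "value": reading["reading_id"]}],
        "category": [{"coding": [{
            "system": "http://terminology.hl7.org/CodeSystem/observation-category",
            "code": "vital-signs",
        }]}],
        "code": {"coding": [{"system": "http://loinc.org", "code": "8867-4",
                             "display": "Heart rate"}]},
        "subject": {"reference": f"Patient/{reading['patient_id']}"},
        "effectiveDateTime": datetime.fromtimestamp(
            reading["epoch_s"], tz=timezone.utc).isoformat(),
        "valueQuantity": {"value": reading["bpm"], "unit": "beats/minute",
                          "system": "http://unitsofmeasure.org", "code": "/min"},
    }
    return {
        "resource": observation,
        "request": {
            "method": "POST",
            "url": "Observation",
            # Conditional create: the server skips the POST if this identifier exists.
            "ifNoneExist": f"identifier={IDENTIFIER_SYSTEM}|{reading['reading_id']}",
        },
    }


def build_transaction(readings):
    """Wrap readings in a FHIR transaction Bundle ready to POST to the store's base URL."""
    return {
        "resourceType": "Bundle",
        "type": "transaction",
        "entry": [reading_to_entry(r) for r in readings],
    }


if __name__ == "__main__":
    bundle = build_transaction([
        {"reading_id": "abc-001", "patient_id": "example-patient",
         "epoch_s": 1700000000, "bpm": 72},
    ])
    print(len(bundle["entry"]), bundle["entry"][0]["request"]["ifNoneExist"])
```

The same Bundle shape should be accepted by any FHIR R4 store that supports transactions and conditional create, but confirm both in the capability statement of the service you choose.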

Integration notes that keep projects on track:

- Use a managed FHIR store as the system of record for wearable Observations, Device metadata, and clinical context.

- Bridge HL7 v2 via interface engines when the EHR prefers ORU results or when orders drive device placement.

- Run AI in the same cloud boundary as PHI, then write back only minimal, explainable results with Provenance recorded.

- Present insight inside workflow using a SMART on FHIR app with least-privilege OAuth or OIDC scopes.

- Lock down traffic with private endpoints, VPC peering, encryption in transit and at rest, and a signed BAA for every covered service.
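As one way to honor the "Provenance recorded" note, the sketch below builds a FHIR R4 Provenance resource that ties an AI-written result back to the source Observations and to the model that produced it. The resource references and the `Device/example-risk-model` ID are hypothetical; how you register the model as a Device and which agent type you use are local decisions.

```python
from datetime import datetime, timezone

PARTICIPANT_TYPE_SYSTEM = "http://terminology.hl7.org/CodeSystem/provenance-participant-type"


def build_provenance(target_ref, source_refs, model_device_ref, recorded=None):
    """Build a FHIR R4 Provenance dict for an AI-generated resource.

    target_ref       -- the written-back result, e.g. "DiagnosticReport/123"
    source_refs      -- the wearable Observations the model read
    model_device_ref -- a Device resource representing the model (assumed to exist)
    """
    if not source_refs:
        raise ValueError("at least one source reference is required")
    recorded = recorded or datetime.now(timezone.utc)
    return {
        "resourceType": "Provenance",
        "target": [{"reference": target_ref}],
        "recorded": recorded.isoformat(),
        "agent": [{
            # "assembler" marks software that generated the artifact from existing data
            "type": {"coding": [{"system": PARTICIPANT_TYPE_SYSTEM, "code": "assembler"}]},
            "who": {"reference": model_device_ref},
        }],
        "entity": [{"role": "source", "what": {"reference": ref}} for ref in source_refs],
    }


if __name__ == "__main__":
    prov = build_provenance(
        "DiagnosticReport/example-report",
        ["Observation/example-ecg-1", "Observation/example-ecg-2"],
        "Device/example-risk-model",
    )
    print(prov["target"], len(prov["entity"]))
```

Posting the Provenance in the same transaction as the result keeps the audit trail from drifting out of sync with what clinicians see.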

Final Word: Turn Wearable Signals into EHR Decisions with AI

If you’ve read this far, you have the outline to move from wearable streams to clinician-ready insight. We covered the “why now” for U.S. providers and device makers; the data plumbing that actually ships (FHIR resources, HL7 v2 eventing, SMART on FHIR in-workflow apps); the AI layer that cleans, detects, predicts, and summarizes; practical integration paths from quick API pilots to enterprise pipelines; a pilot-first plan with clear KPIs; and workflow design that gets the right signal to the right person at the right time. We also showed how a real project like VentriLink can step up with standards, explainable detection, and in-chart viewing that saves time without adding noise.

What this adds up to is simple: start with a narrow use case that changes a decision today, wire standards so the data is portable, put AI where it earns trust, and measure outcomes that leaders care about. Build around the legacy core, not through it. Keep consent, identity, and audit transparent so security teams sleep at night. Expand once the numbers prove the story.

What TATEEDA brings to your program:

- Hands-on integration and systems engineering: FHIR reads and writes for Patient, Observation, Device, and Encounter; HL7 v2 ADT and ORU bridges; SMART on FHIR launches with correct scopes; eventing, queues, idempotency, and fault isolation so pipelines stay reliable.

- Full-stack coding: backend services in .NET, Java, Node.js, Python, or Go; frontends in React or Angular; native iOS and Android; API-first designs with clean contracts and versioning.

- Database engineering and programming: relational and NoSQL schema design, FHIR stores, time-series tables for signals, indexing and partitioning, stored procedures where appropriate, and ETL jobs with data lineage and quality checks.

- Connector development: device and cloud adapters for Apple HealthKit, Fitbit, Dexcom, and clinical sensor platforms; EHR connectivity for Epic, Oracle Health (Cerner), and athenahealth; interface engines; custom bridges for HL7 v2, FHIR, and proprietary APIs.

- Testing and QA at multiple levels: unit and integration tests, contract tests for FHIR resources, synthetic data sets for edge cases, performance and soak tests, security testing, and model validation for AI outputs.

- Production AI habits: preprocessing that reduces false alerts, short-horizon risk scoring, human-readable summaries, safe actions, and feedback loops that learn from clinician decisions.

- DevOps and site reliability: CI/CD, infrastructure as code, blue-green and canary releases, logging, tracing with correlation IDs, and clear incident runbooks.

- Security and compliance discipline: HIPAA controls, BAAs, encryption in transit and at rest, key management, role-based access, redaction, tamper-evident audit, and periodic access reviews.

- A pilot that lands: a 30–60–90 plan, KPIs such as review time and intervention lead time, and a sequenced path from one specialty to many without disrupting daily operations.