Nearshore AI Development Team Services: A Practical Guide for U.S. Companies

Are you weighing speed, cost, and talent—and wondering which path actually gets your AI project shipped? This piece explains why nearshore AI software development is gaining traction in the U.S.

If you’re asking how to start nearshoring your custom AI software development the right way, we’ll walk through scope, data access, and collaboration so you avoid false starts.

And because next steps matter, you’ll get a practical roadmap to nearshoring AI software projects that you can hand to your team and use immediately.

| ⚠️ If you need immediate help with a custom AI assistant or chatbot development project, contact our representatives today. |

According to McKinsey’s latest survey, 78% of organizations now apply AI in at least one function. That scale of usage is a big reason U.S. teams choose nearshore AI development when they need progress without ballooning costs.

This isn’t only an enterprise story. A U.S. Chamber report shows 58% of small businesses are already using generative AI, and the full 2025 report notes 82% of those adopters expanded headcount. For many, a custom nearshore AI team extension for data science and MLOps becomes the practical bridge from pilot to production.

Economics are bending in your favor. Stanford highlights a 280× drop in GPT-3.5-level inference costs since late 2022—shorter cycles, cheaper experiments, fewer stalled sprints. That’s fuel for nearshore execution, where tight time-zone overlap compounds speed.

Hardware is catching up, too. AWS says Trainium2 delivers up to 4× faster training than first-generation Trainium and roughly 30 to 40 percent better price-performance than comparable GPU-based instances, which shortens experimentation cycles and cuts compute spend for teams working across different time zones.

This guide also addresses whether nearshoring AI projects is safe for healthcare data, outlining the safeguards, contracts, and audits that keep PHI under control. It then explains how nearshore AI software development differs from typical outsourcing, with an emphasis on tighter collaboration windows and faster iteration for model work. Finally, you will get a clear roadmap to nearshoring AI software projects that shows how to evaluate partners, define scope, and set milestones that move from pilot to production without surprises.

| 🤔 How much can AI actually lift team output—enough to justify nearshore AI software development for U.S. companies focused on fast, same-day iteration? A large field study of 5,179 support agents showed a 14% productivity increase on average, and 34% for less-experienced staff when a gen-AI assistant was introduced, evidence that structured AI plus tight feedback cycles can narrow skill gaps quickly. |

Why TATEEDA Is Qualified to Guide Nearshore AI Development for U.S. Companies

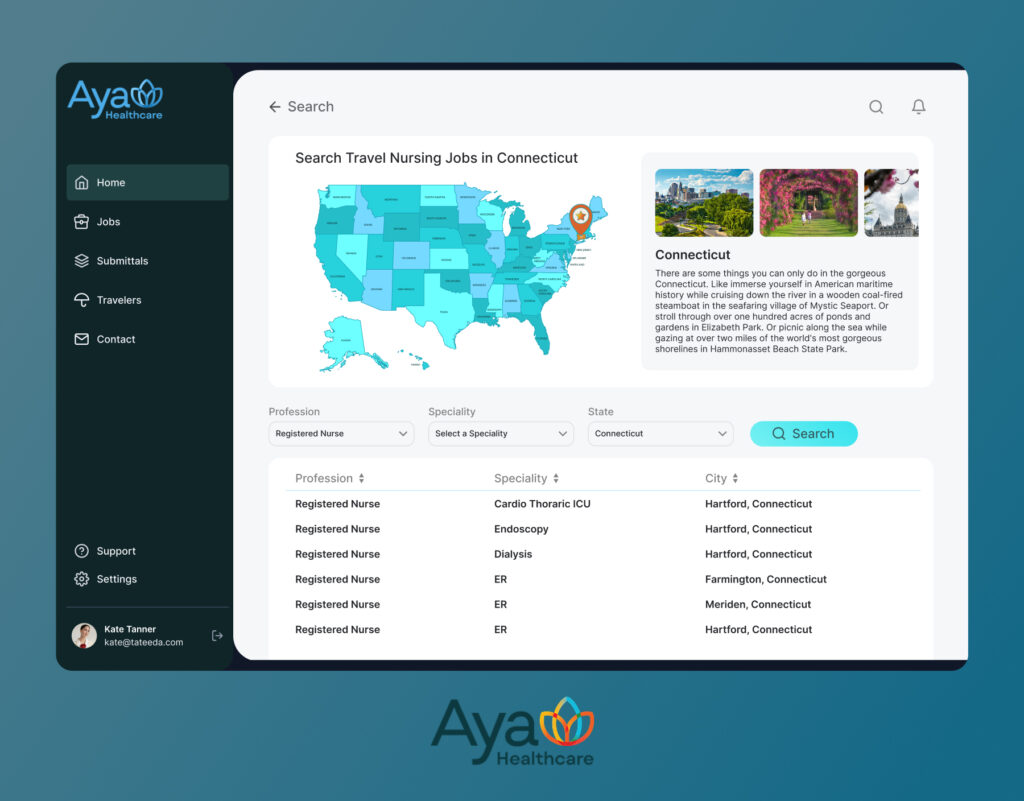

Since 2013, TATEEDA has shipped healthcare and data-intensive platforms as a custom software development company in San Diego, supported by senior nearshore engineers across LATAM and Europe. A flagship engagement is AYA Healthcare, one of the largest U.S. travel-nurse staffing providers. We helped operate a high-throughput platform that serves thousands of users daily under HIPAA. That real-world scale now powers our custom healthcare software development services in the US for AI assistants, AI agents, and AI analytics that plug into modern clinical and operational workflows.

What this means for your AI program:

- AI assistants and agents, built for work: recruiters and staff copilots, chart or document summarizers, task routers, anomaly flags, and triage flows. Patterns from AYA’s NLP intake, ranking and matching, and timekeeping checks translate into patient, member, or back-office assistants that shorten cycle time and reduce rework.

- Data governance and compliance at scale: credential capture, renewals, audit trails, and role-based access carried over to PHI safeguards, consent handling, immutable logs, and least-privilege controls.

- Mobile experience that sticks: self-service onboarding, notifications, and scheduling refined on large user bases become patient reminders, symptom check-ins, secure messaging, and visit summaries in healthcare apps.

- Integration depth, not surface glue: proven links to third-party systems and payers inform FHIR R4 read or write, HL7 v2 feeds, SMART on FHIR app launches, and identity via OAuth 2.0 or OIDC. The same approach connects device clouds, wearable streams, and EHR or EMR endpoints.

Result: a nearshore AI delivery model that ships secure, measurable outcomes. If your next step is rolling out AI assistants, AI agents, or AI analytics across clinical or back-office use cases, we bring the habits learned on AYA’s at-scale travel-nurse staffing platform: clear interfaces, testable KPIs, privacy controls, and automation that is safe to run in production.

Nearshore AI Services You Can Put to Work

Bring practical AI into your stack with nearshore teams that ship quickly and fit U.S. time zones. We focus on clear outcomes, tight security, and clean handoffs to your product and IT groups.

| 🧩 Did you know that by 2026, more than 80% of enterprises will use generative AI APIs or deploy GenAI apps (up from under 5% in 2023)? That surge is exactly why nearshore capacity in U.S.–friendly time zones is becoming a go-to for fast iteration and safe delivery. |

- Integrate AI into legacy systems to upgrade obsolete codebases, refactor brittle modules, and add smart features that meet today’s needs. Think API-first patterns, safer PHI handling, and performance tuning that keeps existing workflows intact.

- Build AI agents that automate routine tasks such as document intake, triage, data entry, claim checks, and routing. Multi-step logic with tool calls, guardrails, and audit trails so results are explainable.

- Add AI to mobile healthcare apps as assistants that answer questions, summarize visits, draft messages, and guide next steps. HIPAA-aligned designs with FHIR or HL7 connectivity where required.

- Stand up AI analytics and dashboards for forecasts, anomaly alerts, and operational insights. Pipelines, feature stores, and cost monitoring that make results repeatable.

- Integrate AI agents with custom ambulatory software to handle intake triage, eligibility checks, care-gap reminders, visit summaries, and referral routing—using HIPAA-aligned patterns with FHIR/HL7 or SMART on FHIR, role-based access, and audit trails that fit front-desk, MA, and clinician workflows.

- Connect wearables and devices to EHRs using secure data flows, consent tracking, and write-backs. Summaries for clinicians, simple views for patients.

- Harden delivery with MLOps, including model registries, canary releases, drift checks, and rollback plans that keep production stable.

- Add an AI agent to healthcare document workflows for intake, OCR, NLP classification, and RAG-based summarization; auto-extract key fields, validate against payer or EHR data, route for e-signature, and write back via FHIR/HL7 with versioned audit logs and human-in-the-loop approvals.

Need senior AI engineers in your time zone?

We have delivered since 2013 and our hiring playbooks bring you proven nearshore specialists quickly.

What Nearshoring for AI Is and Why U.S. Companies Choose It

Nearshoring means building with teams in nearby countries that share business hours and working norms. In nearshore AI software development for U.S. companies, that proximity pays off because model lifecycles are intensely iterative: you’ll pull fresh data, run evaluations, inspect errors, and tune features repeatedly.

With a typical 0–3 hour gap across much of LATAM, and a workable partial-day overlap with Eastern Europe (including Poland, Romania, and Ukraine), those loops happen the same day instead of the next morning, which keeps momentum and stakeholder confidence high.

| 📈 Are AI-exposed industries really pulling ahead—and is that why companies outsource AI development to nearshore locations to accelerate adoption? Cross-industry analysis finds sectors most exposed to AI achieved 4.8× higher labor-productivity growth and 3× higher revenue per employee growth than less-exposed sectors, a macro signal that focused AI programs correlate with measurable business lift. |

Speed isn’t just a nice-to-have; it matches how teams are actually using AI at work. Microsoft’s latest Work Trend Index shows three in four workers already using AI on the job, which means leaders need execution capacity now, not next quarter. That urgency helps explain why companies outsource AI development to nearshore locations: the overlap enables same-day stand-ups, immediate eval re-runs, and faster rollback-or-ship decisions, so pilots move from discovery to canary in weeks, not months.

The talent pool is broad and getting broader. PwC’s Global AI Jobs Barometer reports that sectors most exposed to AI are seeing 4.8× higher labor-productivity growth than less-exposed sectors, a signal that experienced data engineers, ML engineers, and MLOps specialists are in high demand, and nearshore markets are supplying them:

“For U.S. teams, that translates into seasoned practitioners who already work on AWS, Azure, or Google Cloud and understand compliance-heavy domains like healthcare and finance. Add MLOps muscle—model registries, canary releases, cost and drift monitors—and you get AI that’s not just clever in a demo but stable, auditable, and affordable in production.”

— Slava K., CTO, TATEEDA GLOBAL

Geography helps operations and the business case. Boston Consulting Group notes Mexico’s exports to the U.S. hit a record $475B in 2023, with manufacturing FDI growing ~20% annually since 2019—evidence of a durable regionalization trend that also benefits tech delivery.

Flights of 3–6 hours make in-person workshops feasible, and shared business norms reduce rework during requirements and QA. On the infrastructure side, IDC expects AI infrastructure spend to reach $223B by 2028, signaling ample capacity for nearshore teams to train, serve, and iterate on mainstream cloud stacks.

Benefits at a glance:

- Same-day iteration: Stand-ups, model reviews, canaries, and fixes land inside your business hours. That cadence aligns with the AI-at-work reality—faster cycles, fewer “lost” days, and tighter stakeholder feedback.

- Access to senior talent: Data engineering, ML engineering, and MLOps with production wins. The productivity lift in AI-exposed sectors reflects the kind of experience you can tap nearshore to move from ideas to shipped features.

- Lower total cost of outcomes: Many U.S. teams see 20–40% savings once cycle time and reduced coordination overhead are factored in; add the coming AI infrastructure scale, and they get more experiments per dollar.

- Easier travel and cultural fit: Quick on-site sessions (often 3–6 hour flights) and shared communication styles reduce misreads during planning, QA, and launches—consistent with the regionalization trend across North America.

- Integration-ready delivery: Fluency with U.S. cloud stacks (AWS, Azure, Google Cloud) and healthcare rails (FHIR/HL7) keeps handoffs clean; the Work Trend Index shows the scale of enterprise AI usage these integrations must support.

| 💡 What about developer velocity—the heartbeat of delivery in nearshore AI software outsourcing from the United States to friendly jurisdictions? Controlled studies on AI coding assistance report developers complete tasks up to 55% faster, with additional gains in readability and time-to-merge, turning overlap hours into more shipped improvements per sprint. |

Drawbacks and How to Blunt Them in Nearshore AI Development

Nearshoring brings speed and senior talent, yet it also introduces a few predictable risks. The good news is that most of them are operational. With a clear playbook, you can move quickly while keeping data, budgets, and scope in check. Below are practical fixes you can put in place before the first line of code ships.

“Start with access and privacy. Map data domains early, decide what never leaves your tenant, tokenize PHI for development, enforce least-privilege with SSO, log every query, and ship Sprint 1 on synthetic data while BAAs and DPAs finalize. Set clear redlines—no production dumps, no personal devices, no shadow tools—so teams move fast without guessing.”

— Slava K., CTO, TATEEDA GLOBAL

Many pilots stall when data is stuck behind unclear approvals or informal sharing. Treat day one like day zero: define what data is needed, which columns include PHI or PII, and who gets access.

Then stage synthetic data for early sprints while the production pathway is finalized. In parallel, align security controls with your company standards so the team can deploy safely from the first build.
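As a rough illustration of staging synthetic data for Sprint 1, the sketch below generates reproducible fake claim records using only the Python standard library. The `ClaimRecord` fields, the name lists, and the value ranges are hypothetical placeholders, not a schema from any real system; a real project would mirror its own column inventory.

```python
import random
from dataclasses import dataclass

# Hypothetical inventory of columns that would hold PHI in the real dataset.
PHI_COLUMNS = {"patient_name", "date_of_birth"}


@dataclass
class ClaimRecord:
    claim_id: str
    patient_name: str
    date_of_birth: str
    amount_usd: float


def synthetic_claims(n: int, seed: int = 7) -> list:
    """Return n fake claim records; the same seed always yields the same data."""
    rng = random.Random(seed)  # local generator keeps runs reproducible
    first_names = ["Alex", "Sam", "Jordan", "Casey"]
    last_names = ["Rivera", "Nguyen", "Patel", "Kim"]
    records = []
    for i in range(n):
        records.append(
            ClaimRecord(
                claim_id=f"SYN-{i:05d}",  # prefix marks the row as synthetic
                patient_name=f"{rng.choice(first_names)} {rng.choice(last_names)}",
                date_of_birth=(
                    f"{rng.randint(1940, 2005)}-"
                    f"{rng.randint(1, 12):02d}-{rng.randint(1, 28):02d}"
                ),
                amount_usd=round(rng.uniform(50, 5000), 2),
            )
        )
    return records


sample = synthetic_claims(3)
```

Seeding the generator means every engineer and every CI run sees identical records, which makes early baselines comparable while production access is still pending.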

Scope and tooling need the same discipline. AI work is exploratory by nature. That does not mean the project should meander. Lock a tight pilot brief with measurable outcomes and weekly gates.

Keep the toolset compact so every engineer can ship, test, and recover without a scavenger hunt.

Common pitfalls and fixes:

- Data access delays: Solve with a clear DPA or BAA, least-privilege roles, and staged synthetic datasets for early sprints.

- Security concerns: Require SOC 2 or ISO 27001, device policies, SSO or OIDC, audited access logs, and incident runbooks.

- Scope creep: Lock a pilot brief with success metrics and weekly backlog gates.

- Tool sprawl: Standardize on a minimal stack and enforce coding standards, tests, and reviews.

Quick Risk-to-Action Table

With these controls, nearshore AI development services can move quickly and safely: clear data pathways, security you can audit, a crisp pilot scope, and a toolset small enough to be reliable under pressure:

| Risk | Early Warning Signs | Primary Owner | Practical Safeguards | Success Metric |
| --- | --- | --- | --- | --- |
| Data access delays | Tickets linger, and engineers are blocked waiting for exports | Data steward + Security | DPA or BAA in place; access via least-privilege roles; synthetic data for Sprint 1 | Model baseline trained by the end of Week 2 |
| Security gaps | Unmanaged devices; ad hoc credentials | Security lead | Device policy, SSO or OIDC, VPN or VPC, audit logs, and defined incident runbooks | Zero critical findings in the pre-launch checklist |
| Scope creep | Backlog balloons; shifting acceptance criteria | Product owner | Written pilot brief; weekly scope gates; change log with impact | Scope stability above 90% per week |
| Tool sprawl | Multiple overlapping libraries and services | Tech lead | Standard stack; reusable templates; CI checks for style and tests | Lead time cut by 20% within two sprints |
| Cost drift | Cloud bills trend upward with little value | Finance partner + Tech lead | Budget guardrails; cost dashboards; canary limits; scheduled retrain | Cost per 1k requests stays within the target band |
| Quality or drift | KPIs fluctuate; user complaints rise | MLOps lead | Eval harness, drift monitors, rollback, and hotfix playbooks | Time to rollback under 30 minutes |

| 🧭 Could regional proximity itself be an operational advantage for nearshoring AI work? The U.S. named Mexico its largest goods trading partner in 2024 with $839.6B in total goods trade—evidence of deep, frequent cross-border flows that also make short-notice workshops and faster turnarounds more practical for delivery teams. |

Technologies Commonly Used in Nearshore AI Development

Modern nearshore AI work rides on stable, well-known stacks. You want tools that ship quickly, run economically, and are easy to hire for later. Start with the cloud platforms and modeling frameworks that give you velocity today and runway for tomorrow.

Cloud AI and Platforms:

- AWS: SageMaker for training or hosting; Bedrock for managed foundation models.

- Google Cloud: Vertex AI for pipelines, registries, and online prediction.

- Microsoft Azure: Azure ML for notebooks, jobs, and deployment.

- IBM: watsonx for governed model catalogs and enterprise data connections.

Modeling and LLM Tooling:

- Core frameworks: PyTorch, TensorFlow, XGBoost, LightGBM.

- LLM providers: OpenAI, Anthropic, Meta Llama (self-host or managed).

- Retrieval and orchestration: LangChain or LlamaIndex for RAG and tool use.

“Pick platforms your team already knows, then standardize. If two tools solve the same problem, drop one; speed comes from fewer moving parts and clearer runbooks.”

— Slava K., CTO, TATEEDA GLOBAL

Under the hood, reliable data and MLOps keep models honest. You will see Airflow-style orchestrators, versioned datasets, and feature stores that make experiments repeatable and services observable.

Data and MLOps Backbone:

- Orchestration and transforms: Airflow or Prefect; dbt for SQL-first modeling.

- Compute and streams: Spark, Kafka, or Kinesis; Delta or Parquet for storage.

- Experiment and deployment: MLflow or Weights & Biases; Docker and Kubernetes.

- Features: Feast or native platform feature stores.

App and Integration Layer:

- Services and APIs: FastAPI, gRPC, REST, event buses.

- Vector search: pgvector, Pinecone, FAISS for embeddings at scale.

- Healthcare rails: HL7 v2, FHIR, SMART on FHIR for EHR connectivity and write-backs.

Governance threads through everything: dataset lineage, bias testing, PII or PHI masking, prompt and response logging, drift monitors, and kill switches. These controls make AI safer to run in production and easier to audit when questions arise.
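To make "drift monitors and kill switches" concrete, here is a minimal sketch of one common drift measure, the Population Stability Index (PSI), paired with a threshold check. The function names, the ten-bin layout, and the 0.2 cutoff are illustrative assumptions (0.2 is a widely used rule of thumb, not a standard), and a production monitor would typically come from your platform's tooling.

```python
import math


def psi(expected, actual, bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # eps avoids log(0) when a bin is empty
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_trip_kill_switch(psi_value: float, threshold: float = 0.2) -> bool:
    """Assumed policy: halt automated actions when drift exceeds the threshold."""
    return psi_value > threshold


baseline = [i / 100 for i in range(100)]
shifted = [v + 0.5 for v in baseline]
drift = psi(baseline, shifted)
```

Comparing a feature's live distribution against its training baseline on a schedule, and wiring the result to an alert or a feature flag, is one simple way to turn the governance list above into something auditable.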

Technology Snapshot Table

| Layer | Primary Goal | Common Tools | Notes for Nearshore Teams |
| --- | --- | --- | --- |
| Cloud AI | Train and serve quickly | SageMaker, Vertex AI, Azure ML, watsonx | Choose one primary platform to reduce handoffs |
| Modeling | Build accurate models fast | PyTorch, TensorFlow, XGBoost, LightGBM; OpenAI, Anthropic, Llama | Keep a small “approved” set with examples |
| Retrieval | Ground LLMs on your data | LangChain, LlamaIndex; pgvector, Pinecone, FAISS | Standardize chunking, caching, and evals |
| Data & MLOps | Reproducibility and scale | Airflow, dbt, Spark, Kafka, Delta/Parquet; MLflow, W&B | Enforce registries, tags, and CI gates |
| App & Integrations | Deliver features to users | FastAPI, gRPC, REST; HL7 v2, FHIR, SMART | Prebuilt adapters cut weeks from delivery |
| Governance | Safety and compliance | Lineage, masking, logs, drift, kill switches | Tie controls to audits and SLAs |

Security, Compliance, and Data Governance

Security is not a bolt-on for nearshore AI software development for U.S. companies; it is the scaffolding that holds every sprint together from the first dataset import to the final rollout. Treat privacy and compliance as product features, and you will move faster with fewer blockers. Start by writing down what data you need, where it lives, and who may touch it. Then codify protections so they run automatically rather than as ad hoc checks.

To make this real, anchor controls in five areas that map to common enterprise expectations:

- Contractual controls: put a DPA and NDA in place, assign IP to the client, and confirm work-for-hire ownership before any access is granted.

- Access: require least-privilege roles, SSO or OIDC, VPN or bastion entry, plus VPC peering for system-to-system paths.

- Data controls: scope PHI or PII, tokenize sensitive fields, mask in non-prod, and use synthetic data for development so teams can start while full approvals are complete.

- Audits and standards: align delivery with SOC 2 or ISO 27001; for healthcare, add HIPAA with BAAs; for payment, add PCI-DSS.

- Model governance: track dataset lineage; run bias tests and red-team exercises; monitor performance and drift; maintain a kill switch and a rollback plan.

When these pieces are part of your custom nearshore AI agent development services in the US, you get velocity with accountability, plus artifacts that satisfy security reviews without slowing the work.
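One way to picture the "tokenize sensitive fields, mask in non-prod" control is a keyed hash applied to PHI columns before data reaches a development environment. The sketch below uses Python's standard `hmac` and `hashlib` modules; the field names in `PHI_FIELDS` and the `tok_` prefix are hypothetical, and in practice the key would live in a secrets manager rather than in code.

```python
import hashlib
import hmac

# Hypothetical list of fields classified as PHI during data scoping.
PHI_FIELDS = {"patient_name", "ssn", "date_of_birth"}


def tokenize(value: str, key: bytes) -> str:
    """Replace a sensitive value with a stable keyed token (HMAC-SHA256)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"tok_{digest[:16]}"


def mask_record(record: dict, key: bytes) -> dict:
    """Tokenize PHI fields and pass every other field through unchanged."""
    return {
        name: tokenize(str(value), key) if name in PHI_FIELDS else value
        for name, value in record.items()
    }


masked = mask_record(
    {"claim_id": "C-1", "patient_name": "Jane Doe", "amount_usd": 120.0},
    key=b"dev-only-example-key",
)
```

Because the same input and key always produce the same token, joins across tables still work in development. The tokens remain linkable, though, so this is best read as pseudonymization for non-production data rather than formal de-identification.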

How the Nearshore AI Development Model Works

Nearshore delivery works best when the path is clear, visual, and measurable. You are not outsourcing decisions; you are outsourcing repeatable execution with tight feedback loops. Treat the project like engineering a system, not just writing a model, and write the story of the agent before any code: who speaks first, what data is needed, which tools the agent can call, and how success is judged at each hop.

1) Discovery and framing

Capture the business intent, users, and moments of truth. Inventory data sources, label anything that contains PHI or PII, and define precise inputs and outputs the agent must handle, for example, a claim number in and a triage decision out. Set metrics now, such as precision on flagged items, average handling time, and cost per 1,000 requests.
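The three example metrics reduce to simple arithmetic, and writing them down early avoids arguments later about how they were computed. A minimal sketch follows; the function names and sample figures are illustrative assumptions, not values from a real engagement.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of flagged items that were correctly flagged."""
    flagged = true_positives + false_positives
    return true_positives / flagged if flagged else 0.0


def average_handling_time(durations_seconds: list) -> float:
    """Mean time per handled item, in seconds."""
    if not durations_seconds:
        raise ValueError("need at least one duration")
    return sum(durations_seconds) / len(durations_seconds)


def cost_per_1k_requests(total_cost_usd: float, request_count: int) -> float:
    """Total spend normalized to 1,000 requests."""
    if request_count <= 0:
        raise ValueError("request_count must be positive")
    return total_cost_usd / request_count * 1000


# Illustrative numbers only: 45 correct flags out of 50 flagged items.
pilot_precision = precision(true_positives=45, false_positives=5)
pilot_aht = average_handling_time([60, 90, 120])
pilot_cost = cost_per_1k_requests(total_cost_usd=12.5, request_count=50_000)
```

With these sample inputs, precision works out to 45 / 50, average handling time to 270 / 3 seconds, and cost to 12.5 / 50,000 × 1,000 dollars per thousand requests.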

2) Schema and multi-step algorithm design

Draft engineering schemas that everyone can read. Use a swimlane or BPMN chart to map human and agent responsibilities. Add a sequence diagram for tool calls, such as retrieval, policy check, and database write. Describe the agent algorithm as a loop: sense, plan, act, verify. Spell out guardrails, timeouts, and retry paths. If the agent uses specialized tools, define each tool’s contract as a small spec with inputs, outputs, and failure modes.
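The sense, plan, act, verify loop can be sketched in a few dozen lines. Everything here is a hypothetical illustration: the `Tool` contract, the step names `retrieve` and `policy_check`, and the allowed decisions are placeholders, and the timeout is a simple deadline checked between attempts rather than a preemptive interrupt.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    """Small tool contract: a name, a callable (dict in, dict out), a retry budget."""
    name: str
    run: Callable[[dict], dict]
    max_retries: int = 2


class ToolError(Exception):
    """Raised when a tool exhausts its retries or the deadline passes."""


def call_with_retries(tool: Tool, payload: dict, deadline: float) -> dict:
    last_error = None
    for _ in range(tool.max_retries + 1):
        if time.monotonic() > deadline:
            raise ToolError(f"{tool.name}: deadline exceeded")
        try:
            return tool.run(payload)
        except Exception as exc:  # failure mode: remember the error and retry
            last_error = exc
    raise ToolError(f"{tool.name}: failed after retries ({last_error})")


ALLOWED_DECISIONS = {"approve", "review", "deny"}


def run_agent(claim: dict, tools: dict, timeout_s: float = 5.0) -> dict:
    deadline = time.monotonic() + timeout_s
    # Sense: pull only the inputs the agent is allowed to see.
    state = {"claim_number": claim["claim_number"]}
    # Plan: a fixed plan here; a real agent might choose steps dynamically.
    plan = ["retrieve", "policy_check"]
    trace = []
    # Act: call each tool under the shared deadline and retry budget.
    for step in plan:
        state.update(call_with_retries(tools[step], state, deadline))
        trace.append(step)
    # Verify: guardrail that routes anything unexpected to human review.
    decision = state.get("decision")
    if decision not in ALLOWED_DECISIONS:
        decision = "review"
    return {"decision": decision, "trace": trace}
```

Keeping the trace alongside the decision gives reviewers the ordered list of tool calls, which is the same information a sequence diagram captures at design time.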

3) Feasibility spike and data scaffolding

Stand up a thin vertical slice with sample data. Build a small evaluation harness that tests the algorithm end-to-end. While production access is pending, use synthetic records that mirror edge cases. Record baselines for accuracy, latency, and cost.
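A small evaluation harness can be little more than a loop over labeled cases that records accuracy, latency, and cost. The sketch below assumes a hypothetical `predict` callable and a flat per-call cost; real harnesses usually add per-slice breakdowns and persist results so baselines can be compared across sprints.

```python
import statistics
import time


def evaluate(predict, cases, cost_per_call_usd: float = 0.0) -> dict:
    """Run predict over (inputs, expected) pairs and summarize the baseline."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    correct = 0
    latencies = []
    for inputs, expected in cases:
        start = time.perf_counter()
        output = predict(inputs)
        latencies.append(time.perf_counter() - start)
        correct += int(output == expected)
    return {
        "accuracy": correct / len(cases),
        "median_latency_s": statistics.median(latencies),
        "cost_usd": cost_per_call_usd * len(cases),
    }


# Toy stand-in for a model: doubles its input, so the third case fails on purpose.
report = evaluate(lambda x: x * 2, [(1, 2), (2, 4), (3, 7)], cost_per_call_usd=0.002)
```

Running the same harness against synthetic edge cases first, and production samples later, keeps the baseline numbers comparable once real data access arrives.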

4) Build and integrate

Implement pipelines, features, and the agent graph. Wire APIs that the agent will call, then expose the agent through FastAPI or another interface your app team prefers. Add basic UI to reveal the agent’s reasoning trace for triage and audits.

5) MLOps and governance

Register models and prompts; set CI and CD for agent flows; release via canary. Monitor latency, error rate, cost, and drift. Keep rollback simple and documented. For healthcare or finance, pair the agent charts with a data-flow diagram that shows masking in development and least-privilege paths in production.
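A canary release needs two small pieces of logic: a stable way to send a fixed share of traffic to the new version, and a rule for when to roll back. The sketch below is one possible shape using only the standard library; the function names, the hash-bucket approach, and the error-rate tolerance are assumptions for illustration, and managed platforms usually provide their own traffic-splitting controls.

```python
import hashlib


def route(request_id: str, canary_percent: int) -> str:
    """Deterministically assign a request to 'canary' or 'stable'."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


def should_roll_back(canary_error_rate: float, stable_error_rate: float,
                     tolerance: float = 0.01) -> bool:
    """Assumed policy: roll back if the canary is worse by more than the tolerance."""
    return canary_error_rate - stable_error_rate > tolerance


assignments = [route(f"req-{i}", canary_percent=10) for i in range(10_000)]
canary_share = assignments.count("canary") / len(assignments)
```

Hashing the request (or user) identifier keeps each caller on the same version across retries, which makes canary metrics easier to interpret than random per-request assignment.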

6) Handover and scale

Deliver runbooks, diagrams, and a backlog for version two. Version the schemas as well as the code so the organization can evolve the agent safely. This sequence fits staffed pods or nearshore AI software outsourcing from the United States to friendly jurisdictions, and it keeps everyone focused on measurable outcomes.

Agent Design Artifacts at a Glance

| Artifact | Purpose | What It Shows | Who Uses It |
| --- | --- | --- | --- |
| Swimlane or BPMN chart | Clarify roles | Human vs. agent steps with decision gates | Product, compliance, operations |
| Sequence diagram | Orchestrate tools | Order of calls: retrieval, policy, write-back, notify | Engineers, architects |
| Data-flow diagram | Prove safety | Where PHI or PII travels, masking in dev, access in prod | Security, data stewards |
| Agent algorithm spec | Make logic explicit | Sense, plan, act, verify; retries; timeouts; guardrails | Engineers, QA |
| Evaluation harness report | Keep quality visible | Accuracy, latency, cost, and drift over time | PM, MLOps, stakeholders |

Budgeting, Timelines, and Team Shapes

Budget talks go better when scope, cadence, and staffing are explicit. For a single, narrow use case, most teams succeed with a short pilot that is easy to measure. Broader initiatives can use the same pattern by splitting into small, testable streams and funding each by milestone rather than by open-ended hours.

Plan around three practical elements:

- Typical pilot ranges: for a focused use case, expect 6 to 12 weeks from discovery to canary, with 1 to 2 additional weeks for hardening if metrics are met. Tie payments to deliverables: baseline, evaluation report, and canary release.

- Team templates: a “Pod” usually includes 1 data scientist, 1 data engineer, 1 ML engineer, and a PM or tech lead who keeps decisions flowing. An “Extension” model adds 1 or 2 roles under your internal lead when you already own product and architecture. Define overlap hours up front to guarantee same-day iteration.

- Hidden costs to surface early: data cleanup and labeling, security reviews and device provisioning, access approvals, change management and training, post-launch monitoring, and periodic retraining budgets.

Tie spend to milestones, not guesses. Release a small feature, evaluate metrics, and either expand or stop. This keeps nearshore AI software development for U.S. companies financially sane and makes progress visible to finance and compliance, not just engineering.

Budget and Team Snapshot

| Scope | Duration | Team Shape | Milestone Examples | Notes |
| --- | --- | --- | --- | --- |
| Narrow pilot (single use case) | 6–12 weeks | Pod: DS + DE + MLE + PM/Lead | Baseline report; evaluation harness; canary release | Easiest path to measurable ROI |
| Extension to existing team | 4–10 weeks | 1–2 roles under your lead | Data pipeline upgrade; model refactor; MLOps rollout | Use overlap hours for daily iteration |
| Production hardening | 2–4 weeks | Pod + Security reviewer | PII masking; access reviews; rollback drill | Required for HIPAA or PCI contexts |
| Scale-out phase | Ongoing | Pod plus SRE/MLOps | Cost dashboard; retrain schedule; capacity plan | Budget for monitoring and model drift |

Conclusion

Nearshoring for AI gives U.S. companies what they actually need: faster iteration in familiar time zones, senior talent that knows cloud and compliance, and a delivery rhythm built around measurable outcomes. When you pair clear governance with a compact toolset and disciplined MLOps, pilots move from idea to canary in weeks, integrations land cleanly, and you keep control of cost, risk, and quality. In short, this model turns AI assistants, agents, and analytics into working software that your teams can trust and your stakeholders can see.

TATEEDA is ready to help as your nearshore AI partner. We combine U.S.–based software architects and project managers for local communication and day-to-day coordination with proven R&D capacity across LATAM and Eastern Europe to keep budgets sensible without slowing delivery. If you want AI that fits your healthcare, finance, or operations workflows—designed with privacy in mind and built to run—we’ll assemble the right pod, connect to your stack, and ship.