Why Integrate AI Into Your EHR/EMR System: Use Cases, Architecture, and Real Costs

Key Takeaways:

✅ AI EHR integration delivers the clearest ROI in clinical documentation and revenue cycle automation: physicians using AI-assisted documentation report saving 3.2 hours per day, and McKinsey estimates up to $360 billion in annual healthcare savings from AI applied at scale.

✅ HIPAA requires every vendor whose AI model processes PHI to sign a Business Associate Agreement (BAA) before any PHI reaches it. This includes providers of cloud-hosted large language models such as OpenAI’s GPT models or models on AWS Bedrock.

✅ Native AI from Epic, Oracle Health, and athenahealth covers generic workflows; custom AI development is required for specialty-specific logic, proprietary data pipelines, or integrations with non-standard systems.

✅ HL7 FHIR R4 APIs are the standard data exchange layer for AI-EHR integration; systems still running HL7 v2 messaging require translation middleware before AI can consume the data.

✅ Clinician adoption, not technical architecture, is the most common failure point in AI-EHR deployments. Change management must be planned before the first line of code is written.

AI EHR integration is already delivering measurable results at health systems across the United States. Physicians using AI-assisted documentation report saving 3.2 hours per day on charting. Adoption nearly doubled in a single year: 31% of healthcare organizations now run some form of artificial intelligence on their Electronic Health Record (EHR) infrastructure, up from 16% the year before.

The case for integration is no longer theoretical. But the “why” is only half the conversation. The harder question for CTOs and engineering leads is the “how” — specifically, how you build AI into a system that holds protected health information (PHI), must comply with the Health Insurance Portability and Accountability Act (HIPAA), and cannot tolerate the failure modes common in general-purpose AI deployments.

This guide covers the business case, the seven highest-value use cases, the HIPAA-compliant architecture requirements, the custom development versus native Electronic Medical Record (EMR) AI decision, real implementation challenges, and what this actually costs to get right.


The Business Case for AI-EHR Integration

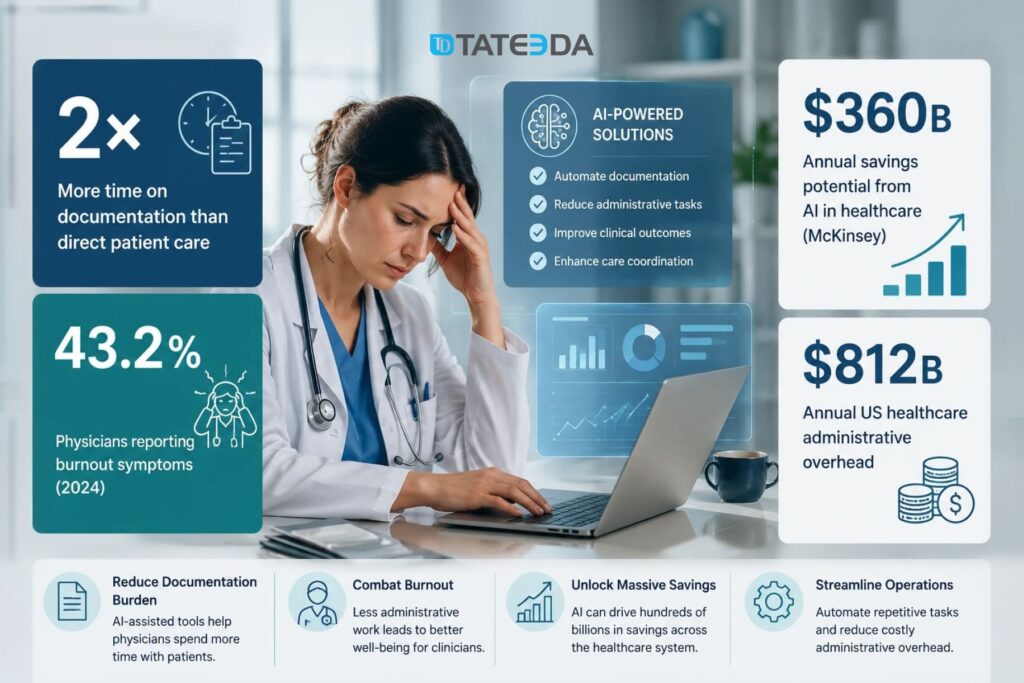

Physicians in the United States spend, on average, twice as much time on documentation as they do in direct patient care. A 2024 American Medical Association survey found that 43.2% of physicians reported burnout symptoms, and EHR documentation burden is consistently cited as a leading driver.

The financial stakes extend far beyond individual clinician productivity. Administrative overhead in US healthcare costs an estimated $812 billion annually. McKinsey estimates that AI applied systematically across health systems could yield up to $360 billion in annual savings through administrative automation, diagnostic improvement, and care pathway optimization. EHR-integrated AI is where a significant share of those savings would be realized.

Reducing documentation burden and clinician burnout

| Dr. Sarah Kim, a cardiologist at a mid-sized California health system, used to spend four to six hours per evening in the EHR after clinic hours. Cardiology notes are structurally complex: they reference device data, imaging findings, medication titrations, and downstream coding requirements that general-purpose note templates handle poorly. Her after-hours charting was not a time management problem — it was a workflow problem built into the EHR. After her organization deployed an AI ambient documentation layer that captured patient-physician conversations in real time and pre-populated the EHR with structured note drafts, her after-hours charting dropped to under an hour. The physician still reviews and approves every note; the AI handles transcription, entity extraction, and code mapping. A Yale-led study measuring this category of intervention found that AI-assisted documentation reduced physician burnout probability by 30 percentage points over a 30-day period. |

Automating administrative and revenue cycle tasks

Prior authorization alone costs US healthcare an estimated $35 billion annually in administrative overhead. AI systems trained on payer coverage policies can generate prior auth requests from structured EHR data, submit them via payer APIs, and track approval status without manual staff entry. For high-volume specialties such as oncology and orthopedics, this automation can reclaim hundreds of administrative hours per month per practice.

Insurance eligibility verification, claims coding, and appointment scheduling are equally high-volume, low-clinical-value tasks that healthcare AI automation handles well — freeing clinical staff for work that requires human judgment.

Shifting from reactive treatment to predictive care

Predictive analytics is where AI-EHR integration delivers the most transformative clinical value. Historical EHR data (lab trends, vital sign patterns, medication histories, care gaps) can be used to train models that identify patients at elevated risk for sepsis, hospital readmission, or cardiac events before those events occur.

A real-time risk stratification model integrated with the EHR can surface alerts to care teams at the point of care, enabling earlier intervention. That shift from reactive to predictive care has measurable downstream outcomes: reduced intensive care unit (ICU) admissions, shorter lengths of stay, and lower readmission penalties under Centers for Medicare and Medicaid Services (CMS) value-based care programs.

TATEEDA builds custom AI-assisted clinical workflows for healthcare organizations. See what we deliver through our custom AI development services for healthcare.

Seven High-Value Use Cases for AI in EHR/EMR Systems

Not all AI-EHR integrations deliver equal value. These seven use cases have the clearest return on investment and the most mature implementation patterns in production US health systems.

1. Ambient clinical documentation

AI ambient scribes listen to patient-physician conversations, extract clinically relevant information, and populate the EHR with structured note drafts. The physician reviews and approves the output; the AI does not write autonomously to the medical record. That physician-in-the-loop review of AI drafts is a key regulatory distinction.

Implementation requires a secure audio capture layer, a transcription pipeline with PHI handling controls, and a structured-data output mapping to the EHR’s note schema. HIPAA requires the transcription service to operate under a signed BAA. Epic has embedded ambient documentation into its platform; Oracle Health offers similar functionality. Third-party solutions, including Nuance DAX and Suki, integrate via FHIR APIs.
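To make the "AI drafts, physician approves" boundary concrete, here is a minimal Python sketch, assuming the integration writes drafts back as FHIR R4 DocumentReference resources. The function names `build_draft_note` and `approve_note` are hypothetical, LOINC 11506-3 (Progress note) is only an example note type, and whether your EHR accepts DocumentReference writes through its API is vendor-specific. The idea is that AI output always carries `docStatus: "preliminary"`, and only the physician’s sign-off produces a `final` document.

```python
import base64
import copy
from datetime import datetime, timezone

# Example note type; pick the LOINC document code your EHR expects.
PROGRESS_NOTE = {"coding": [{"system": "http://loinc.org", "code": "11506-3", "display": "Progress note"}]}


def build_draft_note(patient_id: str, encounter_id: str, note_text: str) -> dict:
    """Wrap an AI-generated draft as a FHIR R4 DocumentReference marked as unsigned."""
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        "docStatus": "preliminary",  # draft: not yet reviewed or signed by the physician
        "type": copy.deepcopy(PROGRESS_NOTE),
        "subject": {"reference": f"Patient/{patient_id}"},
        "date": datetime.now(timezone.utc).isoformat(),
        "context": {"encounter": [{"reference": f"Encounter/{encounter_id}"}]},
        "content": [{
            "attachment": {
                "contentType": "text/plain",
                "data": base64.b64encode(note_text.encode("utf-8")).decode("ascii"),
            }
        }],
    }


def approve_note(draft: dict, practitioner_id: str) -> dict:
    """Return a signed copy; called only after the physician has reviewed the draft."""
    signed = copy.deepcopy(draft)  # leave the draft untouched for the audit trail
    signed["docStatus"] = "final"
    signed["authenticator"] = {"reference": f"Practitioner/{practitioner_id}"}
    return signed
```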

2. Clinical decision support

AI-powered clinical decision support systems (CDSS) analyze EHR data in real time to surface evidence-based recommendations: drug interaction warnings, diagnostic differentials, care protocol adherence alerts, and preventive care reminders.

The critical architecture requirement here is low-latency data access. Decision support that fires 30 seconds after the physician has moved on gets dismissed. The integration layer must query the EHR via FHIR R4 APIs with sub-second response times, requiring careful data pipeline design and a caching strategy. CDS Hooks, a specification built on FHIR, provides the standard mechanism for triggering clinical decision support within EHR workflows.
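A CDS Hooks service is an HTTPS endpoint: the EHR reads a discovery document at `GET {baseUrl}/cds-services`, then POSTs a hook request and renders the cards in the response. The sketch below, in plain Python without a web framework, shows a discovery document and an `order-select` handler. The service ID, the `activeMeds` prefetch key, and the one-row interaction table are illustrative assumptions, not a clinical knowledge base. Prefetch is one lever on latency: when the EHR honors the prefetch template, the active medication list arrives with the request and the service skips a FHIR round trip.

```python
# Service discovery document served at GET {baseUrl}/cds-services.
DISCOVERY = {
    "services": [{
        "hook": "order-select",
        "id": "drug-interaction-check",
        "title": "Drug interaction check",
        "description": "Warns when a draft medication order interacts with an active medication.",
        # Prefetch template: the EHR may run this query and send the result with the request.
        "prefetch": {"activeMeds": "MedicationRequest?patient={{context.patientId}}&status=active"},
    }]
}

# Illustrative single-row table, NOT a clinical knowledge base.
INTERACTIONS = {frozenset({"warfarin", "aspirin"}): "Increased bleeding risk"}


def _med_names(bundle):
    """Lower-cased medication names from a Bundle of MedicationRequest resources."""
    names = set()
    for entry in (bundle or {}).get("entry", []):
        text = entry.get("resource", {}).get("medicationCodeableConcept", {}).get("text")
        if text:
            names.add(text.lower())
    return names


def handle_order_select(request: dict) -> dict:
    """Handle a CDS Hooks order-select request and return zero or more cards."""
    drafts = _med_names(request.get("context", {}).get("draftOrders"))
    active = _med_names(request.get("prefetch", {}).get("activeMeds"))
    cards = []
    for new in sorted(drafts):
        for current in sorted(active):
            reason = INTERACTIONS.get(frozenset({new, current}))
            if reason:
                cards.append({
                    "summary": f"{reason}: {new} + {current}"[:139],  # spec: under 140 chars
                    "indicator": "warning",
                    "source": {"label": "Drug interaction check (illustrative)"},
                })
    return {"cards": cards}
```

If the EHR does not fulfil the prefetch, a real service has to query the data itself using the `fhirServer` and `fhirAuthorization` fields of the hook request, which this sketch leaves out.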

3. Predictive risk stratification

Models trained on longitudinal EHR data predict patient-level risk for sepsis, readmission, falls, and chronic disease progression. These models run as background services, continuously scoring the active patient population and surfacing high-risk flags to care teams via the EHR dashboard.
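Production sepsis models are typically trained ML models, but the background-scoring loop looks the same for a simple rule. As a stand-in, this sketch uses the published qSOFA criteria (respiratory rate ≥ 22/min, systolic BP ≤ 100 mmHg, altered mentation, approximated here as GCS < 15), which is a bedside screening score rather than a learned model. The `Vitals` record shape and function names are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Vitals:
    patient_id: str
    resp_rate: Optional[float]    # breaths/min
    systolic_bp: Optional[float]  # mmHg
    gcs: Optional[int]            # Glasgow Coma Scale, 3-15


def qsofa(v: Vitals) -> int:
    """qSOFA: one point each for RR >= 22, SBP <= 100, altered mentation (GCS < 15).

    Missing values score 0 here, which under-flags; a real pipeline should surface missingness.
    """
    score = 0
    if v.resp_rate is not None and v.resp_rate >= 22:
        score += 1
    if v.systolic_bp is not None and v.systolic_bp <= 100:
        score += 1
    if v.gcs is not None and v.gcs < 15:
        score += 1
    return score


def score_population(census: list[Vitals], threshold: int = 2) -> list[tuple[str, int]]:
    """Score every active patient and return those at or above threshold, highest first."""
    scored = [(v.patient_id, qsofa(v)) for v in census]
    return sorted((s for s in scored if s[1] >= threshold), key=lambda s: -s[1])
```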

Oracle Health has integrated sepsis prediction models into its platform at hospital systems, including The University of Kansas Health System, where real-time AI alerts led to measurably faster clinical interventions and a documented reduction in preventable complications.

4. Prior authorization and insurance workflow automation

AI models trained on payer coverage policies can generate prior authorization requests from structured EHR data, submit them via payer APIs or clearinghouse integrations, and track approval status — all without manual staff entry. Integration with payers via EDI 278 transactions or REST APIs is the standard architecture for this use case.
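For payers that accept FHIR rather than X12, a prior authorization request is a FHIR R4 Claim with `use` set to `preauthorization`. A minimal Python sketch, assuming hypothetical resource IDs and function name; ICD-10-CM M17.11 (unilateral primary osteoarthritis, right knee) and CPT 27447 (total knee arthroplasty) are example codes only. The HL7 Da Vinci Prior Authorization Support (PAS) guide builds on this resource with additional required profiles that the sketch does not implement.

```python
from datetime import date

ICD10CM = "http://hl7.org/fhir/sid/icd-10-cm"
CPT = "http://www.ama-assn.org/go/cpt"


def build_preauth_claim(patient_id, provider_id, coverage_id, icd10_codes, cpt_codes):
    """Assemble a FHIR R4 Claim with use='preauthorization' from structured EHR data."""
    if not icd10_codes or not cpt_codes:
        raise ValueError("a prior auth request needs at least one diagnosis and one service")
    diagnoses = [
        {"sequence": i, "diagnosisCodeableConcept": {"coding": [{"system": ICD10CM, "code": code}]}}
        for i, code in enumerate(icd10_codes, start=1)
    ]
    items = [
        {
            "sequence": i,
            # Link each requested service to every diagnosis (simplification).
            "diagnosisSequence": [d["sequence"] for d in diagnoses],
            "productOrService": {"coding": [{"system": CPT, "code": code}]},
        }
        for i, code in enumerate(cpt_codes, start=1)
    ]
    return {
        "resourceType": "Claim",
        "status": "active",
        "type": {"coding": [{"system": "http://terminology.hl7.org/CodeSystem/claim-type",
                             "code": "professional"}]},
        "use": "preauthorization",
        "patient": {"reference": f"Patient/{patient_id}"},
        "created": date.today().isoformat(),
        "provider": {"reference": f"Practitioner/{provider_id}"},
        "priority": {"coding": [{"system": "http://terminology.hl7.org/CodeSystem/processpriority",
                                 "code": "normal"}]},
        "insurance": [{"sequence": 1, "focal": True, "coverage": {"reference": f"Coverage/{coverage_id}"}}],
        "diagnosis": diagnoses,
        "item": items,
    }
```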

5. NLP-driven data normalization and interoperability

A significant share of clinically valuable EHR data is unstructured: physician notes, dictations, scanned documents. Natural language processing (NLP) pipelines extract structured entities — diagnoses, medications, procedures, lab values — from free text, making that data queryable for analytics, population health, and AI model training.

This is an area where custom AI development often outperforms native EHR AI, because the NLP models need training on institution-specific note patterns, specialty-specific terminology, and local documentation conventions. Generic models can produce acceptable results; fine-tuned models are more likely to reach the accuracy required for clinical decisions.
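The simplest form of the extraction step is a rule-based pass. This Python sketch pulls drug-dose mentions from free text with one regular expression and keeps character offsets so each extracted entity can be traced back to its source note. It is a deliberately naive baseline (no negation, abbreviations, or misspellings) of the kind a fine-tuned clinical NLP model would replace; the pattern and function name are assumptions.

```python
import re

# Illustrative pattern: <drug word> <number> <unit>. Real clinical NLP also needs negation
# ("no aspirin"), abbreviations, misspellings, and institution-specific vocabularies.
DOSE_PATTERN = re.compile(
    r"\b(?P<drug>[A-Za-z][A-Za-z-]+)\s+(?P<amount>\d+(?:\.\d+)?)\s*(?P<unit>mg|mcg|g|units?)\b",
    re.IGNORECASE,
)


def extract_medication_doses(note: str) -> list[dict]:
    """Return structured drug/amount/unit entities found in a free-text note."""
    results = []
    for m in DOSE_PATTERN.finditer(note):
        results.append({
            "drug": m.group("drug").lower(),
            "amount": float(m.group("amount")),
            "unit": m.group("unit").lower(),
            "span": m.span(),  # character offsets, kept for provenance and audit
        })
    return results
```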

6. Patient communication and follow-up automation

AI-driven patient communication layers integrated with the EHR’s patient portal automate post-visit follow-ups, medication adherence reminders, care gap notifications, and appointment scheduling. These workflows read from and write to the EHR, keeping communication records as part of the longitudinal patient record.

7. Revenue cycle management and coding accuracy

AI coding assistants analyze clinical notes and encounter data to suggest accurate ICD-10 and CPT codes, flag undercoding, and identify documentation gaps that trigger claim denials. Revenue cycle automation directly impacts cash flow. Claim denial rates above 5% are considered a performance problem in most revenue cycle benchmarks, and AI-assisted coding typically reduces denials by 15 to 30% in high-adoption implementations.
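The denial-rate arithmetic is worth doing explicitly. A minimal sketch, assuming the 15 to 30% figure is a relative reduction and using a hypothetical practice with 800 denials out of 10,000 claims:

```python
def denial_rate(denied: int, submitted: int) -> float:
    """Share of submitted claims that were denied."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    return denied / submitted


def after_relative_reduction(rate: float, reduction: float) -> float:
    """Apply a relative reduction, e.g. 0.20 turns an 8% rate into 6.4%."""
    return rate * (1 - reduction)


baseline = denial_rate(800, 10_000)  # hypothetical practice
for r in (0.15, 0.30):
    print(f"{r:.0%} reduction -> {after_relative_reduction(baseline, r):.1%}")
```

At this hypothetical 8% baseline, a 15% relative reduction lands at 6.8% and a 30% reduction at 5.6%, both still above the 5% benchmark, so the starting rate matters as much as the percentage improvement.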

What AI-EHR Integration Requires: A HIPAA-Compliant Architecture

Most vendor-written content on AI-EHR integration mentions HIPAA without spelling out what it actually requires in your architecture. HIPAA-compliant AI development is an engineering discipline, not a checkbox — here is what your team needs to implement.

FHIR R4 APIs as the data exchange layer

Fast Healthcare Interoperability Resources (FHIR) R4 is the standard for structured healthcare data exchange in modern US health systems. The HL7 FHIR R4 specification defines the resource types, RESTful API patterns, and data formats that allow AI systems to query patient data from EHRs in a standardized way.
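In practice, querying patient data "in a standardized way" means RESTful search requests that return a FHIR Bundle. A minimal Python sketch, assuming a hypothetical server base URL and using LOINC 4548-4 (hemoglobin A1c) as the example code; the function names are illustrative. A real client would also follow the Bundle’s `next` link to page through results.

```python
from urllib.parse import urlencode


def lab_search_url(base_url: str, patient_id: str, loinc_code: str, count: int = 50) -> str:
    """Build a FHIR R4 search for one patient's results for a LOINC code, newest first."""
    params = {
        "patient": patient_id,
        "code": f"http://loinc.org|{loinc_code}",  # token search: system|code
        "_sort": "-date",
        "_count": count,
    }
    return f"{base_url.rstrip('/')}/Observation?{urlencode(params)}"


def quantities_from_bundle(bundle: dict) -> list[tuple[str, float, str]]:
    """Extract (effective date, value, unit) from Observations in a searchset Bundle."""
    results = []
    for entry in bundle.get("entry", []):
        obs = entry.get("resource", {})
        qty = obs.get("valueQuantity", {})
        # Skip non-Observation entries (e.g. OperationOutcome) and non-numeric results.
        if obs.get("resourceType") == "Observation" and "value" in qty:
            results.append((obs.get("effectiveDateTime", ""), float(qty["value"]), qty.get("unit", "")))
    return results
```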

SMART on FHIR adds an OAuth 2.0-based authorization layer to FHIR APIs, enabling AI applications to access patient data on behalf of an authorized clinician or patient, scoped to specific resource types and patients. Any AI application reading PHI from an EHR via API must implement SMART on FHIR authorization correctly.
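A minimal sketch of the authorization request a SMART on FHIR app builds, assuming SMART App Launch v2 conventions (PKCE with S256, `.rs` read/search scope syntax). The endpoint URLs and function names are hypothetical; a real app discovers the authorize endpoint from the server’s `.well-known/smart-configuration` document, and a background service with no user in the loop would use the SMART Backend Services client-credentials flow instead.

```python
import base64
import hashlib
import secrets
from urllib.parse import urlencode


def pkce_pair() -> tuple[str, str]:
    """Create a PKCE code_verifier and its S256 code_challenge (RFC 7636)."""
    verifier = secrets.token_urlsafe(64)  # 86 URL-safe characters, within the 43-128 limit
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def smart_authorize_url(authorize_endpoint, client_id, redirect_uri, fhir_base, scopes, launch=None):
    """Build a SMART authorization request. Returns (url, verifier, state);
    keep verifier and state server-side for the token exchange and CSRF check."""
    verifier, challenge = pkce_pair()
    state = secrets.token_urlsafe(16)
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": " ".join(scopes),
        "state": state,
        "aud": fhir_base,  # the FHIR server the access token will be used against
        "code_challenge": challenge,
        "code_challenge_method": "S256",
    }
    if launch:  # EHR launch passes an opaque launch token; standalone launch omits it
        params["launch"] = launch
    return f"{authorize_endpoint}?{urlencode(params)}", verifier, state
```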

Systems still running HL7 v2 messaging — a significant portion of older hospital infrastructure — require translation middleware before AI systems can consume the data in FHIR format. This translation step is a non-trivial engineering task: it must preserve data fidelity, map HL7 v2 message segments to FHIR resource fields accurately, and maintain audit trail integrity through the translation.
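The core of HL7 v2 to FHIR translation is field-by-field mapping. This Python sketch maps one PID segment (PID-3 identifier, PID-5 name, PID-7 birth date, PID-8 sex) to a minimal FHIR R4 Patient. It assumes default delimiters, ignores escape sequences and most repetitions, and the function name is hypothetical; HL7’s published v2-to-FHIR mapping guidance and interface engines cover the full mapping.

```python
V2_SEX_TO_FHIR = {"M": "male", "F": "female", "O": "other", "U": "unknown"}  # simplified


def pid_to_patient(segment: str) -> dict:
    """Map a single HL7 v2 PID segment to a minimal FHIR R4 Patient resource.

    Assumes default delimiters (| field, ^ component, ~ repetition) and ignores escape
    sequences; a production translator must honor the delimiters declared in MSH.
    """
    fields = segment.rstrip("\r\n").split("|")
    if fields[0] != "PID":
        raise ValueError("expected a PID segment")

    def field(n: int) -> str:
        # Outside MSH, PID-n lands at index n after splitting on "|".
        return fields[n] if len(fields) > n else ""

    patient: dict = {"resourceType": "Patient"}

    cx = field(3).split("~")[0].split("^")  # PID-3 (first repetition): ID^check^scheme^authority^type
    if cx[0]:
        identifier = {"value": cx[0]}
        if len(cx) > 3 and cx[3]:
            identifier["assigner"] = {"display": cx[3]}
        if len(cx) > 4 and cx[4]:
            identifier["type"] = {"coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/v2-0203", "code": cx[4]}]}
        patient["identifier"] = [identifier]

    xpn = field(5).split("~")[0].split("^")  # PID-5: family^given^middle...
    if xpn[0]:
        name = {"family": xpn[0]}
        given = [g for g in xpn[1:3] if g]
        if given:
            name["given"] = given
        patient["name"] = [name]

    dob = field(7)[:8]  # PID-7 timestamp; keep YYYYMMDD only
    if len(dob) == 8 and dob.isdigit():
        patient["birthDate"] = f"{dob[:4]}-{dob[4:6]}-{dob[6:]}"

    sex = field(8)
    if sex:
        patient["gender"] = V2_SEX_TO_FHIR.get(sex, "unknown")
    return patient
```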

Handling PHI within LLM pipelines

If your AI integration sends EHR data to a large language model (LLM), including OpenAI’s GPT-4, Anthropic’s Claude, or models hosted on AWS Bedrock, that LLM provider must sign a HIPAA BAA before any PHI flows into the pipeline. This is a hard requirement under HIPAA’s business associate provisions, not a best-practice recommendation.

Several cloud platforms offer BAA-eligible LLM configurations: Microsoft Azure OpenAI Service, AWS Bedrock under the AWS BAA, and Google Cloud’s Vertex AI under the Google Cloud BAA. Standard OpenAI API access without a signed BAA is not authorized for PHI processing.

Beyond the BAA, your pipeline must implement PHI minimization: stripping or replacing patient identifiers where the AI function does not require them. A coding assistance model needs clinical content; it does not need the patient’s name and date of birth. Every PHI field passed to an AI model expands the compliance surface area.
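PHI minimization can be sketched as a function that sits between the EHR read and the model call. This Python example uses hypothetical names and an illustrative, not exhaustive, list of identifier fields and regex patterns. It drops direct identifiers from a FHIR Patient, redacts a few obvious patterns from note text, and swaps the patient ID for a random token whose mapping stays inside your environment. Pattern-based redaction misses plenty of free-text PHI, so treat it as one layer, not a de-identification guarantee.

```python
import re
import secrets

# Fields a hypothetical coding-assistance model does not need. Illustrative only:
# this is not the full HIPAA Safe Harbor list of 18 identifier types.
DROP_FIELDS = {"name", "telecom", "address", "birthDate", "identifier", "photo", "contact"}
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")
MRN = re.compile(r"\bMRN[:#\s]*\d+\b", re.IGNORECASE)


def redact_text(text: str) -> str:
    """Replace a few obvious identifier patterns in free text with placeholders."""
    return MRN.sub("[MRN]", PHONE.sub("[PHONE]", text))


def build_model_payload(patient: dict, note_text: str) -> tuple[dict, dict]:
    """Return (payload for the AI model, local re-identification map).

    The map never leaves the covered environment; the model only sees a random token.
    """
    token = secrets.token_hex(8)
    minimized = {k: v for k, v in patient.items() if k not in DROP_FIELDS}
    minimized["id"] = token
    return {"patient": minimized, "note": redact_text(note_text)}, {token: patient.get("id")}
```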

Audit logs must capture every PHI access event: which system accessed what data, when, under which user context, and what the AI system produced. HIPAA’s six-year documentation retention requirement (45 CFR 164.316(b)(2)) is widely applied to these logs, and the logs should be protected against alteration. See the HHS guidance on Business Associate obligations for the authoritative requirements.
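One common way to make audit logs tamper-evident is hash chaining, where each entry includes the hash of the entry before it. The sketch below is a minimal Python illustration with hypothetical field names; it records the accessing system, the user context, the resource touched, and, for AI outputs, the model version and a pointer to what was produced.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of PHI access by an AI service.

    Each entry commits to the previous entry's hash, so altering or deleting an earlier
    entry breaks verify(). That makes tampering detectable, not impossible; pair it with
    write-once storage in practice.
    """

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, system, on_behalf_of, action, resource, model_version=None, output_ref=None) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "system": system,              # which service accessed the data
            "on_behalf_of": on_behalf_of,  # user context, e.g. the treating clinician
            "action": action,
            "resource": resource,          # what data was accessed or written
            "model_version": model_version,
            "output_ref": output_ref,      # pointer to what the AI produced
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```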

Audit logging, explainability, and access controls

HIPAA’s Security Rule requires role-based access controls (RBAC) that enforce minimum necessary access to PHI. In an AI-EHR architecture, the AI system’s service account must be scoped to exactly the data types and patient populations it needs — not granted broad read access to the EHR database.
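Scoping usually lives in the EHR’s access policies and the OAuth scopes granted to the service, but a default-deny check inside the AI service is a cheap second layer. A minimal Python sketch; the `ServiceAccount` shape and names are hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceAccount:
    name: str
    resource_types: frozenset[str]  # FHIR resource types this AI service may read
    patient_ids: frozenset[str]     # patients in scope, e.g. one unit's census


def is_allowed(account: ServiceAccount, resource_type: str, patient_id: str) -> bool:
    """Default-deny: both the data type and the patient must be explicitly in scope."""
    return resource_type in account.resource_types and patient_id in account.patient_ids
```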

For clinical decision support systems where the AI’s recommendations become part of the clinical record, audit logs must also capture the model version that generated the recommendation. If a CDSS alert influenced a clinical decision and the model is later found to be biased or inaccurate, your organization needs to reconstruct exactly what the model produced for that patient encounter.

BAA obligations with third-party AI models

The BAA chain extends to every subcontractor that handles PHI. If you deploy an AI ambient scribe that uses a third-party transcription service, that transcription service is a business associate and needs its own BAA. Map the full data flow before selecting vendors: a missing BAA anywhere in the chain is a violation in its own right, no matter how strong your other compliance controls are.
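Mapping the full data flow is a graph problem: list who sends PHI to whom, then check each hop for a signed BAA. A minimal Python sketch with hypothetical party names, assuming each agreement is recorded as a (discloser, recipient) pair:

```python
from collections import deque


def missing_baas(phi_flows: dict[str, list[str]], signed: set[tuple[str, str]], source: str) -> list[tuple[str, str]]:
    """Walk every PHI hop reachable from `source` (breadth-first) and return hops with no BAA.

    phi_flows: party -> parties it sends PHI to
    signed:    (discloser, recipient) pairs covered by a signed BAA
    """
    missing, seen, queue = [], {source}, deque([source])
    while queue:
        party = queue.popleft()
        for recipient in phi_flows.get(party, []):
            if (party, recipient) not in signed:
                missing.append((party, recipient))
            if recipient not in seen:  # visit each party once, even with cycles
                seen.add(recipient)
                queue.append(recipient)
    return missing
```

For example, a health system that signed with its scribe vendor, where the vendor signed with its LLM provider but not its transcription API, yields a single gap: `("scribe_vendor", "transcription_api")`.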

Custom AI Development vs. Native EHR AI

| Marcus Webb, VP of Engineering at a 300-bed regional health system, spent six months trying to make Epic’s native AI features solve his cardiology department’s specific documentation requirements. The native ambient documentation worked well for general medicine. For cardiology, where note structures reference device data from ECG systems and hemodynamic monitors, the native model produced drafts that required as much editing as writing from scratch. His team eventually built a custom AI documentation layer on top of Epic’s FHIR APIs: a fine-tuned model trained on their own cardiology notes, integrated with device data feeds, with structured output mapped directly to Epic’s note fields. The result was a tool that worked the way cardiologists actually chart — and adoption reached 87% within eight weeks of rollout. |

What Epic, Oracle Health, and athenahealth’s embedded AI actually does

Epic has integrated GPT-4-based ambient documentation, AI-assisted coding, and predictive analytics into its platform. Oracle Health offers note generation, clinical decision support alerts, and predictive models for specific high-acuity conditions. athenahealth provides AI-assisted coding and population health analytics.

These native features work well for common use cases across general clinical workflows. They are constrained by the EHR vendor’s model choices, training data, and update cadences, and they generally cannot be customized to specialty-specific requirements, fine-tuned on your institution’s own patient data, or extended to integrate with systems outside the EHR vendor’s ecosystem.

Use native EHR AI when…

- Workflows are standard (primary care, general internal medicine)

- No large volumes of institutional data to fine-tune on

- Budget for AI implementation is limited

- Use case is on the vendor’s roadmap

- You accept the vendor’s model versioning schedule

Build a custom AI layer when…

- Specialty-specific documentation required (cardiology, oncology, psychiatry)

- Non-standard device or third-party integrations needed

- Predictive models must train on your own patient data

- You need model versioning and explainability control

- Data sovereignty is a regulatory or competitive requirement

A hybrid approach is common in practice: native EHR AI handles standard workflows; custom AI modules handle specialized cases via the EHR’s FHIR API layer, running as independently deployed services.

TATEEDA builds custom AI layers that integrate with Epic, Oracle Health, and athenahealth via HL7 FHIR R4 APIs. See our full scope at our EHR integration services page.

Real Implementation Challenges, and How to Solve Them

Data fragmentation and normalization

EHR data is rarely clean. A health system that has run Epic for eight years has eight years of data entry variations, free-text note conventions, custom code mappings, and legacy migration artifacts. Before any AI model operates on that data, it requires a normalization pipeline that standardizes formats, resolves conflicting entries, and handles missing values without corrupting the downstream model.
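One small piece of such a pipeline, sketched in Python: normalizing blood glucose results that arrive in either mg/dL or mmol/L, and flagging missing or unrecognized values instead of silently imputing them. The conversion factor follows from glucose’s molar mass (about 180.16 g/mol, so 1 mmol/L ≈ 18.016 mg/dL); the function names and status labels are assumptions.

```python
GLUCOSE_MG_DL_PER_MMOL_L = 18.016  # from glucose molar mass of about 180.16 g/mol


def normalize_glucose(value, unit):
    """Return (value in mg/dL, status). Missing or unrecognized inputs are flagged, never imputed."""
    if value is None:
        return None, "missing"
    key = (unit or "").replace(" ", "").lower()
    if key == "mg/dl":
        return round(float(value), 1), "ok"
    if key == "mmol/l":
        return round(float(value) * GLUCOSE_MG_DL_PER_MMOL_L, 1), "converted"
    return None, "unrecognized_unit"


def normalize_batch(rows):
    """rows: (patient_id, value, unit). Returns normalized rows plus a count per status for QA."""
    out, counts = [], {}
    for patient_id, value, unit in rows:
        mg_dl, status = normalize_glucose(value, unit)
        out.append((patient_id, mg_dl, status))
        counts[status] = counts.get(status, 0) + 1
    return out, counts
```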

NLP pipelines that extract structured entities from clinical notes add another layer of complexity: the same clinical concept may appear differently across physicians, specialties, and years. A sepsis risk model trained on data where “septic shock” and “SIRS with organ dysfunction” are not mapped to the same entity will underperform on populations where different documentation conventions are common. Building the normalization layer is typically the most time-consuming pre-deployment task in an AI-EHR project.
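The fix for the septic shock / SIRS example is a concept normalization table that maps surface forms onto one canonical entity before training. A minimal Python sketch; the concept ID and synonym list are hypothetical, and a production system would usually map to a standard terminology such as SNOMED CT and route unmapped mentions to human review.

```python
import re
from typing import Optional

# Hypothetical institution-specific synonym table; the concept ID is illustrative.
CONCEPT_SYNONYMS = {
    "concept:sepsis-severe": ["septic shock", "SIRS with organ dysfunction", "severe sepsis"],
}


def _canon(text: str) -> str:
    """Lowercase, collapse whitespace, and drop surrounding punctuation."""
    return re.sub(r"\s+", " ", text.lower()).strip(" .;,")


LOOKUP = {_canon(s): concept for concept, syns in CONCEPT_SYNONYMS.items() for s in syns}


def normalize_mentions(mentions: list[str]) -> list[Optional[str]]:
    """Map each mention to a canonical concept, or None so it can be queued for review."""
    return [LOOKUP.get(_canon(m)) for m in mentions]
```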

Clinician adoption: the most common failure point

An AI system that clinicians do not use delivers no value regardless of technical quality. Most AI-EHR implementations that fail do so at the adoption layer, not the architecture layer.

The pattern is predictable. An AI documentation tool is deployed. Clinicians find that the drafts require as much review time as manual charting, either because the model was not validated against their note patterns or because the workflow introduced friction that offsets the time savings.

Adoption drops. The project is declared a failure.

The root cause is almost always a validation gap, not a model quality problem.

Successful adoption requires clinical champions involved in validation before rollout, a feedback loop that lets clinicians flag model errors directly into the training pipeline, workflow integration that surfaces the AI tool within the EHR rather than requiring a context switch, and measurable productivity metrics tracked within the first two weeks of deployment. When physicians can see their after-hours charting time in a dashboard and watch it decline, adoption becomes self-reinforcing.

Ongoing HIPAA compliance for AI systems

AI systems require ongoing compliance monitoring, not just initial validation. Model updates — whether from a vendor or from retraining on new institutional data — must be validated for PHI handling before deployment. Audit logs must be reviewed periodically for anomalous access patterns. BAAs with AI vendors must be tracked for renewal and scope changes.
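Periodic review is easier when the first pass is automated. A minimal Python sketch, assuming audit events have been reduced to (actor, patient_id) pairs for one review window and that you keep a per-actor baseline of distinct patients touched. The 3× factor and 20-patient floor are arbitrary placeholders to tune, and flagged actors go to a human reviewer rather than being blocked automatically.

```python
from collections import defaultdict


def flag_unusual_access(events, baseline, factor=3.0, min_unbaselined=20):
    """Flag actors whose distinct-patient count in this window is unusually high.

    events:   iterable of (actor, patient_id) access events for one review window
    baseline: actor -> typical distinct patients per window (hypothetical history)
    Returns actor -> distinct patient count for every flagged actor.
    """
    patients_by_actor = defaultdict(set)
    for actor, patient_id in events:
        patients_by_actor[actor].add(patient_id)

    flagged = {}
    for actor, patients in patients_by_actor.items():
        n = len(patients)
        typical = baseline.get(actor)
        if typical is None:
            if n >= min_unbaselined:  # unknown actor touching many records
                flagged[actor] = n
        elif n > factor * typical:
            flagged[actor] = n
    return flagged
```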

The ONC’s Trusted Exchange Framework and Common Agreement (TEFCA) adds a layer of interoperability compliance for organizations participating in Qualified Health Information Networks (QHINs). By 2026, TEFCA alignment is increasingly expected for health systems operating AI-assisted data exchange.

AI bias and explainability requirements

Regulators, risk management teams, and clinical governance committees are asking healthcare organizations to document how AI recommendations are generated and validated for bias. Predictive models trained on historical EHR data can inherit historical disparities in care: models trained on datasets that underrepresent certain populations may produce less accurate risk scores for those groups.
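A first bias check is simply to compute the same performance metric per subgroup. This Python sketch computes sensitivity (true positives divided by all actual positives) by group from (group, outcome, prediction) records; the record format and function names are assumptions, and a real fairness review would also look at calibration, false positive rates, and per-group sample sizes.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, actual, predicted) with 1 = event / flagged.

    Sensitivity = true positives / all actual positives, computed per group.
    Groups with no actual positives are omitted rather than reported as 0.
    """
    tp, positives = defaultdict(int), defaultdict(int)
    for group, actual, predicted in records:
        if actual == 1:
            positives[group] += 1
            if predicted == 1:
                tp[group] += 1
    return {g: tp[g] / positives[g] for g in positives}


def largest_gap(metric_by_group):
    """Spread between the best- and worst-served groups."""
    values = list(metric_by_group.values())
    return max(values) - min(values)
```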

Explainability frameworks — including LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) — allow engineering teams to produce human-readable explanations of individual model predictions. Deploying explainability tooling is no longer optional for clinical AI systems at enterprise health systems that must demonstrate model governance to their clinical and compliance leadership.
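SHAP is built on Shapley values from cooperative game theory. To show what the number means, this Python sketch computes exact Shapley values for a single prediction by enumerating every feature coalition, with absent features set to a baseline value. It is not the `shap` library and does not cover LIME; it costs O(2^n) model calls, which is why SHAP relies on approximations or model-specific algorithms. The toy model and inputs are made up.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley value of each feature for one prediction.

    Features outside a coalition are set to their baseline value. This enumerates
    all 2^(n-1) coalitions per feature, so it is only practical for small n.
    """
    n = len(x)

    def value(coalition):
        return predict([x[i] if i in coalition else baseline[i] for i in range(n)])

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


def toy_model(z):
    """Hypothetical linear score, used only to check the result."""
    return 0.5 + 2.0 * z[0] - 1.0 * z[1] + 0.5 * z[2]


x, base = [1.0, 3.0, 2.0], [0.0, 1.0, 1.0]
phi = shapley_values(toy_model, x, base)
print(phi, sum(phi), toy_model(x) - toy_model(base))
```

For a linear model the result reduces to weight × (value − baseline) for each feature, here 2.0, −2.0, and 0.5, and the values always sum to f(x) − f(baseline). That additivity is what lets a clinician read them as contributions to a single risk score.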

What Does AI EHR Integration Cost?

Cost ranges vary significantly by scope. The table below provides a directional framework:

| Integration Type | Estimated Cost Range | Timeline |
|---|---|---|
| Native EHR AI feature configuration | $25,000–$100,000 (implementation + first-year support) | 2–6 months |
| Custom AI module on existing EHR (single use case, fine-tuned) | $150,000–$500,000 | 4–9 months |
| Full custom AI layer with FHIR pipeline, multiple use cases, compliance infrastructure | $500,000–$2,000,000+ | 9–18 months |

The largest cost drivers are data pipeline complexity (normalization, HL7 v2 translation, legacy data migration), model selection and fine-tuning on institutional data, HIPAA compliance infrastructure (audit logging, BAA management, PHI handling validation, penetration testing), and, finally, clinical validation and change management, which initial project scopes consistently underbudget.

Return on investment benchmarks vary by use case. Ambient documentation delivers the fastest measurable payback — typically within 6 to 12 months based on clinician time recovered. Revenue cycle automation also delivers fast payback through denial rate reduction. Predictive analytics ROI is harder to attribute directly, but is measurable through readmission rates and length-of-stay reductions against pre-implementation baselines.
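Payback period is up-front cost divided by net monthly benefit. A minimal sketch for ambient documentation, where every input (clinician count, hours saved, clinic days, run cost, and especially the dollar value of a recovered hour) is a hypothetical assumption you would replace with your own pre-implementation baseline:

```python
def payback_months(implementation_cost: float, clinicians: int, hours_saved_per_day: float,
                   clinic_days_per_month: int, value_per_hour: float,
                   monthly_run_cost: float = 0.0) -> float:
    """Months until cumulative net benefit covers the up-front cost (no discounting)."""
    monthly_benefit = clinicians * hours_saved_per_day * clinic_days_per_month * value_per_hour
    net = monthly_benefit - monthly_run_cost
    if net <= 0:
        return float("inf")  # never pays back under these assumptions
    return implementation_cost / net
```

With 40 clinicians each recovering one hour per clinic day, 16 clinic days a month, $150 per hour, and $16,000 a month in licensing and support, a $500,000 implementation pays back in 6.25 months. If clinicians actually recover half that time, payback stretches to about 15.6 months, which is why measuring the time baseline before rollout matters.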

Use TATEEDA’s custom software cost calculator to generate a project-specific estimate based on your EHR environment, use case complexity, and compliance requirements.

How TATEEDA Builds AI Into Healthcare Systems

TATEEDA has built production AI integrations for healthcare organizations across clinical data capture, remote monitoring, and predictive analytics — all under HIPAA compliance frameworks with BAAs signed before any code is written.

For VisionTree, a healthcare data company managing electronic data capture (EDC) for clinical research, TATEEDA built a forms system processing 40 to 50 schema updates per release cycle, with NLP-driven normalization of structured and unstructured clinical data from hospital and clinic environments. The architecture was designed to accommodate the rapid iteration pace of clinical research while maintaining immutable audit trails for regulatory submissions. See the VisionTree EDC software case study for the technical breakdown.

For VentriLink, TATEEDA built a remote heart monitoring platform that captures real-time ECG data from connected devices, runs arrhythmia detection algorithms on the continuous data stream, and logs clinical events into a physician-facing application with EHR-ready output. That project combined IoT data ingestion, real-time AI inference, and clinical workflow integration in a HIPAA-compliant architecture. The VentriLink case study covers the technical architecture and outcomes in detail.

Our approach to every AI-EHR engagement starts with the compliance architecture. The HIPAA BAA is signed before any PHI enters the system, and PHI handling is designed before model selection is finalized.

Our 100+ senior engineers (no juniors on client-facing work) can integrate with your team within 48 to 72 hours. Our HIPAA-compliant healthcare software development practice covers the full stack, from FHIR data pipelines to clinical validation and ongoing compliance monitoring.

If you are evaluating the right integration architecture for your environment, our healthcare IT consulting team can help you scope the approach before a line of code is written.

The Right Architecture Starts Before the First Line of Code

If you are evaluating whether to extend your EHR vendor’s AI, build a custom integration, or design a HIPAA-compliant AI pipeline from scratch — our architects can help you

Frequently Asked Questions

What is AI EHR integration?

AI EHR integration is the practice of embedding artificial intelligence capabilities — including NLP, predictive analytics, clinical decision support, and automated documentation — into EHR or EMR systems. Integration may use the EHR vendor’s native AI features, custom AI modules built on the EHR’s FHIR API layer, or a combination of both.

Is AI in EHR systems HIPAA compliant?

AI in EHR systems can be HIPAA-compliant, but compliance is not automatic. Any AI model that processes, stores, or transmits PHI must operate under a signed BAA with every vendor in the data flow. The AI integration must implement RBAC, PHI minimization, audit logging with six-year retention, and encryption at rest and in transit. HIPAA compliance is an architectural outcome — it requires deliberate design decisions at every layer of the system.

How long does it take to integrate AI into an existing EHR?

Timelines range from two months for configuring native EHR AI features to 18 months or more for full custom AI layer development with FHIR data pipelines, model training, and compliance infrastructure. The largest time variables are data normalization complexity, clinical validation scope, and the extent of change management required for clinician adoption.

What is the difference between Epic’s native AI and custom AI development?

Epic’s native AI uses models pre-trained on large, de-identified datasets and covers general workflows. Custom AI development, built on Epic’s FHIR APIs, allows healthcare organizations to train models on their own patient population’s data, implement specialty-specific documentation and coding logic, and integrate with systems outside Epic’s ecosystem. Custom development carries a higher upfront cost but delivers higher specificity for complex clinical environments.

What FHIR version should AI-EHR integrations use?

HL7 FHIR R4 is the current standard for US healthcare data exchange and the version required under the CMS and ONC interoperability rules. New AI-EHR integrations should be built on FHIR R4 with SMART on FHIR authorization. Systems running HL7 v2 require translation middleware to expose data in FHIR format before AI systems can consume it.

What are the most common reasons AI-EHR implementations fail?

Clinician adoption failure is the most common: AI tools that do not fit the actual clinical workflow get abandoned regardless of technical quality. Data quality problems are the second most common: models deployed on fragmented, unnormalized EHR data produce unreliable outputs. Compliance failures are less common but carry the highest consequence: sending PHI to an AI vendor that has not signed a BAA is a HIPAA violation regardless of the AI’s clinical accuracy.