The Role of AI Agents in Healthcare: Outcomes, Risks, and the Cost of Waiting

Key Takeaways:

- AI agents in healthcare automate documentation, prior authorization, patient engagement, and revenue cycle workflows, with documented production outcomes rather than projections.

- The technology is largely ready. The failure cases are organizational: absent review gates, poor EHR data quality, clinician resistance, and unclear liability structures.

- Hospital leaders evaluating AI agents must ask specific questions about failure modes, HIPAA BAA scope, and vendor liability, not just watch capability demos.

- Community hospitals (<300 beds) should start with one workflow. Multi-workflow deployments require governance infrastructure that most organizations don’t yet have.

- The cost of waiting is measurable: organizations running agentic AI pilots today are building institutional expertise and generating outcomes data their competitors don’t have.

Physicians spend two hours on EHR documentation and administrative work for every one hour of direct patient care. That ratio has not improved in a decade — it has gotten worse. And it is the single most cited driver of physician burnout, staff turnover, and the quiet erosion of clinical capacity at US health systems.

AI agents are changing that ratio. Not AI tools that suggest text. Not predictive analytics dashboards. AI agents: autonomous software systems that plan multi-step tasks, execute them across connected systems, verify results, and complete entire workflows without step-by-step human instruction for each action. The role of AI agents in healthcare is expanding fast, and for executives at hospital systems, health insurance companies, and health-tech organizations, the window for strategic positioning is closing.

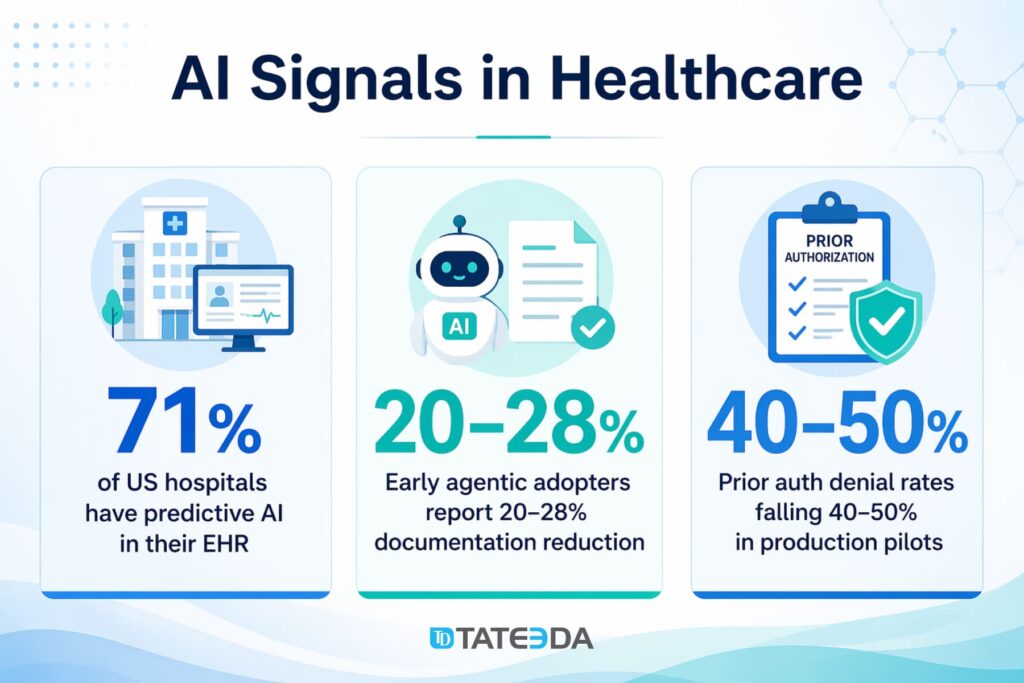

71% of US hospitals reported predictive AI integrated with their Electronic Health Record (EHR) in 2024, up from 66% the year before. The organizations now moving to agentic AI — one tier above predictive AI in autonomy and workflow scope — are reporting 20–28% reductions in documentation time and measurable drops in prior authorization denial rates. Those waiting are falling behind in physician satisfaction, payer performance, and administrative cost per encounter.

This article is written for healthcare executives, not engineers. It covers what AI agents actually do, what the business data shows, where they fail, what the regulatory exposure looks like from a leadership perspective, and how to evaluate whether your organization is ready to move.

What AI Agents Actually Do in Healthcare

AI agents in healthcare are autonomous software systems that perform multi-step clinical and administrative workflows — including writing documentation, managing prior authorizations, coordinating patient communications, and routing clinical alerts — without requiring step-by-step human instruction for each action.

That definition matters because it separates AI agents from two technologies frequently conflated with them. Predictive AI analyzes data and surfaces recommendations for humans to act on. Copilot-style AI tools assist humans in performing tasks. AI agents plan, execute, and complete workflows autonomously, then report outcomes for human review.

The five primary roles AI agents perform in US healthcare settings today:

- Clinical documentation: Ambient AI listens to patient-physician conversations and generates complete clinical notes in the physician’s voice. The physician reviews and co-signs; they do not write from scratch.

- Prior authorization and claims management: Agents assemble patient records, match clinical criteria against payer requirements, submit prior authorization requests, track submission status, and flag missing documentation before submission rather than after rejection.

- Patient communication and care coordination: Agents handle appointment reminders, post-discharge follow-up, medication adherence outreach, and Remote Patient Monitoring (RPM) alert escalation without a human staff member placing each contact.

- Clinical decision support routing: Agents direct time-sensitive alerts (abnormal lab values, medication interaction flags, deterioration scores) to the appropriate clinician based on role, shift, and patient assignment — rather than broadcasting to everyone on the floor.

- Revenue cycle automation: Agents process claims, identify coding errors before submission, and manage denial follow-up workflows across the billing cycle.
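The clinical decision support routing role in the list above can be pictured as a small filtering step. The sketch below is illustrative only: the `Clinician` record, the `ROLE_FOR_ALERT` mapping, and the fallback rule are assumptions made for this example, not a description of any vendor’s product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clinician:
    name: str
    role: str                # e.g. "physician", "pharmacist", "nurse"
    on_shift: bool
    patient_ids: frozenset   # patients currently assigned to this clinician


# Hypothetical mapping from alert type to the role that should act on it.
ROLE_FOR_ALERT = {
    "abnormal_lab": "physician",
    "medication_interaction": "pharmacist",
    "deterioration_score": "nurse",
}


def route_alert(alert_type, patient_id, staff):
    """Pick recipients by role, shift, and patient assignment."""
    role = ROLE_FOR_ALERT[alert_type]
    on_shift = [c for c in staff if c.on_shift and c.role == role]
    assigned = [c for c in on_shift if patient_id in c.patient_ids]
    # Assumed fallback: if nobody in that role is assigned to the patient,
    # notify every on-shift clinician in the role rather than dropping the alert.
    return assigned or on_shift


staff = [
    Clinician("Dr. A", "physician", True, frozenset({"p1"})),
    Clinician("Dr. B", "physician", True, frozenset({"p2"})),
    Clinician("Dr. C", "physician", False, frozenset({"p1"})),
    Clinician("RN D", "nurse", True, frozenset({"p1", "p2"})),
]

recipients = route_alert("abnormal_lab", "p1", staff)
print([c.name for c in recipients])
```

Here only the on-shift physician assigned to patient `p1` receives the abnormal lab alert, which is the opposite of broadcasting to everyone on the floor.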

The Business Case: What Healthcare Organizations Are Reporting

Documentation and clinical productivity

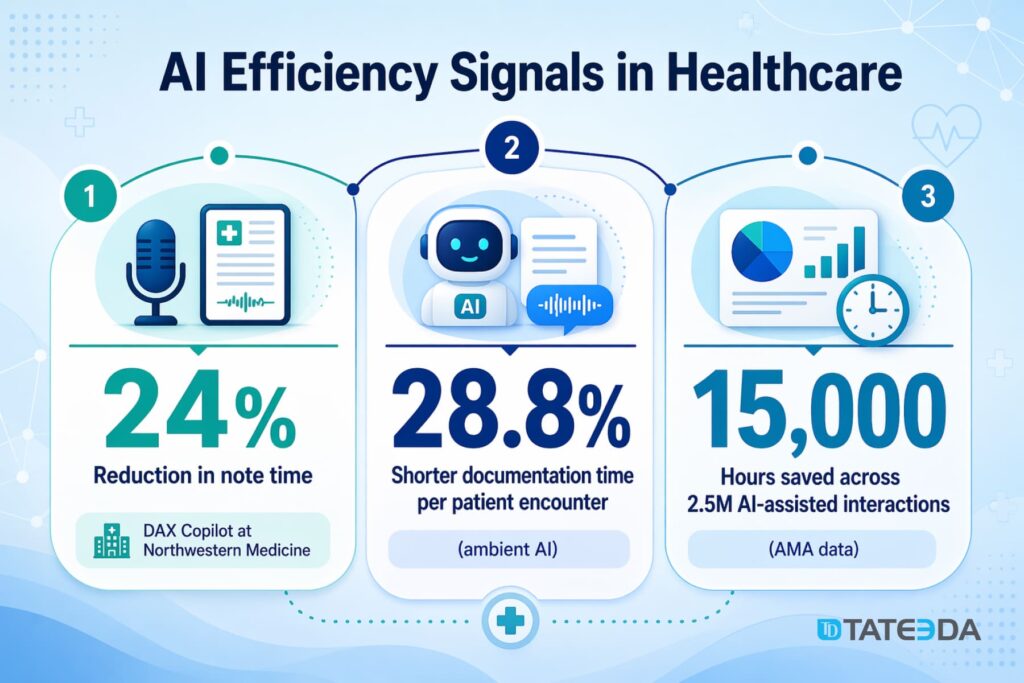

The most mature agentic AI use case in healthcare is ambient clinical documentation, and the outcomes data is substantial. Microsoft’s DAX Copilot deployment at Northwestern Medicine produced a 24% reduction in time spent on clinical notes and a 17% reduction in after-hours documentation. Across 2.5 million AI-assisted interactions tracked by the American Medical Association (AMA), total time savings across participating organizations reached 15,000 hours. A separate analysis found a 28.8% reduction in documentation time per patient encounter.

Consider what that number means in practice. A primary care physician seeing 20 patients per day who recovers 28.8% of per-encounter documentation time gets roughly 90 minutes back daily (an estimate that assumes about 15 minutes of documentation per encounter). Across a 250-day clinical year, that is 375 hours, more than nine 40-hour work weeks, returned to direct patient care.
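The arithmetic behind that estimate can be checked in a few lines. This is a back-of-the-envelope sketch: the 40-hour work week is an assumption, and the per-encounter baseline is back-solved from the article’s own figures rather than taken from a cited source.

```python
PATIENTS_PER_DAY = 20
DOC_TIME_REDUCTION = 0.288      # 28.8% reduction per encounter
MINUTES_SAVED_PER_DAY = 90      # the article's rounded daily estimate
CLINICAL_DAYS_PER_YEAR = 250
HOURS_PER_WORK_WEEK = 40        # assumption used to convert hours to weeks


def implied_baseline_minutes(saved_per_day, patients, reduction):
    """Documentation minutes per encounter implied by the daily savings."""
    return saved_per_day / (patients * reduction)


def annual_hours_saved(saved_per_day, clinical_days):
    """Convert daily minutes saved into hours per clinical year."""
    return saved_per_day * clinical_days / 60


baseline = implied_baseline_minutes(
    MINUTES_SAVED_PER_DAY, PATIENTS_PER_DAY, DOC_TIME_REDUCTION
)
hours = annual_hours_saved(MINUTES_SAVED_PER_DAY, CLINICAL_DAYS_PER_YEAR)
weeks = hours / HOURS_PER_WORK_WEEK

print(f"Implied documentation time per encounter: {baseline:.1f} min")
print(f"Hours returned per year: {hours:.0f}")
print(f"Equivalent 40-hour weeks: {weeks:.1f}")
```

The 90-minute figure only holds if a physician currently spends a little over 15 minutes documenting each encounter. Organizations with a different baseline should substitute their own number before building a business case on it.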

| Real-World Outcome: Dr. Maria Chen, chief medical officer at a 400-bed community health system in the Pacific Northwest, authorized a pilot of ambient documentation for her emergency department after reviewing equivalent data in late 2024. “The number I kept coming back to wasn’t the time savings,” she said during a post-pilot review. “It was those physicians who felt crushed by documentation who were the same ones leaving. If ambient AI keeps two physicians from burning out and resigning, the ROI calculation is immediate.” The pilot launched with 12 emergency physicians in January 2025. At 90 days, physician satisfaction scores related to documentation burden had improved 31 percentage points. Turnover in that group during the pilot period was zero. |

Revenue cycle and prior authorization

Prior authorization is the most expensive administrative workflow in US healthcare, consuming an estimated $13 billion annually in administrative labor. Denial rates for manually submitted prior auths run 18–22% at most health systems. AI agents that assemble and verify prior auth submissions before they leave the organization — catching missing documentation at the point of submission rather than after rejection — are reporting denial rate reductions of 40–50% in early production deployments. Faster approvals mean faster reimbursements and measurably lower revenue cycle drag.
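To make the idea of catching missing documentation before submission concrete, here is a minimal sketch of a pre-submission check. The procedure code, document names, and the `PAYER_REQUIREMENTS` table are hypothetical placeholders; real payer criteria are far more detailed and change over time.

```python
# Hypothetical payer rules: procedure code -> documents the payer expects.
PAYER_REQUIREMENTS = {
    "MRI-LUMBAR": [
        "clinical_notes",
        "conservative_therapy_record",
        "imaging_order",
    ],
}


def check_prior_auth(request, requirements=PAYER_REQUIREMENTS):
    """Decide whether a request is complete enough to leave the organization."""
    code = request["procedure_code"]
    if code not in requirements:
        # Unknown rules go to a human instead of being submitted blind.
        return {
            "status": "hold_for_review",
            "reason": "no rules on file for this code",
            "missing": [],
        }
    attached = set(request.get("attachments", []))
    missing = [doc for doc in requirements[code] if doc not in attached]
    if missing:
        return {
            "status": "hold_for_review",
            "reason": "missing documentation",
            "missing": missing,
        }
    return {"status": "ready_to_submit", "reason": "", "missing": []}


incomplete = {
    "procedure_code": "MRI-LUMBAR",
    "attachments": ["clinical_notes", "imaging_order"],
}
print(check_prior_auth(incomplete))
```

The design choice worth noting is that anything the check cannot evaluate is held for a person rather than submitted, so gaps surface inside the organization instead of as payer denials.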

For technical details on the agent architecture behind prior auth automation, see TATEEDA’s Planner-Executor-Verifier agents in healthcare — the implementation guide for engineering teams building these workflows.

Patient engagement and care adherence

AI agent-managed follow-up workflows — post-discharge check-ins, medication adherence outreach, chronic disease management contacts — consistently achieve completion rates three to four times higher than manual outreach programs constrained by staffing capacity. For organizations managing population health contracts with value-based care payers, that completion rate translates directly into quality metrics and shared savings distributions.

Where Agentic AI Fails in Healthcare: The Cases Your Vendor Won’t Show You

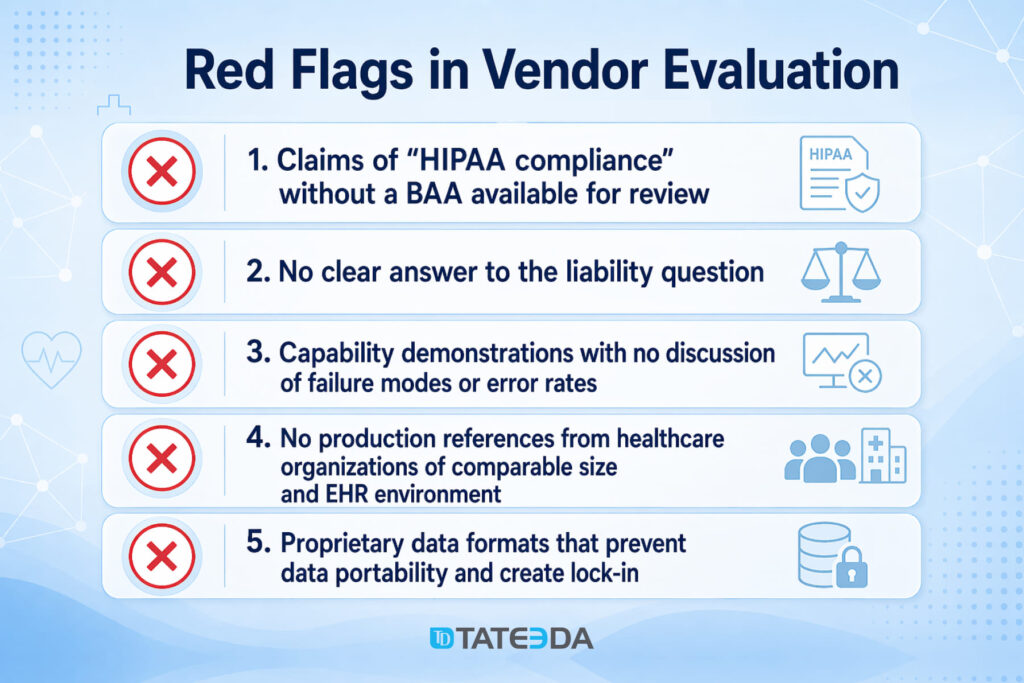

Capability demonstrations don’t show failure modes. A vendor’s discovery call will not cover what happens when an AI agent submits an incomplete prior authorization, hallucinates a dosing detail, or runs silently degraded because your EHR data quality has a problem. This section does.

Silent workflow failures

The most dangerous failure mode in agentic AI is not dramatic — it is invisible. A prior authorization agent that submits incomplete requests will not crash. It will continue operating while your denial rate climbs incrementally, and no one connects the pattern to the agent for weeks.

| Failure Case: Prior Auth Agent. In early 2025, a regional health system discovered that its prior authorization agent had been silently failing on a subset of commercial payer submissions for six weeks. A payer had updated its API response format; the agent’s parsing logic didn’t handle the change, and the agent kept submitting requests without triggering any error alert. Claims processors noticed the denial pattern in week seven. The fix took two days. Detection took six weeks and accumulated roughly $380,000 in delayed reimbursements. |

The lesson is architectural, not incidental. Agentic AI deployments require monitoring and alerting infrastructure alongside the agent. Output auditing, anomaly detection on submission success rates, and exception queues for human review are not optional features. They are the difference between an agent that fails loudly and one that fails quietly for weeks.
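As one illustration of anomaly detection on submission outcomes, the sketch below compares the current week’s denial rate against a recent baseline and raises a flag when it drifts well outside the normal range. The z-score approach, the threshold of 3, and the sample rates are assumptions chosen for the example; a production system would likely track this per payer and per workflow.

```python
from statistics import mean, pstdev


def denial_rate_alert(baseline_rates, current_rate, z_threshold=3.0, min_std=0.01):
    """Flag the current denial rate if it sits far above the baseline.

    baseline_rates: weekly denial rates (0.0 to 1.0) from a healthy period.
    min_std: floor on the spread so a very stable baseline does not
             turn tiny fluctuations into alerts.
    """
    mu = mean(baseline_rates)
    sigma = max(pstdev(baseline_rates), min_std)
    z = (current_rate - mu) / sigma
    return {"alert": z > z_threshold, "baseline": mu, "z_score": z}


# Hypothetical weekly denial rates before and after a payer format change.
healthy_weeks = [0.18, 0.20, 0.19, 0.21, 0.20]

print(denial_rate_alert(healthy_weeks, 0.20))   # a normal week
print(denial_rate_alert(healthy_weeks, 0.35))   # a week after silent breakage
```

A check like this, run weekly or daily and wired to an exception queue, is what shortens detection from weeks to days in a scenario like the one described above.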

Hallucination risk in clinical contexts

AI agents that summarize patient records, generate documentation, or provide clinical decision support are operating in environments where hallucinated output — plausible-sounding content that is factually incorrect — can produce patient harm. Published cases of AI documentation tools generating incorrect medication histories, fabricated allergy records, and misattributed diagnoses already exist in peer-reviewed literature.

| The critical success factor is human-in-the-loop verification at every decision point that affects patient safety. An agent that drafts a clinical note is clinically appropriate. An agent whose output is co-signed without physician review is a liability event in progress. |

Organizations deploying AI agents for clinical documentation must establish mandatory review protocols before co-signature — and must audit compliance with those protocols. Notes completed without review are not an efficiency win. They are an institutional exposure.
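A mandatory review protocol can be enforced in software as well as in policy. The following sketch shows one way a system might refuse a co-signature until a review has been recorded, while keeping an audit trail of every attempt. The `DraftNote` class, its fields, and the `ReviewRequiredError` exception are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


class ReviewRequiredError(Exception):
    """Raised when a co-signature is attempted without a recorded review."""


@dataclass
class DraftNote:
    note_id: str
    text: str
    reviewed_by: Optional[str] = None
    cosigned_by: Optional[str] = None
    audit_log: List[str] = field(default_factory=list)

    def _log(self, event: str) -> None:
        # Timestamp every event so compliance can audit the sequence later.
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{stamp} {event}")

    def mark_reviewed(self, physician_id: str) -> None:
        self.reviewed_by = physician_id
        self._log(f"reviewed by {physician_id}")

    def cosign(self, physician_id: str) -> None:
        # Gate: the same physician must have recorded a review first.
        if self.reviewed_by != physician_id:
            self._log(f"blocked co-sign attempt by {physician_id}")
            raise ReviewRequiredError(f"note {self.note_id} has not been reviewed")
        self.cosigned_by = physician_id
        self._log(f"co-signed by {physician_id}")


note = DraftNote("note-001", "AI-drafted visit summary")
try:
    note.cosign("dr-lee")
except ReviewRequiredError as err:
    print("Blocked:", err)

note.mark_reviewed("dr-lee")
note.cosign("dr-lee")
print(len(note.audit_log), "audit entries")
```

A gate like this cannot prove that a physician actually read the note, only that a review step was recorded. That is why the audit log matters: it gives compliance teams the data to spot patterns such as reviews completed in implausibly short times.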

TATEEDA’s Planner-Executor-Verifier architecture builds mandatory verification gates into the agent design specifically to prevent this failure mode from reaching the clinical record.

Integration failures from poor EHR data quality

AI agents that depend on structured EHR data — prior auth agents, clinical decision support agents, care coordination agents — fail when that data is incomplete, inconsistent, or outdated. The ceiling for what any AI agent can deliver is determined by the quality of the data infrastructure it is operating on.

Organizations that have not invested in data governance, coding accuracy, and EHR hygiene will discover this constraint through degraded agent outputs, not through a clear error message.

The clinician workaround problem

An AI agent that physicians route around is not an efficiency gain — it is a liability with a user interface. If clinicians are copy-pasting AI documentation without reviewing it, editing AI output so extensively that the tool adds no time savings, or bypassing the system entirely because the interface creates friction, the deployment has failed on its most important metric.

| Adoption Recovery Story: James Reyes, an internist with 22 years of practice, stopped using his hospital system’s ambient documentation AI after three days. The system had been trained on documentation conventions from a different health system, and its note language consistently differed from his established patterns. He spent more time editing than he would have spent writing from scratch. His feedback, combined with similar reports from 14 colleagues, led the hospital’s IT team to request model fine-tuning from the vendor. Six weeks after the update, usage among previously resistant physicians jumped from 28% to 71%. Clinician resistance is frequently feedback, not obstruction. Physician champion programs, structured feedback loops, and vendor responsiveness to customization are what separate a 30% adoption rate from a 70% one. |

Regulatory and Liability Considerations for Hospital Leadership

FDA’s AI/ML Software Action Plan and what it means for procurement

The Food and Drug Administration’s (FDA) AI/ML-Based Software as a Medical Device (SaMD) Action Plan sets out the agency’s approach to overseeing AI systems that qualify as SaMD, meaning software intended for a medical purpose that is not part of a hardware medical device. An AI agent that provides diagnostic recommendations or directly influences treatment decisions likely qualifies as SaMD and requires FDA clearance. As of 2024, more than 1,200 AI/ML-enabled medical devices had received FDA clearance or approval.

An AI agent that automates prior authorization, documentation drafting, or appointment scheduling is generally not considered a medical device under current FDA guidance. Hospital executives need this distinction clearly documented in their procurement process. Using an FDA-regulated AI agent outside its cleared indication creates institutional liability. Using an uncleared agent in a clinical function that requires clearance is a compliance violation.

Who is liable when an AI agent makes an error

This is the question every hospital CMO and CFO should ask before signing a contract with an AI agent vendor — and most vendor agreements will not answer it clearly.

The current liability landscape for healthcare AI is unsettled. Physicians who co-sign AI-generated documentation are legally responsible for its accuracy, regardless of whether they generated it. Health systems that deploy AI agents without adequate oversight protocols absorb institutional liability for adverse outcomes connected to those agents.

Vendor terms of service routinely disclaim liability for clinical errors.

Before deployment, your legal and compliance team should obtain specific answers to: What does the vendor’s BAA cover? What is the vendor’s liability scope for documentation errors? What audit trail does the system maintain? What are the indemnification provisions if an AI-generated record contributes to a malpractice claim?

HIPAA BAA requirements for AI vendors

Any AI agent vendor that processes, stores, or transmits Protected Health Information (PHI) on behalf of a covered entity must execute a Business Associate Agreement (BAA). This is non-negotiable under the Health Insurance Portability and Accountability Act (HIPAA).

A vendor claiming “HIPAA compliance” without a BAA available is not HIPAA-compliant for your purposes. A vendor whose BAA does not address AI model training, leaving open whether your PHI may be used to retrain or fine-tune its model, has a significant contractual gap your compliance team needs to evaluate before signature.

Ask directly: Does your BAA cover model training? Can our PHI be used to train or fine-tune your AI systems? What data residency requirements apply?

CMS interoperability rules and AI agents

Centers for Medicare & Medicaid Services (CMS) interoperability rules require regulated payers to give patients access to their health data through standard APIs. AI agents that access payer data, process prior authorization requests, or interface with CMS-regulated systems must operate within these requirements.

In practical terms, agents built on top of Health Level Seven (HL7) FHIR R4-compliant APIs are designed to satisfy these requirements. Agents operating outside the standard interoperability framework create compliance exposure.

Organizational Adoption: Why the Technology Is the Easy Part

The right entry point by organization size

Not every organization should pursue the same starting workflow. The scope of an agentic AI deployment that a community hospital with 200 beds can manage successfully differs fundamentally from what a national health system can absorb without governance infrastructure in place.

| Organization size | Recommended entry point |
|---|---|
| <300 beds | Start with one workflow: ambient documentation or prior authorization, not both simultaneously. The governance infrastructure required for each workflow (review protocols, exception queues, staff training, monitoring dashboards) must be built before deployment. Layering two workflows without governance for either multiplies failure risk. |
| 300–1,000 beds | Multi-workflow pilots are feasible if centralized governance exists or is established as a prerequisite. Define review protocols, monitoring standards, and escalation paths before deployment begins, not during troubleshooting. |
| >1,000 beds | Start with one workflow: ambient documentation or prior authorization, not both simultaneously. The governance infrastructure required for each workflow (review protocols, exception queues, staff training, and monitoring dashboards) must be built before deployment. Layering two workflows without governance for either multiplies failure risk. |

Addressing clinician adoption before deployment

Clinician adoption is not a training problem; it is a design problem. The health systems reporting 70%+ physician adoption of ambient documentation AI share a pattern: they invested in a physician champion program, established a structured feedback loop with the vendor, and treated the first 90 days as an iterative design exercise rather than a go-live event.

The organizations reporting 25–30% adoption treated rollout as a software installation. The technology was identical in both cases.

The practical implication: budget for change management before and during deployment, not after adoption fails. Physician champion compensation, feedback collection infrastructure, and vendor SLA commitments on model customization requests are operational requirements, not afterthoughts.

How to Evaluate Agentic AI Vendors and Partners

Whether you are evaluating a SaaS AI agent product or a custom development partner, the evaluation criteria should be the same. Capability demos are necessary but not sufficient.

Questions to ask every AI agent vendor before signing:

- What is your primary failure mode? How does your system behave when it encounters unexpected data formats, API changes from payers, or workflow exceptions it was not trained on?

- Does your BAA cover model training? Can our PHI be used to fine-tune your AI systems?

- What monitoring and alerting does your platform provide natively? How would my team detect when the agent is producing incorrect outputs?

- What is your audit trail format? Can we produce a complete decision log for a compliance review or litigation hold?

- What is your FDA clearance status for each agent function, and what is the cleared indication?

- Who is liable for errors in AI-generated clinical documentation that contribute to a patient harm event?

- What is your average time to resolve a critical production failure?

Build vs. buy for regulated workflows

For AI agents operating in regulated clinical or administrative workflows — anything touching PHI, FDA-regulated functions, or California labor compliance under the California Private Attorneys General Act (PAGA) — the SaaS product market is still maturing. Most commercial AI agent products are built for horizontal enterprise use cases and require significant customization to meet healthcare compliance requirements.

Organizations with complex EHR environments, multi-state compliance obligations, or specific workflow requirements often benefit from a development partner who builds a HIPAA-compliant agentic system to specification rather than adapting a general-purpose product.

TATEEDA’s healthcare software development team builds custom agentic AI with BAAs in place before the first line of code, compliance-by-design architecture, and the healthcare domain expertise to build verification gates that your clinical staff will actually trust. For organizations that cannot afford to get compliance wrong, talk to our team.

FAQ: AI Agents in Healthcare

What is the difference between AI agents and traditional healthcare AI?

Traditional healthcare AI (predictive analytics, risk stratification models, clinical alert tools) analyzes data and surfaces recommendations for humans to act on. AI agents go further: they plan multi-step workflows, execute actions across connected systems, and complete tasks autonomously. An AI agent for prior authorization doesn’t just flag a case as high-risk. It assembles the clinical documentation, submits the request to the payer, monitors the status, and alerts a human when action is required.

Are AI agents FDA-regulated?

It depends on their function. AI agents that perform or support medical functions — diagnostic recommendations, treatment suggestions, clinical decision influence — are likely Software as a Medical Device (SaMD) under the FDA’s AI/ML Software Action Plan and may require clearance. AI agents that automate administrative functions — documentation drafting, prior authorization submission, appointment scheduling, and billing — are generally not SaMD under current FDA guidance. Procurement decisions should reflect this distinction clearly.

Do I need a HIPAA Business Associate Agreement with an AI agent vendor?

Yes, if the AI agent accesses, processes, or stores PHI. The BAA must cover not just data storage but any use of PHI in the vendor’s model training, fine-tuning, or analytics pipelines. Vendors who offer a standard BAA that excludes model training data are creating a contractual gap your compliance team should close before deployment.

How long does it take to deploy an AI agent in a healthcare environment?

A scoped single-workflow deployment — ambient documentation integration with a clean EHR environment and defined governance protocols — can be configured and running in production within 60–90 days. Complex multi-workflow platforms with custom FHIR integrations, compliance documentation, and staff training programs require five to nine months. The variable that most organizations underestimate is the internal change management timeline — physician training, review protocol design, and governance framework development — not the technical build.

What are the biggest failure risks for healthcare AI agents?

Based on production deployments, the five highest-risk failure points are: (1) absent or inadequate human review gates on clinical outputs; (2) poor underlying EHR data quality producing unreliable agent outputs; (3) silent failure modes not detected by monitoring; (4) vendor BAA gaps creating HIPAA exposure; and (5) low clinician adoption that defeats the agent’s purpose while creating liability for outputs no one reviews.

| The Cost of Waiting: Organizations running agentic AI pilots today are building institutional expertise, developing governance frameworks, and generating outcomes data their competitors don’t have. That capability compounds. The gap between early movers and late adopters in healthcare AI is not static; it widens with each operational quarter. |