AI Chatbots in Healthcare: Use Cases, HIPAA Requirements, and What Actually Works

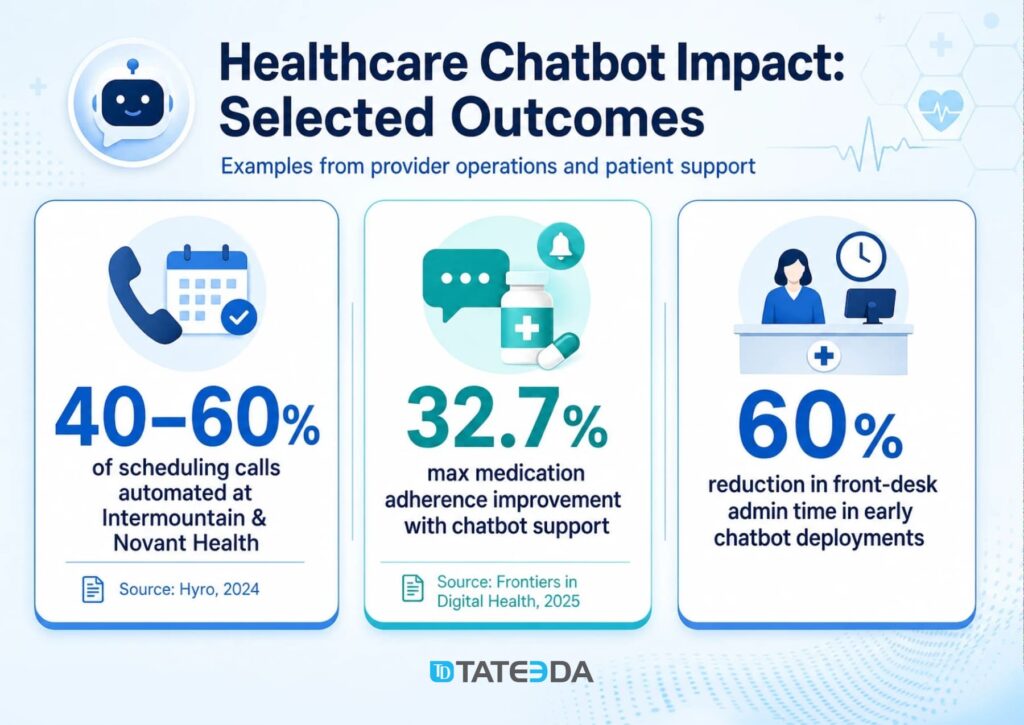

Key Takeaways:

✅ 32% of US consumers already use AI chatbots for health information (2025); the question for health systems is not whether to deploy, but how to deploy compliantly.

✅ Any chatbot that accesses, processes, or stores PHI requires a signed BAA, and that BAA must explicitly address whether the vendor uses patient conversations to train its model.

✅ Symptom triage chatbots that recommend a care pathway may meet the FDA’s definition of Software as a Medical Device (SaMD); scheduling and billing chatbots generally do not.

✅ Real-world deployments show measurable ROI: Hyro automates 40–60% of scheduling calls, and peer-reviewed data show 6.7–32.7% medication adherence improvement with chatbot support.

✅ The biggest implementation failure is not technology. It is deploying without live EHR integration, which creates stale data, duplicate appointments, and staff workarounds that kill adoption.

If you require technical assistance in developing and integrating a custom chatbot solution, please contact our AI experts for individualized project consultation!

Nearly one in three US consumers (32%) now uses AI chatbots for health information, according to Rock Health’s 2025 consumer survey. That is current behavior, not a projection. Health systems that haven’t deployed AI chatbots aren’t shielding their patients from AI; they’re leaving them to use unvetted consumer tools instead.

AI chatbots in healthcare are conversational software systems that interact with patients, clinicians, or staff via natural language to handle clinical queries, administrative tasks, and care support workflows. They range from simple appointment scheduling bots to clinician-facing assistants that surface drug interaction data on demand. The market reached $1.6B in 2024 and is projected to grow to $7.1B by 2030.

This guide is written for CTOs, IT directors, and digital health product managers who are past the “what is a chatbot” stage and need answers to harder questions: what does a compliant vendor contract actually require, how does EHR integration work in practice, what are the failure modes nobody warns you about, and when should you build rather than buy. You’ll leave with a working framework you can take into your compliance, legal, and clinical informatics reviews.


What Are AI Chatbots in Healthcare?

AI chatbots in healthcare are software applications that use natural language processing (NLP) and large language models (LLMs) to conduct conversations with patients, staff, or clinicians in real time. They interpret user input in natural language, retrieve or process relevant information from connected systems, and respond in plain language without requiring the user to navigate a form or menu.

Healthcare chatbots currently handle six primary use cases:

- Patient triage and symptom assessment: Collecting symptom information, applying clinical protocols to assess urgency, and routing patients to the appropriate care level (ED, urgent care, primary care, self-care).

- Appointment scheduling and care navigation: Booking, rescheduling, and canceling appointments; answering questions about location, preparation, and insurance; reducing call center volume.

- Medication adherence and chronic disease support: Sending personalized reminders, answering medication questions, tracking self-reported adherence data, and flagging non-adherence to care teams.

- Mental health and emotional support: Delivering evidence-based cognitive behavioral therapy (CBT) exercises, stress management tools, and low-acuity emotional support (not clinical diagnosis or crisis intervention).

- Administrative automation: Insurance eligibility verification, billing inquiries, benefits explanations, and pre-visit form completion — tasks that consume front-desk hours without requiring clinical judgment.

- Clinician-facing Q&A and clinical decision support: Surfacing drug interaction data, clinical protocol references, and patient history summaries on demand for clinical staff.

How AI Chatbots Differ from AI Agents in Healthcare

This distinction matters for procurement, compliance, and architecture decisions. The two categories are often conflated in vendor marketing and frequently confused in search results — but they are architecturally distinct.

| Dimension | AI Chatbot | AI Agent |
|---|---|---|
| Core function | Conversational interface; responds to user input | Autonomous workflow executor; acts without per-step instruction |
| Operates within | A dialogue session | Multi-step workflows across connected systems |
| Healthcare examples | Scheduling bot, symptom triage, medication reminders, FAQ | Prior authorization, documentation generation, care gap closure |
| Human in the loop | User drives each exchange | Reviews outcome; does not direct each step |
| Typical deployment | Patient-facing portal, call center, clinician Q&A | EHR-integrated backend workflows, revenue cycle |

The two categories are complementary, not competing. A chatbot collects a patient’s symptom report; an agent processes that report against clinical criteria and schedules the appropriate follow-up. TATEEDA has covered the role of AI agents in healthcare and agentic AI trends in healthcare in depth. This article focuses on the conversational layer.


AI Chatbot Use Cases in Healthcare: What the Data Shows

Patient Triage and Scheduling

Hyro, a conversational AI platform focused on healthcare, handles 40–60% of inbound scheduling calls at Intermountain Health and Novant Health — health systems that process millions of appointments annually. The automation rate is not a pilot number; it reflects production deployments across multiple facilities.

Case Study: Scheduling Automation

A regional health system in the Southwest deployed a scheduling chatbot in its call center in early 2025. The after-hours line, previously unanswered, was losing an estimated 12% of appointment volume to no-shows and failed bookings. Within 60 days of deployment, after-hours booking completion went from 0% to 68%, and the front desk answered 40% fewer calls during peak hours. Patients who reached the chatbot at 10 PM booked their appointments directly, with no callback and no morning hold queue.

Early healthcare chatbot deployments report a 60% reduction in front-desk administrative time for the use cases they handle. The ROI calculation for a 10-person front-desk operation handling 800 calls per day is not complex.
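The arithmetic can be sketched in a few lines of Python. The 800 calls per day and the 10-person team come from the example above; the deflection rate, average handle time, loaded hourly cost, and 8-hour shift are hypothetical placeholders, so substitute your own call-center data before using the result.

```python
from dataclasses import dataclass


@dataclass
class FrontDeskInputs:
    calls_per_day: int          # from the example above: 800
    staff_count: int            # from the example above: 10
    deflection_rate: float      # hypothetical: share of calls the chatbot fully resolves
    minutes_per_call: float     # hypothetical: average handle time per call
    loaded_hourly_cost: float   # hypothetical: wage plus benefits, in USD


SHIFT_HOURS = 8  # hypothetical shift length used for FTE conversion


def estimate_daily_savings(inputs: FrontDeskInputs) -> dict:
    """Estimate staff hours and labor cost freed per day by call deflection."""
    deflected_calls = inputs.calls_per_day * inputs.deflection_rate
    hours_freed = deflected_calls * inputs.minutes_per_call / 60
    return {
        "deflected_calls": deflected_calls,
        "hours_freed": hours_freed,
        "fte_equivalent": hours_freed / SHIFT_HOURS,
        "share_of_team_capacity": hours_freed / (inputs.staff_count * SHIFT_HOURS),
        "daily_cost_freed": hours_freed * inputs.loaded_hourly_cost,
    }


example = FrontDeskInputs(
    calls_per_day=800,
    staff_count=10,
    deflection_rate=0.40,      # hypothetical, within the 30–60% range cited later
    minutes_per_call=4.0,      # hypothetical
    loaded_hourly_cost=30.0,   # hypothetical
)
print(estimate_daily_savings(example))
```

Treat the output as an upper bound on reallocatable time: it assumes every deflected call would otherwise have consumed a full handle time, and it ignores vendor fees and integration costs.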

Medication Adherence and Chronic Disease Support

A peer-reviewed meta-analysis published in Frontiers in Digital Health (2025) found medication adherence improvements of 6.7–32.7% over control groups in studies using conversational AI chatbot support. The range is wide because use cases vary significantly: diabetes self-management, hypertension adherence post-discharge, and chronic pain medication management show different baseline adherence patterns.

The mechanism is access. Patients who won’t call their care manager will text a chatbot at midnight when they can’t remember if they took their metformin. The chatbot answers, logs the interaction, and flags the pattern to the care team if it recurs. That is a workflow that has no human equivalent at scale.
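The "log every interaction, flag the pattern if it recurs" workflow can be sketched as a per-patient rolling window. The event labels, the 7-day window, and the three-report threshold below are hypothetical; the sketch assumes events arrive in time order, and a real deployment would persist events and deliver flags through the care team's existing worklist rather than an in-memory list.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

WINDOW = timedelta(days=7)   # hypothetical look-back window
FLAG_THRESHOLD = 3           # hypothetical: concerning reports before flagging

# Hypothetical labels a classifier might assign to patient messages.
CONCERN_EVENTS = {"missed_dose", "unsure_if_taken"}


class AdherenceMonitor:
    def __init__(self):
        # patient_id -> timestamps of concerning reports, oldest first
        self._concerns = defaultdict(deque)
        self.audit_log = []  # every interaction is logged, flagged or not

    def record(self, patient_id: str, event_type: str, at: datetime) -> bool:
        """Log an interaction; return True if the care team should be flagged.

        Assumes events for a patient arrive in chronological order.
        """
        self.audit_log.append((patient_id, event_type, at))
        if event_type not in CONCERN_EVENTS:
            return False
        concerns = self._concerns[patient_id]
        concerns.append(at)
        # Drop reports that have aged out of the look-back window.
        while at - concerns[0] > WINDOW:
            concerns.popleft()
        return len(concerns) >= FLAG_THRESHOLD


monitor = AdherenceMonitor()
t0 = datetime(2025, 3, 1, 23, 50)  # a late-night "did I take it?" message
first = monitor.record("pt-001", "unsure_if_taken", t0)
second = monitor.record("pt-001", "missed_dose", t0 + timedelta(days=2))
third = monitor.record("pt-001", "missed_dose", t0 + timedelta(days=4))
print(first, second, third)  # → False False True
```

A single uncertain report is just logged and answered; only a recurring pattern within the window reaches a human, which keeps care-team alerts proportionate.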

Mental Health Support

Wysa and similar CBT-based chatbots have established clinical evidence for low-acuity mental health support, stress management, and sleep hygiene — deployed in employee assistance programs and as a first layer before clinical referral. The appropriate scope is stress and emotional support, not diagnosis or crisis intervention.

The clinical boundary matters for deployment design. A chatbot that provides CBT exercises for work-related anxiety and immediately escalates to a crisis line when the user mentions self-harm is operating within an appropriate scope. A chatbot that attempts to diagnose depression or substitute for a licensed therapist is not, and may create liability that the vendor’s terms of service do not protect against.
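One way to make the escalation rule architectural rather than aspirational is to run a crisis check before any other dialogue logic, so no downstream component can skip it. The pattern list below is hypothetical and deliberately incomplete: keyword matching alone misses many real expressions of risk, and production systems would pair a validated classifier with clinically reviewed escalation protocols. The 988 Suicide & Crisis Lifeline is the real US crisis line; `handle_message`, `cbt_flow`, and the other names are placeholders.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately incomplete patterns for illustration only.
CRISIS_PATTERNS = [
    r"\bkill myself\b",
    r"\bsuicid\w*",
    r"\bself[- ]harm\b",
    r"\bhurt myself\b",
    r"\bend my life\b",
]
_CRISIS_RE = re.compile("|".join(CRISIS_PATTERNS), re.IGNORECASE)

CRISIS_MESSAGE = (
    "It sounds like you may be going through something serious. "
    "You can call or text 988 (Suicide & Crisis Lifeline) right now, "
    "or call 911 if you are in immediate danger. "
    "I'm connecting you with a person on our care team."
)


@dataclass
class BotReply:
    text: str
    escalated: bool


def handle_message(text: str, cbt_flow) -> BotReply:
    """Crisis check runs first; the CBT flow only sees messages that pass it."""
    if _CRISIS_RE.search(text):
        # Hypothetical hook: page on-call staff, open a handoff ticket, etc.
        return BotReply(CRISIS_MESSAGE, escalated=True)
    return BotReply(cbt_flow(text), escalated=False)
```

Placing the check in front of the dialogue flow, rather than inside a model prompt, means a prompt edit or model swap cannot silently remove it.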

ROI Framework for Health System Leaders

The CFO conversation around healthcare chatbots typically focuses on three data points:

- Call deflection: What percentage of inbound call volume can the chatbot handle without human intervention? Industry benchmarks range from 30–60% for scheduling-focused deployments.

- No-show reduction: After-hours booking capability directly reduces no-shows by converting would-be voicemails into confirmed appointments. A 5% reduction in the no-show rate on a 200-bed hospital’s outpatient schedule represents significant net revenue.

- Labor savings: Front-desk time freed from repetitive scheduling, eligibility, and FAQ calls can be reallocated to tasks requiring human judgment.
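To turn the no-show lever into a number, you need your own scheduled visit volume, baseline no-show rate, and average net revenue per completed visit. Every input in the sketch below is hypothetical. It treats the rate change in percentage points (for example, 18% falling to 13%); confirm which definition your finance team means, because a 5% *relative* reduction is a much smaller change.

```python
def recovered_visit_revenue(
    scheduled_visits_per_year: int,
    baseline_no_show_rate: float,
    new_no_show_rate: float,
    net_revenue_per_visit: float,
) -> float:
    """Annual net revenue from visits that now happen instead of no-showing."""
    if not 0 <= new_no_show_rate <= baseline_no_show_rate <= 1:
        raise ValueError("expected 0 <= new rate <= baseline rate <= 1")
    recovered_visits = scheduled_visits_per_year * (
        baseline_no_show_rate - new_no_show_rate
    )
    return recovered_visits * net_revenue_per_visit


# Hypothetical inputs: 150,000 scheduled outpatient visits per year,
# no-show rate falling from 18% to 13%, $120 net revenue per completed visit.
print(round(recovered_visit_revenue(150_000, 0.18, 0.13, 120.0)))
```

Combined with the labor estimate, this gives the gross side of the business case; licensing, integration, and ongoing support costs still need to be subtracted.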

Assembling this into a business case requires your actual call volume and labor cost data. TATEEDA’s healthcare IT consulting team can help model these numbers against your specific deployment scenario.

HIPAA Compliance: What Every Healthcare Chatbot Deployment Requires

When a BAA Is Required (and What It Must Cover)

Any chatbot that accesses, processes, or stores Protected Health Information (PHI) — including names, dates of service, diagnosis codes, appointment records, or prescription history — requires a signed Business Associate Agreement (BAA) with the vendor before go-live. This is not optional and is not covered by the vendor’s standard privacy policy.

What most health systems discover too late is that not all BAAs are equivalent. The Health Insurance Portability and Accountability Act (HIPAA) defines minimum BAA requirements, but those requirements were written before large language models existed. Your vendor’s BAA must explicitly address:

- Data storage scope: Where is PHI stored, in what format, and for how long?

- Transmission encryption: What encryption standards govern data in transit (minimum TLS 1.2)?

- Subprocessors: Does the vendor use third-party cloud infrastructure or AI APIs that themselves touch PHI? Each requires a BAA chain.

- Model training on PHI: Does the vendor use patient conversations to fine-tune or improve their AI model? If yes, under what legal basis and with what consent?

- Data residency: Is all data stored within the United States, or does the vendor’s infrastructure cross borders?

- Breach notification timeline: The HIPAA Breach Notification Rule requires notice within 60 days of discovery. Your BAA should confirm the vendor’s obligation to notify you promptly enough for you to meet that deadline.
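One way to make these six questions non-skippable is to track them as a structured review record that cannot be marked ready until every clause has a citation into the BAA. This is a hypothetical internal tool, not a legal standard: the clause keys simply mirror the list above, and the rule that model training on PHI must be explicitly prohibited is an example policy choice, not a HIPAA requirement.

```python
from dataclasses import dataclass, field

# Keys mirror the six BAA topics listed above.
REQUIRED_CLAUSES = {
    "data_storage_scope": "Where PHI is stored, in what format, and for how long",
    "transmission_encryption": "Encryption in transit (TLS 1.2 or higher)",
    "subprocessors": "Downstream BAAs for every subprocessor that touches PHI",
    "model_training_on_phi": "Whether conversations are used to train models",
    "data_residency": "Whether all data stays within the United States",
    "breach_notification": "Vendor notice timeline that lets you meet 60 days",
}


@dataclass
class BaaReview:
    vendor: str
    # clause key -> citation to the BAA section that addresses it
    findings: dict = field(default_factory=dict)
    # Hypothetical policy choice: training on PHI must be explicitly prohibited.
    training_on_phi_prohibited: bool = False

    def open_items(self) -> list:
        """Clauses still missing a BAA citation, plus any policy failures."""
        missing = [key for key in REQUIRED_CLAUSES if not self.findings.get(key)]
        if "model_training_on_phi" not in missing and not self.training_on_phi_prohibited:
            missing.append("model_training_on_phi (not prohibited)")
        return missing

    def ready_for_signature(self) -> bool:
        return not self.open_items()
```

Requiring a section citation, rather than a yes/no checkbox, is what would have caught a training clause sitting in the master services agreement instead of the BAA.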

Real-World Case: The BAA Gap

A health system’s legal team discovered six months after go-live that their chatbot vendor’s BAA did not cover model training. Patient conversations were potentially being used to fine-tune the vendor’s shared model, a practice disclosed in paragraph 14 of the master services agreement rather than in the BAA itself. The health system negotiated a contract amendment, implemented technical controls to restrict data export to the vendor’s training pipeline, and notified its Chief Compliance Officer. The remediation took four months. A BAA review before signing would have taken four hours.

Technical Safeguards for HIPAA-Compliant Chatbot Deployments

Beyond the vendor contract, your deployment architecture must include:

- Encryption in transit and at rest: TLS 1.2 or higher in transit; AES-256 encryption at rest for all PHI stored by the chatbot system.

- Session management: Automatic timeout for PHI-handling interfaces after inactivity. A patient portal chatbot that remains active on an unlocked shared device is a HIPAA exposure.

- Audit logging: Every PHI access must be logged, capturing which user accessed what, when, and what the chatbot returned. Retain these logs for at least six years, consistent with HIPAA’s six-year documentation retention requirement.

- Role-based access control: Clinician-facing chatbot functions that surface patient history should require authentication and enforce minimum-necessary access based on the user’s clinical role.
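Several of these safeguards reduce to small, testable pieces of code. The Python sketch below enforces a TLS 1.2 floor on outbound connections using the standard `ssl` module, expires idle sessions, and emits one JSON audit line per PHI access with a retention date at least six years out. The 15-minute idle timeout, the log fields, and the `Session`/`audit` names are hypothetical; AES-256 encryption at rest is normally handled by the database or storage layer and is not shown.

```python
import json
import ssl
from dataclasses import asdict, dataclass
from datetime import datetime, timedelta, timezone

SESSION_IDLE_TIMEOUT = timedelta(minutes=15)   # hypothetical policy value
# At least six calendar years: 6 x 365 days plus up to two leap days.
LOG_RETENTION = timedelta(days=6 * 365 + 2)


def make_client_tls_context() -> ssl.SSLContext:
    """TLS client context with certificate checks that refuses TLS < 1.2."""
    ctx = ssl.create_default_context()  # hostname and certificate verification on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


@dataclass
class Session:
    user_id: str
    role: str
    last_activity: datetime

    def is_expired(self, now: datetime) -> bool:
        return now - self.last_activity > SESSION_IDLE_TIMEOUT


@dataclass
class AuditEvent:
    timestamp: str
    user_id: str
    role: str
    resource: str          # e.g. "Appointment/123" (illustrative)
    action: str            # "read", "write", ...
    response_summary: str  # what the chatbot returned; the log itself is PHI
    retain_until: str


def audit(session: Session, resource: str, action: str, summary: str,
          now: datetime) -> str:
    """Return one append-only JSON log line describing a PHI access."""
    event = AuditEvent(
        timestamp=now.isoformat(),
        user_id=session.user_id,
        role=session.role,
        resource=resource,
        action=action,
        response_summary=summary,
        retain_until=(now + LOG_RETENTION).isoformat(),
    )
    return json.dumps(asdict(event))
```

Because the audit lines contain PHI themselves, the log store needs the same access controls and encryption as the chatbot's primary data.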

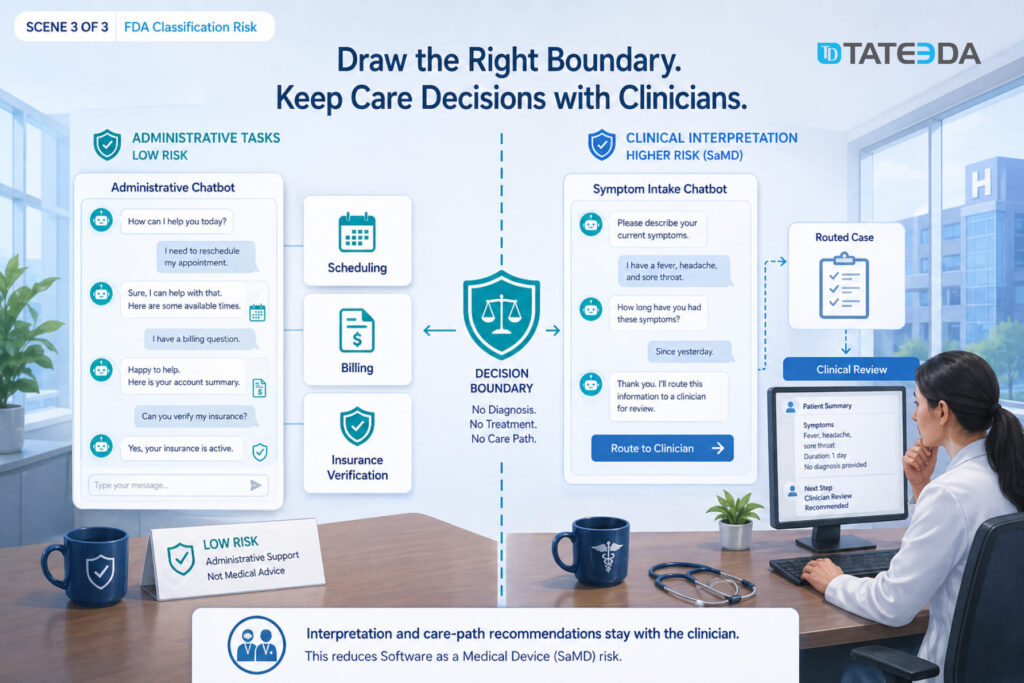

FDA Classification Risk

A healthcare chatbot that interprets symptoms and recommends a care pathway may meet the FDA’s definition of Software as a Medical Device (SaMD). The FDA uses the International Medical Device Regulators Forum (IMDRF) definition: software intended to be used for one or more medical purposes, such as diagnosing, preventing, monitoring, treating, or alleviating disease, that performs those purposes without being part of a hardware medical device.

Symptom triage that generates a recommendation based on clinical criteria falls into FDA classification risk territory. Administrative chatbots that handle scheduling, billing inquiries, and insurance verification are generally outside SaMD scope. The architectural implication: a triage chatbot should collect symptoms and route them to a clinician for interpretation, not generate the interpretation itself. Building that boundary into the system architecture, not just the terms of service, is what supports the position that the product falls outside FDA device regulation.
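In code, the collect-versus-interpret boundary can be made structural: the triage bot's output type simply has no field for a diagnosis, urgency score, or care recommendation, and every record lands in a clinician queue. The class and queue names below are hypothetical, and whether a given design falls outside SaMD scope is a regulatory judgment that a data model cannot settle on its own.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from queue import Queue


@dataclass(frozen=True)
class SymptomIntake:
    """What the chatbot is allowed to produce: the patient's own report.

    Deliberately has no fields for diagnosis, urgency, or care pathway;
    a clinician assigns those after reviewing the intake.
    """
    patient_ref: str
    chief_complaint: str     # patient's words, verbatim
    onset: str               # patient's words, e.g. "since last night"
    answers: tuple = ()      # (question, answer) pairs from a fixed script
    collected_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


# Hypothetical stand-in for a nurse triage worklist.
nurse_triage_queue: "Queue[SymptomIntake]" = Queue()


def submit_intake(intake: SymptomIntake) -> str:
    """Route every intake to a human; the bot's reply is an acknowledgement only."""
    nurse_triage_queue.put(intake)
    return "Thanks. A nurse will review your answers and contact you."
```

Freezing the record means nothing later in the pipeline can quietly attach a model-generated conclusion to what the patient reported.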

For teams building custom chatbot workflows in this area, TATEEDA’s experience with custom AI development for healthcare includes FDA classification analysis as part of the architecture review process.

EHR Integration: What It Actually Requires

Healthcare chatbots described as “EHR-integrated” in vendor marketing can mean anything from a live bidirectional Fast Healthcare Interoperability Resources (FHIR) API connection to a nightly flat-file export. The difference matters enormously for patient safety and operational accuracy.

A scheduling chatbot that books appointments without checking provider availability in the Electronic Health Record (EHR) creates duplicate bookings and blocked schedules, and generates staff corrections that eliminate the efficiency gain. A medication adherence chatbot working from a 24-hour-old medication record may tell a patient they haven’t taken a dose when they have, prompting a double dose. These are not edge cases; they are the expected failure modes of chatbots deployed without live EHR integration.

FHIR R4 for Modern EHR Environments

Health systems running Epic, Oracle Health (formerly Cerner), or athenahealth on current versions can connect chatbots via FHIR R4 APIs with SMART on FHIR authorization. This provides OAuth 2.0 authentication flows that verify patient or clinician identity before granting EHR access, scoped resource access so the chatbot reads only what it needs, and real-time data — the chatbot sees the same appointment slots and patient data as a front-desk staff member.
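To make "real-time" concrete: before offering a time, the chatbot can query the EHR's FHIR R4 `Slot` endpoint for free slots, then book against a specific slot. The sketch below only builds the search URL, parses a `Bundle` response, and builds the `Appointment` payload, so it runs without a server. The base URL and IDs are hypothetical, and EHRs differ in which search parameters and booking mechanisms they support; some expose vendor-specific scheduling operations rather than a plain `POST` of an `Appointment`.

```python
from urllib.parse import urlencode

FHIR_BASE = "https://ehr.example.org/fhir/R4"  # hypothetical endpoint


def free_slot_search_url(schedule_id: str, start_on_or_after: str) -> str:
    """FHIR R4 search for free slots on one schedule, from a date onward."""
    params = {
        "schedule": f"Schedule/{schedule_id}",
        "status": "free",
        "start": f"ge{start_on_or_after}",
    }
    return f"{FHIR_BASE}/Slot?{urlencode(params)}"


def free_slots(bundle: dict) -> list:
    """Free Slot resources from a searchset Bundle, earliest first.

    Sorting on the ISO 8601 strings assumes they share one UTC offset.
    """
    resources = [entry.get("resource", {}) for entry in bundle.get("entry", [])]
    slots = [r for r in resources
             if r.get("resourceType") == "Slot" and r.get("status") == "free"]
    return sorted(slots, key=lambda slot: slot["start"])


def appointment_for_slot(slot: dict, patient_id: str) -> dict:
    """Minimal R4 Appointment booked against the slot the patient chose.

    A booked Appointment is expected to carry start and end (R4 invariant
    app-3), so they are copied from the slot.
    """
    return {
        "resourceType": "Appointment",
        "status": "booked",
        "start": slot["start"],
        "end": slot["end"],
        "slot": [{"reference": f"Slot/{slot['id']}"}],
        "participant": [
            {"actor": {"reference": f"Patient/{patient_id}"}, "status": "accepted"}
        ],
    }
```

Repeat the search (or rely on the EHR rejecting a conflicting booking) immediately before submitting, because phone staff and portal users can claim the same slot in the meantime.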

SMART on FHIR scopes define exactly what the chatbot can read or write. A scheduling chatbot should have read access to provider availability and write access to the appointment resource — nothing else. This principle of minimum necessary access is both a HIPAA requirement and a sound security design for AI systems.
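A sketch of what minimum-necessary scopes might look like in a SMART on FHIR authorization request for the scheduling bot described above. Scope strings use SMART v1 syntax (for example `patient/Appointment.write`); SMART v2 expresses the same intent with `.cu`/`.rs` suffixes, and how a patient-facing app is granted read access to `Slot` and `Schedule` varies by EHR, so treat the scope list, client ID, and endpoints as hypothetical. The request uses PKCE with the S256 method, and the function refuses to build a request containing any scope outside the allowlist.

```python
import base64
import hashlib
import secrets
from urllib.parse import urlencode

AUTHORIZE_URL = "https://ehr.example.org/oauth2/authorize"  # hypothetical
FHIR_BASE = "https://ehr.example.org/fhir/R4"               # hypothetical

# Hypothetical minimum-necessary allowlist for a scheduling bot (SMART v1 syntax).
ALLOWED_SCOPES = {
    "openid", "fhirUser", "launch/patient",
    "user/Slot.read", "user/Schedule.read", "patient/Appointment.write",
}


def pkce_pair() -> tuple:
    """RFC 7636 code verifier and S256 code challenge."""
    verifier = secrets.token_urlsafe(64)  # 86 characters, within 43–128
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def authorization_url(client_id: str, redirect_uri: str, scopes: set) -> tuple:
    """Build the authorize URL; return (url, verifier, state) for the token step."""
    unexpected = scopes - ALLOWED_SCOPES  # also rejects wildcards like patient/*.read
    if unexpected:
        raise ValueError(f"scope not allowed: {sorted(unexpected)}")
    verifier, challenge = pkce_pair()
    state = secrets.token_urlsafe(16)
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": " ".join(sorted(scopes)),
        "state": state,
        "aud": FHIR_BASE,
        "code_challenge": challenge,
        "code_challenge_method": "S256",
    }
    # Keep verifier and state server-side for the token exchange and CSRF check.
    return f"{AUTHORIZE_URL}?{urlencode(params)}", verifier, state
```

Enforcing the allowlist in your own code, not only in the EHR's app registration, means a configuration change cannot quietly widen what the chatbot asks for.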

Legacy HL7 v2 for Older Environments

Health systems not yet on FHIR-capable APIs, or running older EHR versions, will need Health Level Seven (HL7) v2 interfaces. These are mature, well-understood integration patterns, but most deployments use them as one-way event feeds or scheduled batch files rather than on-demand query interfaces, so real-time chatbot functions require additional middleware to keep data current and bridge the latency.
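A minimal sketch of that middleware idea: an interface listener hands each inbound HL7 v2 scheduling message to a parser that updates a cache the chatbot reads, and the chatbot stops trusting the cache when the feed has been silent too long. Parsing follows standard HL7 v2 encoding (segments separated by carriage returns, fields by `|`, components by `^`). The sample handling of SIU^S12 (new booking) and SIU^S15 (cancellation), the use of SCH-2 as the appointment ID, and the 5-minute silence limit are assumptions to check against your interface specification.

```python
from datetime import datetime, timedelta

MAX_FEED_SILENCE = timedelta(minutes=5)  # hypothetical freshness limit
# SIU trigger events: S12 = new appointment booking, S15 = cancellation.
EVENT_STATUS = {"S12": "booked", "S15": "cancelled"}


def parse_hl7(message: str) -> dict:
    """Map each segment ID to its fields (first occurrence only).

    Fields are indexed so that fields[n] is field n of the segment (e.g. MSH-9).
    """
    segments = {}
    for raw in message.replace("\n", "\r").split("\r"):
        if not raw:
            continue
        fields = raw.split("|")
        if fields[0] == "MSH":
            # MSH-1 is the "|" separator itself, so re-insert it to align indices.
            fields = ["MSH", "|"] + fields[1:]
        segments.setdefault(fields[0], fields)
    return segments


class AppointmentCache:
    """Chatbot-facing cache fed by an HL7 v2 interface (hypothetical design)."""

    def __init__(self):
        self._status = {}             # appointment ID -> "booked" / "cancelled"
        self._last_message_at = None  # when the feed last delivered anything

    def apply(self, message: str, received_at: datetime) -> None:
        seg = parse_hl7(message)
        trigger = seg["MSH"][9].split("^")[1]   # MSH-9 "SIU^S12" -> "S12"
        sch = seg.get("SCH")
        if sch and trigger in EVENT_STATUS:
            appt_id = sch[2].split("^")[0]      # SCH-2 filler appointment ID
            self._status[appt_id] = EVENT_STATUS[trigger]
        self._last_message_at = received_at

    def status(self, appt_id: str, now: datetime):
        """Cached status, or None when the feed has been silent too long to trust."""
        if self._last_message_at is None or now - self._last_message_at > MAX_FEED_SILENCE:
            return None  # fall back to a live lookup or hand off to staff
        return self._status.get(appt_id)
```

A quiet period and a broken feed look identical to this check, which is why the fallback path matters as much as the cache.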

When evaluating a chatbot vendor, the right question is: “Which EHRs do you have production integrations with, and are those integrations FHIR R4 bidirectional or HL7 file-based?” Production integrations with your specific EHR version, in real health system deployments, are a different credential than “FHIR-compatible” in vendor marketing materials.

Where Healthcare Chatbots Fail: The Cases Vendors Don’t Discuss

Hallucination and Clinical Scope Creep

Failure Case: Symptom Triage

A digital health startup launched a symptom-checking chatbot trained on publicly available medical literature in 2024. Within three months, two separate users reported that the chatbot had told them their chest pain was likely acid reflux. Both were presenting with symptoms consistent with myocardial infarction. The chatbot had no EHR access, no physician escalation pathway, and no fallback to emergency services for high-acuity inputs. The product was pulled.

This is not an argument against symptom collection chatbots. It is an argument for architectural scope enforcement. A chatbot that collects symptom descriptions and routes to a triage nurse is a different system from one that interprets symptoms and produces clinical conclusions.

The first is defensible. The second is a liability in most regulatory environments and a patient safety risk in all of them.

LLMs produce plausible language. In clinical contexts, plausible and correct are not synonymous. “Non-diagnostic” scope must be enforced by system architecture — routing logic, escalation triggers, and hard-coded refusal to answer questions that require clinical interpretation — not by terms of service that users don’t read and plaintiffs’ attorneys will.
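A minimal sketch of scope enforcement in routing logic: high-acuity inputs get a fixed emergency instruction, questions that ask for clinical interpretation get a hard-coded refusal plus a handoff, and only the remaining requests reach the LLM. The red-flag and interpretation patterns are hypothetical and far from complete; in practice clinicians should own these lists, backed by validated classifiers. `call_llm` stands in for whatever model client you use.

```python
import re
from enum import Enum


class Route(Enum):
    EMERGENCY = "emergency"
    CLINICIAN_HANDOFF = "clinician_handoff"
    LLM = "llm"


# Hypothetical, illustrative patterns only.
RED_FLAGS = re.compile(
    r"chest (pain|pressure|tightness)|can'?t breathe|trouble breathing|"
    r"face (is )?drooping|slurred speech|severe bleeding",
    re.IGNORECASE,
)
INTERPRETATION_REQUESTS = re.compile(
    r"\b(do i have|is it|could it be|what('s| is) wrong with me|"
    r"should i (take|stop|double)|is this (normal|serious))\b",
    re.IGNORECASE,
)

EMERGENCY_TEXT = ("These symptoms can be serious. Call 911 or go to the "
                  "nearest emergency department now.")
REFUSAL_TEXT = ("I can't interpret symptoms or give medical advice. "
                "I'm sending your question to a nurse who can.")


def route(message: str) -> Route:
    # Order matters: acuity is checked before anything else.
    if RED_FLAGS.search(message):
        return Route.EMERGENCY
    if INTERPRETATION_REQUESTS.search(message):
        return Route.CLINICIAN_HANDOFF
    return Route.LLM


def respond(message: str, call_llm) -> str:
    r = route(message)
    if r is Route.EMERGENCY:
        return EMERGENCY_TEXT   # fixed text; the LLM never sees this turn
    if r is Route.CLINICIAN_HANDOFF:
        return REFUSAL_TEXT     # plus a hypothetical handoff ticket
    return call_llm(message)
```

The interpretation patterns are intentionally broad ("is it" also catches harmless questions); sending an occasional benign question to a nurse is a far cheaper error than letting the model answer a clinical one.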

EHR Integration Failures

The second most common failure pattern: chatbots deployed with incomplete or asynchronous EHR integration that produce incorrect information because they are working from stale data. Scheduling bots that don’t check real-time provider availability create double-bookings. Medication chatbots working from 24-hour-old records send adherence reminders for medications that were discontinued at discharge.

These failures are not technology problems. They are integration scope problems that appear in demos built against clean test data and then manifest in production against real EHR complexity: provider availability that changes hourly, medication lists updated mid-encounter, and insurance coverage that varies by service type.
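One way to keep a reminder job from acting on stale data is to check the age of its copy of the medication order at send time and act only when the order is current and active. The sketch uses the FHIR R4 `MedicationRequest.status` values (`active`, `on-hold`, `cancelled`, `completed`, `entered-in-error`, `stopped`, `draft`, `unknown`); the 15-minute freshness limit and the choice to hold ambiguous cases for review are hypothetical policy decisions.

```python
from datetime import datetime, timedelta

MAX_DATA_AGE = timedelta(minutes=15)  # hypothetical freshness limit

# Statuses meaning the order should no longer generate reminders.
INACTIVE = {"stopped", "cancelled", "completed", "entered-in-error"}


def reminder_decision(med_request: dict, fetched_at: datetime, now: datetime) -> str:
    """Decide whether a dose reminder may go out for one MedicationRequest.

    fetched_at is when our copy was read from the EHR, not when it last changed.
    """
    if now - fetched_at > MAX_DATA_AGE:
        return "refetch"            # copy too old: re-read from the EHR first
    status = med_request.get("status")
    if status == "active":
        return "send"
    if status in INACTIVE:
        return "suppress"           # e.g. discontinued at discharge
    return "hold_for_review"        # on-hold, draft, unknown: let a human decide
```

The default is to do nothing and ask: a reminder that goes out late is a minor inconvenience, while a reminder for a discontinued drug is exactly the failure described above.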

Staff Bypass and Patient Distrust

Staff route around chatbots when the system doesn’t fit their workflow. Front-desk staff who find it faster to book directly in Epic than to field a chatbot handoff will develop unofficial bypass procedures within weeks of go-live. This is not resistance to technology — it is a rational response to a workflow that adds friction.

Patient distrust emerges when chatbot responses contradict what their physician told them. Recovering that trust is harder than building it the first time.

Deployment success requires clinical workflow integration, not just technical integration, which means involving front-desk staff, care coordinators, and clinical informaticists in the design process before any vendor contract is signed.

Build vs. Buy: The Decision Framework for Health Systems

The build-vs.-buy question for healthcare chatbots comes down to complexity, customization requirements, and compliance ownership.

When SaaS is the right choice…

- Single-use-case deployments with standard EHR integrations

- Community hospitals without dedicated engineering teams

- Standard EHR environments with certified vendor integrations

- 30–90 day deployment target; willing to accept vendor-defined constraints

When custom development is right…

- Multi-EHR environments with no single vendor integration

- CCPA / CPRA or state-specific data residency requirements

- Branded patient experience with deep clinical workflow integration

- Chatbot capability as a core product differentiator, not a commodity feature

What to look for in a development partner for custom chatbot work: HIPAA-compliant practices by default (BAA signed before code is written), demonstrated EHR integration experience with your specific EHR version, FDA SaMD classification experience for any triage-adjacent functionality, and engineers who have shipped healthcare software in production — not engineers learning HIPAA terminology on your project.

FAQ: AI Chatbots in Healthcare

What is the difference between an AI chatbot and an AI agent in healthcare?

An AI chatbot is a conversational interface that responds to user input in natural language — booking appointments, answering questions, collecting symptoms, and routing to humans when needed. An AI agent is an autonomous workflow executor that takes multi-step actions across systems without human instruction for each step — submitting prior authorizations, updating EHR records, or managing care coordination end-to-end. The two are architecturally distinct and are typically deployed for different use cases, though they can work together in integrated care platforms.

Do AI chatbots in healthcare require FDA clearance?

It depends on the function. Chatbots that collect administrative information, book appointments, or deliver general health education are generally not subject to FDA oversight. Chatbots that interpret symptoms, produce clinical recommendations, or influence clinical decision-making may meet the FDA’s definition of Software as a Medical Device (SaMD) and require FDA marketing authorization, typically through 510(k) clearance or De Novo classification. The architectural distinction between collecting and interpreting determines the regulatory risk. Review your use case against the FDA’s Clinical Decision Support Software guidance and its AI/ML Software Action Plan before finalizing your design.

Does my chatbot vendor need to sign a HIPAA Business Associate Agreement?

Yes, if the chatbot accesses, processes, or stores any PHI. This includes names, appointment dates, diagnosis codes, insurance information, and medication history. A vendor’s privacy policy does not substitute for a BAA. The BAA must explicitly address data storage, subprocessors, model training on PHI, data residency, and breach notification obligations. Review the BAA with healthcare legal counsel, not just your procurement team.

How do healthcare chatbots integrate with Epic or Oracle Health?

Modern EHR integrations use FHIR R4 APIs with SMART on FHIR authorization for real-time, scoped data access. This allows the chatbot to check appointment availability, confirm patient demographics, and read relevant clinical data using OAuth 2.0-authenticated API calls, without exposing the full patient record. Health systems running older EHR versions use HL7 v2 interfaces with middleware to approximate real-time behavior. Verify your vendor’s integration type — FHIR R4 bidirectional vs. HL7 file-based — before contract execution.

What are the biggest risks of deploying a healthcare AI chatbot?

The three highest-risk failure modes: (1) deploying a triage chatbot without architectural scope enforcement, creating clinical liability when the system produces plausible but incorrect clinical conclusions; (2) deploying with incomplete EHR integration, creating stale-data errors that generate patient safety incidents or duplicate appointments; (3) signing a vendor BAA that does not address model training on PHI, which may result in patient conversations being used to train a shared commercial model.

How long does it take to deploy a healthcare chatbot?

A SaaS scheduling chatbot with a standard EHR integration at a community hospital can go live in 60–90 days. A custom-built chatbot with multi-EHR integration, FHIR R4 API development, clinical workflow design, and compliance review typically requires 4–8 months. The deployment timeline is primarily a function of EHR integration complexity and clinical workflow design scope, not the chatbot technology itself.